AI Task Decomposition in 2026: How Atomic Steps Beat One-Shot Prompting

One-shot prompts fail on anything past 200 lines. AI task decomposition splits complex work into atomic steps with pass/fail checks, so Claude iterates until every subtask passes. Worked examples for code, research, and planning.

Introduction

Carnegie Mellon data shows AI agent success rates jump from 22% to 89% when tasks are decomposed with explicit pass/fail criteria -- making decomposition the single highest-leverage skill for Claude Code users.

You ask Claude Code to build a full-stack web application. You give it a detailed prompt describing the features, the tech stack, and the desired outcome. It starts coding enthusiastically. An hour later, it's stuck. It's generated hundreds of lines of code, but the authentication flow is broken, the database schema has inconsistencies, and the frontend components don't connect to the API. The AI is lost in its own creation, unable to diagnose the root cause because the problem was never broken down into testable parts. This is the single-prompt wall, and it's the primary reason ambitious projects with AI assistants fail.

This frustration is exactly what developers are reporting in forums as of March 2026. The promise of autonomous AI coding modes is colliding with the reality of task ambiguity. The core issue isn't the AI's capability; it's the methodology. Asking an AI to "build an app" is like asking a new developer to do the same without a project plan, user stories, or unit tests. It's a recipe for confusion.

The solution is a concept borrowed from computer science and project management, now essential for AI collaboration: AI task decomposition. This is the process of taking a complex, high-level goal and systematically breaking it into a sequence of smaller, atomic tasks. Each atomic task has a single, clear objective and, critically, a verifiable pass/fail criterion. This structure transforms an ambiguous directive into a solvable workflow, allowing AI agents like Claude Code to execute, self-assess, and iterate with precision. It's the difference between shouting a destination into the wind and programming a GPS with turn-by-turn navigation.

What Is AI Task Decomposition?

Task decomposition breaks a complex goal into atomic, independently verifiable steps -- the structured input format that Claude, GPT-4, GitHub Copilot, and Cursor need for reliable autonomous execution.

At its heart, AI task decomposition is a structured thinking framework. It's the act of defining a hierarchy of work where the top node is your ultimate goal, and the leaf nodes are the smallest units of work that can be independently executed and validated by an AI. The term "atomic" is key here—borrowed from chemistry, it means indivisible. An atomic task cannot be broken down further without losing its meaning or the ability to be verified.

Think of it as creating a detailed recipe instead of just naming a dish. "Bake a cake" is the goal. The decomposed atomic tasks are: "1. Preheat oven to 350°F (verify oven display reads 350). 2. Grease a 9-inch pan (verify pan surface is fully coated). 3. Mix flour, sugar, baking powder in Bowl A (verify no dry ingredient lumps remain)." Each step has a clear action and a way to check if it was done correctly.

For AI agents, particularly in coding, this structure is non-negotiable for reliability. A study on autonomous software engineering agents, highlighted in a 2025 arXiv preprint from Carnegie Mellon, found that success rates on complex projects jumped from 22% to 89% when tasks were decomposed with explicit success criteria before execution began. The AI's ability to navigate complexity is directly gated by the clarity of the instructions it receives.

The Core Principles of Atomic Tasks

An atomic task isn't just a small task. It's defined by three specific characteristics that make it AI-executable:

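* Single objective: it does exactly one thing. "Create a User table with id, email, and created_at columns" is atomic. "Write the POST /api/users endpoint to create a new user" is another atomic task.

* Independently executable: it can be handed to the AI and run on its own, without bundling in neighboring work.

* Verifiable pass/fail criterion: it states a binary check. "The function calculateTotal returns the correct sum when given a test array [1,2,3]." "The React component Button renders without errors in Storybook." "The CI pipeline passes for the auth-login branch."

Without this verifiable criterion, the AI operates in the dark. It can't self-correct because it doesn't have a definition of "done."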
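As a rough illustration of pairing a task with its check, here is a minimal Python sketch. The `AtomicTask` class and the snake_case `calculate_total` function are hypothetical names chosen for this example, not part of any Claude Code API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AtomicTask:
    """One unit of work plus the check that defines 'done' (hypothetical structure)."""
    description: str
    check: Callable[[], bool]  # returns True on pass, False on fail


def calculate_total(values):
    """Implementation under test, a stand-in for the calculateTotal example."""
    return sum(values)


task = AtomicTask(
    description="calculate_total returns the correct sum for [1, 2, 3]",
    check=lambda: calculate_total([1, 2, 3]) == 6,
)

print("PASS" if task.check() else "FAIL")
```

The point of the structure is that the description and the check travel together, so a task without a check is visibly incomplete.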

Task Decomposition vs. Traditional Prompting

The shift from traditional prompting to task decomposition represents a fundamental change in how we interact with advanced AI models. It moves us from a conversational, instructional dynamic to a managerial, systems-oriented one.

| Aspect | Traditional Prompting | AI Task Decomposition |
|---|---|---|
| Unit of Work | A single, often complex, natural language prompt. | A sequence of atomic tasks with explicit criteria. |
| Feedback Loop | Implicit or manual. User must read output and give new instructions. | Explicit and automated. AI checks criteria and iterates autonomously. |
| Scope | Often ambiguous, leading to scope creep or incomplete work. | Pre-defined and bounded by the task list. |
| Best For | Simple Q&A, creative brainstorming, drafting text. | Complex, multi-step projects (coding, research, analysis, planning). |
| Failure Mode | The AI gets "lost" or produces coherent but incorrect or incomplete work. | A specific atomic task fails its check, pinpointing the exact problem. |

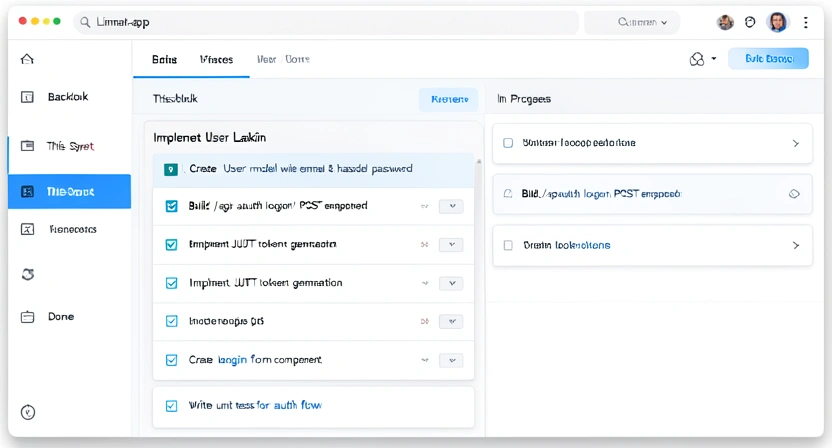

The Role of Claude Code's Autonomous Modes

The recent release of Claude Code's autonomous and multi-agent modes has turned task decomposition from a "best practice" into a "critical requirement." As discussed in our analysis of why Claude Code's autonomous mode makes old prompts obsolete, these features allow Claude to operate for extended periods, making decisions about what to work on next. Without a pre-defined map of atomic tasks, the AI has to invent its own plan on the fly, which is where the widely reported "stalling" occurs. It reaches a decision point with too many ambiguous options and no clear way to choose.

Decomposition provides the map. It tells the autonomous agent: "Here is the complete list of steps. Your job is to execute Step 1, verify it, then move to Step 2." This eliminates planning ambiguity and keeps the AI productively focused on execution. For truly complex workflows involving multiple specialists, understanding Claude Code multi-agent orchestration becomes key, where different atomic tasks can be assigned to different AI "roles" (e.g., a frontend specialist, a backend specialist, a tester).

Why Task Ambiguity Is the #1 Killer of AI Projects

Over 60% of failed AI-assisted projects stall due to ambiguous instructions, per The New Stack's 2026 survey -- not because Claude, GPT-4, or GitHub Copilot lack coding ability.

The excitement around AI coding assistants is palpable, but the gap between expectation and reality is often filled with frustration. The root cause, as identified by platforms like The New Stack in their 2026 AI Developer Survey, isn't a lack of AI intelligence; it's a failure of task specification. Developers report that over 60% of failed AI-assisted projects stall due to "ambiguous or overly broad initial instructions." Whether you use Anthropic's Claude Code, Cursor running OpenAI's GPT-4, or GitHub Copilot, the AI doesn't fail because it can't code; it fails because it doesn't know exactly what to code, or how to know if it's done.

This problem manifests in three specific, costly ways.

1. The Infinite Loop of Minor Corrections

You ask Claude to "refactor the authentication module to be more secure." It produces a new version. You notice it didn't add rate-limiting. You say, "Add rate-limiting." It updates the code but removes the password strength validator in the process. You say, "Don't remove the validator!" This back-and-forth can continue indefinitely because the original goal—"more secure"—was subjective and composite. The AI is trying to satisfy a moving target.

When decomposed, "refactor for security" becomes a list of atomic tasks:

* Task 1: Add rate-limiting logic to the login route (pass: a test confirms that requests beyond 5 in 1 minute are rejected).

* Task 2: Ensure password hashing uses bcrypt with cost factor 12 (pass: code inspection shows bcrypt.hash(password, 12)).

* Task 3: Add SQL injection protection to all user-input queries (pass: static analysis tool reports zero high-risk SQLi vulnerabilities).

Each task has a binary outcome. Claude executes Task 1, runs the test, and only moves on once it passes. There's no ambiguity, because each task's success is independently verified, and re-running the earlier checks after each later task exposes regressions like the removed validator.
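One way to guard against the validator-removal regression described earlier is to re-run every completed check after each new task. The sketch below assumes tasks are simple `(name, execute, check)` tuples; the function name `run_with_regression_checks` and the toy `features` set are illustrative only.

```python
from typing import Callable, List, Tuple

# (name, execute, check): hypothetical shape for a task in this sketch
Task = Tuple[str, Callable[[], None], Callable[[], bool]]


def run_with_regression_checks(tasks: List[Task]) -> List[str]:
    """Execute tasks in order; after each one, re-run every check seen so far."""
    completed: List[Task] = []
    for task in tasks:
        name, execute, _ = task
        execute()
        completed.append(task)
        for done_name, _, done_check in completed:
            if not done_check():
                raise RuntimeError(f"'{done_name}' failed after running '{name}'")
    return [name for name, _, _ in completed]


# Toy state standing in for the authentication module.
features = {"password_validator"}

tasks: List[Task] = [
    ("keep password validator", lambda: None,
     lambda: "password_validator" in features),
    ("add rate limiting", lambda: features.add("rate_limiting"),
     lambda: "rate_limiting" in features),
]

print(run_with_regression_checks(tasks))
```

If a later task silently removed the validator, the error message would name both the broken check and the task that broke it.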

2. The "Looks Good But Is Broken" Problem

AI is exceptionally good at producing code that is syntactically correct and looks plausible. It can generate a full React component with buttons, state hooks, and API calls that will compile without errors. However, the API endpoint it's calling might not exist, the state update logic might have a race condition, or the error handling might be absent. Because you asked for a "user profile page," and it gave you a file that renders a profile-like UI, it considers the task done.

This is where verifiable pass/fail criteria are a game-changer. The atomic task for the profile page wouldn't be "create a profile page." It would be:

* "Create a UserProfile.jsx component that fetches data from GET /api/user/me and displays the user's name and email. Pass criterion: The component renders in Storybook using mock data, and a unit test confirms it calls the correct API endpoint."

The AI must now ensure the component is not just visually correct, but functionally integrated and testable.

3. Loss of Context and Progress in Long Sessions

In Claude Code's autonomous mode, a session can span thousands of actions. Without a decomposition framework, the AI has no persistent memory of the original project architecture or the specific decisions made hours ago. It can introduce conflicting patterns, duplicate functionality, or violate earlier established conventions. It's like a builder adding rooms to a house without the blueprint, eventually creating a labyrinth.

A predefined task list acts as the blueprint and the progress tracker. It provides persistent context. The AI knows that after completing "Task 14: Implement database migration for the orders table," the next step is always "Task 15: Create the OrderService class with a createOrder method." This linear (or directed acyclic graph) progression maintains architectural coherence. For maintaining this kind of complex, stateful workflow, a structured approach to structuring atomic skills for Claude Code's autonomous refactoring is essential.

The consequence of ignoring decomposition is wasted time, eroded trust in the tool, and abandoned projects. The promise of 10x productivity becomes a reality of 0.5x productivity, spent mostly on debugging and re-prompting. The industry data is clear: structure precedes scale. You cannot automate complexity without first defining what "done" looks like for every single piece. For a hands-on walkthrough of structuring your first project with these principles, see our guide on Claude Code's autonomous mode upgrade and your first real-world project. And if you want to apply decomposition to data infrastructure specifically, our tutorial on building a self-healing data pipeline with Claude Code demonstrates the full pattern.

How to Decompose Any Problem for an AI Agent

A six-step method -- from crystal-clear objectives to machine-readable YAML skill files -- turns any complex project into a verified task DAG that Claude Code executes autonomously.

The process of task decomposition is a skill in itself. It's part systems thinking, part software engineering, and part communication. The goal is to translate the messy reality of a complex goal into a clean, sequential, and verifiable workflow. Here is a step-by-step method you can apply to any project, whether it's coding, writing a report, conducting market research, or planning a campaign.

Step 1: Define the Ultimate Objective with Crystal Clarity

Before you can break something down, you need to know exactly what "it" is. Vague goals yield vague tasks. Start by writing a single, declarative sentence that defines the final, successful outcome. Avoid verbs like "improve," "explore," or "help with." Use verbs like "build," "create," "generate," "migrate," "prove," or "compare."

* Bad: "Make the website faster."

* Good: "Reduce the Largest Contentful Paint (LCP) metric for the homepage from 4.2s to under 2.5s as measured by Google PageSpeed Insights."

* Bad: "Analyze the sales data."

* Good: "Generate a report identifying the top 3 customer segments by lifetime value and their common acquisition channels for Q1 2026."

This objective is your North Star. Every atomic task you create should be traceable back to achieving this specific outcome.

Step 2: Work Backwards from the Deliverable

Ask yourself: "What is the final artifact or state that signifies completion?" For a coding project, it might be a deployed application with a passing test suite. For a research project, it might be a formatted PDF document. List the tangible outputs.

Now, for each final deliverable, identify the immediate prerequisite. What is the very last thing that needs to happen before this is done? Then, what needs to happen before that? Continue working backwards until you reach the current state.

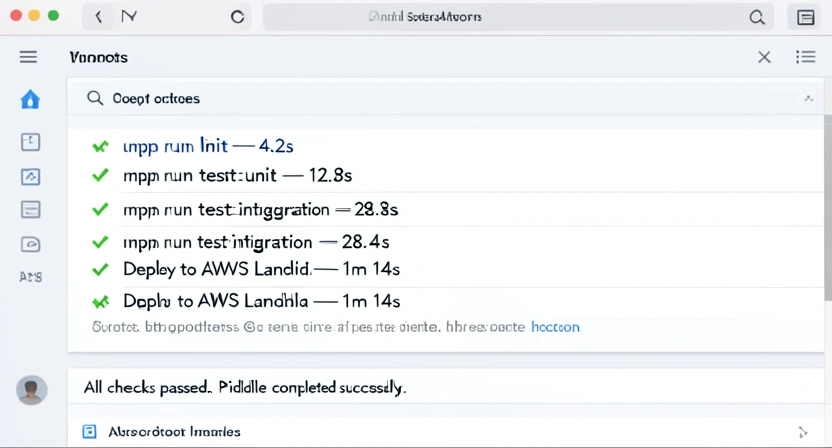

Example: Deploy a Node.js API to AWS Lambda.

* Final State: API is live at https://api.example.com.

* Prerequisite: AWS Lambda function is deployed with the latest code.

* Prerequisite: Code is packaged into a deployment-ready ZIP file.

* Prerequisite: All environment variables are configured in the deployment script.

* Prerequisite: The handler.js file is written and tested locally.

* Prerequisite: The CI/CD pipeline (e.g., GitHub Actions) is configured to run tests and deploy on push to main.

* ...and so on, back to "Initialize a new Node.js project."

This backward-chaining technique, often used in AI planning algorithms themselves, ensures you don't miss critical path dependencies.
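The backward walk can be sketched in a few lines of Python. The dictionary below abbreviates the Lambda example's states into short labels and skips the intermediate steps the list elides; `plan_backwards` is a hypothetical helper name.

```python
from typing import Dict, List, Optional

# Each state maps to its immediate prerequisite (None marks the starting point).
# Labels abbreviate the Lambda example; steps elided in the list are omitted here too.
PREREQUISITE: Dict[str, Optional[str]] = {
    "API is live": "Lambda function deployed",
    "Lambda function deployed": "Code packaged as ZIP",
    "Code packaged as ZIP": "Environment variables configured",
    "Environment variables configured": "handler.js written and tested",
    "handler.js written and tested": "CI/CD pipeline configured",
    "CI/CD pipeline configured": "Node.js project initialized",
    "Node.js project initialized": None,
}


def plan_backwards(goal: str, prerequisite: Dict[str, Optional[str]]) -> List[str]:
    """Walk from the goal back to the start, then reverse into execution order."""
    chain: List[str] = []
    seen = set()
    current: Optional[str] = goal
    while current is not None:
        if current in seen:
            raise ValueError(f"cycle detected at '{current}'")
        seen.add(current)
        chain.append(current)
        current = prerequisite[current]
    return list(reversed(chain))


for step_number, step in enumerate(plan_backwards("API is live", PREREQUISITE), start=1):
    print(step_number, step)
```

A missing key raises `KeyError`, which is useful here: it marks a prerequisite nobody has defined yet.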

Step 3: Apply the "Atomic" Test: Single Action, Verifiable Outcome

This is where you turn the prerequisite steps from Step 2 into formal atomic tasks. For each step, refine it until it meets the three criteria. A useful trick is to frame it as a command to a very literal-minded junior developer.

Take the prerequisite: "The handler.js file is written and tested locally."

This is still composite. Let's decompose it:

* Create a handler.js file with an exported function that handles an HTTP event and returns a JSON response. Pass Criterion: File exists and exports a function called handler. A simple syntax check (node -c handler.js) passes.

* Write a test for the handler function using Jest that mocks an event and checks the response structure. Pass Criterion: The test file exists and the test passes when run (npm test).

Each of these tasks can be given to Claude Code independently. Each has a clear, automated way to check for success. This level of granularity is what enables true autonomy.

Step 4: Choose and Integrate Verification Tools

The pass/fail criterion is only as good as the tool that checks it. Part of your decomposition process is identifying the verification mechanism for each task type. This is where you leverage the existing ecosystem of developer tools.

* For Code Functionality: Unit test frameworks (Jest, Pytest), linters (ESLint, Pylint), static analysis (SonarQube, CodeQL), and type checkers (TypeScript tsc, MyPy).

* For API Endpoints: Integration test suites (Supertest, Postman Collections), contract testing (Pact), or simple cURL commands checking for a 200 status.

* For Data & Research: Data validation libraries (Pandas schema checks, Great Expectations), or logic checks in scripts (e.g., "assert report contains section 'Conclusions'").

* For Content & Writing: Grammar checkers, plagiarism detectors, or keyword density analyzers.

When crafting your atomic task, explicitly name the tool and the command. Instead of "verify the code works," write: "Pass criterion: All Jest tests in the auth/ directory pass (npm run test:auth)." This gives Claude an executable validation step. For a broader look at crafting instructions that work, our guide on AI prompts for developers dives deeper into this command-style communication.
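A named command becomes a pass/fail check once something interprets its exit code. The following is a minimal sketch using Python's standard `subprocess` module; `command_passes` is a hypothetical helper, and the Python interpreter stands in for a real test command so the example runs anywhere.

```python
import subprocess
import sys
from typing import List


def command_passes(command: List[str], timeout_seconds: int = 120) -> bool:
    """Run a verification command; exit code 0 means pass, anything else fail."""
    try:
        result = subprocess.run(command, capture_output=True, text=True,
                                timeout=timeout_seconds)
    except (FileNotFoundError, subprocess.TimeoutExpired):
        return False  # a missing tool or a hung check counts as a fail
    return result.returncode == 0


# In a real project the command might be ["npm", "run", "test:auth"].
print(command_passes([sys.executable, "-c", "assert 1 + 1 == 2"]))
print(command_passes([sys.executable, "-c", "assert 1 + 1 == 3"]))
```

Treating a missing tool as a failure is a deliberate choice in this sketch: a check that cannot run has not passed.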

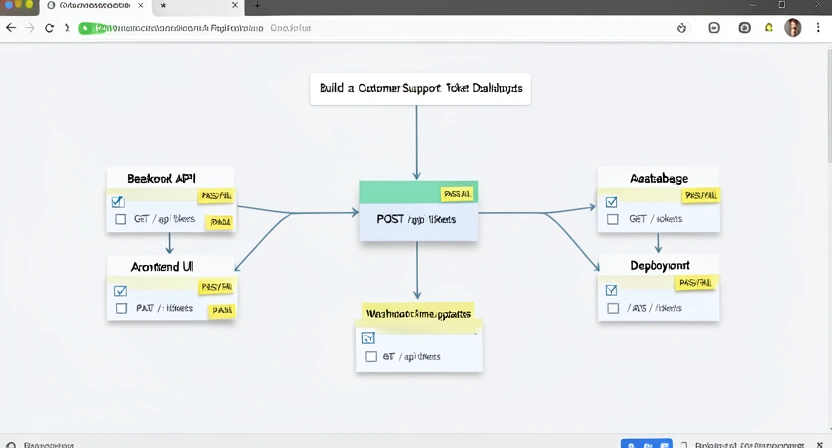

Step 5: Sequence the Tasks and Identify Parallelism

With a list of atomic tasks, you now have a workflow. Arrange them in a logical execution order based on dependencies. Some tasks will be sequential (you can't test the API before building it). Others can be parallelized (writing the frontend component and the backend endpoint can happen concurrently if the API contract is defined first).

This is where you can leverage Claude Code's multi-agent potential. Sequential tasks form a single agent's timeline. Independent parallel tasks can be assigned to different, specialized agents working simultaneously. For instance, while one agent works on "Task 8: Design the database schema," another can start on "Task 9: Create the OpenAPI specification document," as long as both reference a shared "Task 7: Define core data models."

You can visualize this as a simple directed acyclic graph (DAG). Tools like the Ralph Loop Skills Generator essentially automate the creation of these verified task DAGs, which you can then feed directly into Claude Code's autonomous orchestration. You can explore a library of such pre-built workflows in our hub for Claude skills.
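For readers who want to see the DAG idea concretely, Python's standard-library `graphlib` can group tasks into batches that are safe to run in parallel. This is a sketch only: the task labels abbreviate Tasks 7 to 9 from the example, and `parallel_batches` is a hypothetical helper, not how any particular tool builds its graph.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# task -> set of tasks it depends on (labels abbreviate the example above)
DEPENDENCIES: Dict[str, Set[str]] = {
    "Task 7: core data models": set(),
    "Task 8: database schema": {"Task 7: core data models"},
    "Task 9: OpenAPI document": {"Task 7: core data models"},
}


def parallel_batches(dependencies: Dict[str, Set[str]]) -> List[List[str]]:
    """Group tasks into batches; everything inside one batch can run concurrently."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the graph is not a DAG
    batches: List[List[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        batches.append(ready)
        sorter.done(*ready)
    return batches


for batch in parallel_batches(DEPENDENCIES):
    print(batch)
```

Here the first batch holds only Task 7, and Tasks 8 and 9 land together in the second batch because neither depends on the other.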

Step 6: Package and Execute

Your final output is a structured task list. This can be a JSON file, a YAML configuration, or a simple markdown checklist. The key is that it's machine-readable enough for the AI to parse. A common format is:

    project: "LCP Optimization"
    objective: "Reduce homepage LCP to <2.5s"
    tasks:
      - id: 1
        description: "Audit current assets with Lighthouse"
        command: "npx lighthouse https://example.com --view"
        pass_criterion: "Lighthouse report is generated and shows LCP value."
      - id: 2
        description: "Identify and compress images > 200KB"
        # find's -name does not expand braces, so each extension is listed separately
        command: >-
          find ./public \( -name '*.jpg' -o -name '*.png' \) -size +200k
          -exec convert {} -resize 1200x -quality 80 {} \;
        pass_criterion: "No image in ./public is larger than 200KB."
      - id: 3
        description: "Implement lazy loading for below-the-fold images"
        command: "Update Next.js config to use next/image component with loading='lazy'"
        pass_criterion: "Page uses next/image and Lighthouse audit 'Defer offscreen images' passes."

You then provide this task list, along with the project context and codebase, to Anthropic's Claude Code (or adapt it for Cursor, GitHub Copilot, or any GPT-4-based agent). Your instruction becomes: "Execute the following project plan. Begin with Task 1. After completing each task, verify the pass criterion before proceeding to the next task ID. If a task fails, attempt to debug and fix it before moving on."
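Before handing a plan to an agent, it can help to confirm the file is actually machine-readable. The sketch below uses the JSON variant of the format (so it needs only the standard library) and a hypothetical `load_task_list` helper; the required field names mirror the example plan and are an assumption about what a plan must contain.

```python
import json
from typing import Any, Dict, List

REQUIRED_FIELDS = ("id", "description", "pass_criterion")


def load_task_list(text: str) -> List[Dict[str, Any]]:
    """Parse a JSON plan and reject tasks with missing fields or out-of-order ids."""
    plan = json.loads(text)
    tasks = plan["tasks"]
    for position, task in enumerate(tasks, start=1):
        missing = [field for field in REQUIRED_FIELDS if not task.get(field)]
        if missing:
            raise ValueError(f"task at position {position} is missing {missing}")
        if task["id"] != position:
            raise ValueError(f"expected id {position}, found {task['id']}")
    return tasks


PLAN_JSON = """
{
  "project": "LCP Optimization",
  "objective": "Reduce homepage LCP to <2.5s",
  "tasks": [
    {"id": 1, "description": "Audit current assets",
     "pass_criterion": "Lighthouse report is generated and shows LCP value."},
    {"id": 2, "description": "Identify and compress images > 200KB",
     "pass_criterion": "No image in ./public is larger than 200KB."}
  ]
}
"""

for task in load_task_list(PLAN_JSON):
    print(task["id"], task["description"])
```

Rejecting a task with no `pass_criterion` at load time is cheaper than discovering mid-run that the agent has no definition of "done" for it.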

You have now moved from being a prompt writer to a project manager. The AI handles the execution, and you've provided the perfect plan.

Proven Strategies for Effective AI Task Management

Test-first decomposition, tiered validation gates, rollback design, and AI-assisted plan refinement are the four advanced tactics that separate 10x Claude Code results from average outcomes.

Decomposition is the foundation, but mastery comes from strategy. How you structure, validate, and recover from failures within your atomic task framework determines whether your AI collaboration is merely functional or truly transformative. These advanced tactics move beyond the basic checklist.

Strategy 1: The "Test-First" Decomposition Mandate

The most powerful shift you can make is to define the verification before the implementation task. This is the AI equivalent of Test-Driven Development (TDD).

Don't create a task that says: "Write a function to calculate user engagement score." Instead, create two sequential tasks:

* Task A: "Write a test file test_engagement.py with a test test_calculate_engagement_score. The test should define mock user data and assert the expected score. Pass criterion: The test file exists and runs, but fails because the function doesn't exist yet."

* Task B: "Implement the calculate_engagement_score function in engagement.py. Pass criterion: The test created in Task A now passes."

This forces absolute clarity about the desired behavior before a single line of implementation is written. It gives Claude a precise, binary target to hit. The failing test from Task A is not a bug; it's the specification.
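The fail-then-pass sequence can be shown in one self-contained file. In a real project the test would live in test_engagement.py and import from engagement.py; here both sit together so the sketch runs as-is. The scoring rule (1 point per login, 3 per post) and the mock data are invented purely for illustration.

```python
def test_calculate_engagement_score():
    """Task A: the specification, written before any implementation exists."""
    mock_user = {"logins": 4, "posts": 2}
    assert calculate_engagement_score(mock_user) == 10


def run_test() -> str:
    """Report PASS or FAIL the way a test runner would."""
    try:
        test_calculate_engagement_score()
        return "PASS"
    except (NameError, AssertionError):
        return "FAIL"


before = run_test()  # the function is not defined yet, so the test fails


def calculate_engagement_score(user: dict) -> int:
    """Task B: hypothetical scoring rule, 1 point per login and 3 per post."""
    return user["logins"] + 3 * user["posts"]


after = run_test()
print(before, "->", after)
```

The first result is the expected failure from Task A; the second confirms Task B met the specification.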

Strategy 2: Implement Tiered Validation Gates

Not all verification is equal. Some checks are fast and cheap (syntax, linting). Others are slow and expensive (full integration tests, performance benchmarks). Structure your task flow to use fast "gates" before allowing slower processes to run.

Structure your workflow in phases:

* Phase 1 - Commit Gate: Atomic tasks for code generation must pass a syntax and lint check. This catches obvious errors instantly.

* Phase 2 - Unit Gate: After a module is written, its dedicated unit tests must pass. This validates logic in isolation.

* Phase 3 - Integration Gate: After related modules are complete, run integration tests that check how they work together.

* Phase 4 - Acceptance Gate: Finally, run end-to-end tests or performance benchmarks that validate the overall objective.

This prevents the AI from wasting hours of autonomous time only to fail on a basic syntax error introduced at the beginning. You can model this by making each phase's gate a prerequisite for the next phase's tasks, so nothing in Phase 2 starts until the Phase 1 gate has passed. For complex validations, looking at prompts designed for multi-step validation can provide useful patterns.
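The gate ordering amounts to a short-circuit: run the cheap checks first and stop at the first failure. A minimal sketch, with hypothetical names (`first_failing_gate`, `make_gate`) and stubbed checks that simply record when they were called:

```python
from typing import Callable, List, Optional, Tuple

Gate = Tuple[str, Callable[[], bool]]


def first_failing_gate(gates: List[Gate]) -> Optional[str]:
    """Run gates in the given (cheapest-first) order; stop at the first failure."""
    for name, check in gates:
        if not check():
            return name  # later, more expensive gates are never started
    return None


calls: List[str] = []  # records which gates actually ran


def make_gate(name: str, passes: bool) -> Gate:
    """Build a stub gate whose outcome is fixed, for demonstration only."""
    def check() -> bool:
        calls.append(name)
        return passes
    return (name, check)


gates = [
    make_gate("commit gate", True),
    make_gate("unit gate", False),
    make_gate("integration gate", True),
    make_gate("acceptance gate", True),
]

failed = first_failing_gate(gates)
print(failed, calls)
```

Because the unit gate fails, the integration and acceptance stubs never run, which is exactly the time saving the phases are meant to provide.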

Strategy 3: Design for Rollback and Isolated Failure

A key principle in robust systems is that a failure in one component should not catastrophically break the entire system. Apply this to your task design.

* Isolate Side Effects: Tasks that modify production databases, delete files, or deploy code should be explicitly marked and, if possible, designed to be reversible. Prefer tasks that generate new files (create_migration_20250303.sql) over tasks that modify core files in-place.

* Create Save Points: After a major phase (e.g., "Database schema updated"), include an atomic task that creates a backup or a commit snapshot. Pass criterion: "Git commit created with message 'Pre-feature-X schema state'."

* Define Cleanup Tasks: For every task that creates a temporary resource (e.g., "Spin up a test S3 bucket"), there should be a corresponding, linked cleanup task scheduled for later execution.

This turns the AI workflow from a fragile one-shot sequence into a resilient process that can survive and recover from mid-execution failures, which are inevitable in complex work.
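The "linked cleanup task" idea maps naturally onto Python's `contextlib.ExitStack`: register the cleanup the moment the resource exists, and it runs whether the work succeeds or fails. In this sketch a temporary directory stands in for a resource such as a test bucket, and `run_task_with_cleanup` is a hypothetical helper.

```python
import shutil
import tempfile
from contextlib import ExitStack
from pathlib import Path
from typing import Callable


def run_task_with_cleanup(work: Callable[[Path], None]) -> Path:
    """Create a temporary resource, register its cleanup, then run the work."""
    workdir = Path(tempfile.mkdtemp(prefix="task-"))
    with ExitStack() as cleanup:
        # Registered immediately, so it runs even if work() raises.
        cleanup.callback(shutil.rmtree, workdir, ignore_errors=True)
        work(workdir)
    return workdir


def write_migration(directory: Path) -> None:
    """Generate a new file rather than editing an existing one in place."""
    (directory / "create_migration.sql").write_text("-- new migration\n")


used = run_task_with_cleanup(write_migration)
print(used.exists())
```

The returned path no longer exists after the call, and the same holds when `work` raises partway through, since the callback fires as the `with` block unwinds.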

Strategy 4: Use the AI to Refine Its Own Plan

Decomposition isn't a one-way street. You can use Claude not just to execute tasks, but to improve the task list itself. This is a meta-strategy for handling novel or poorly understood problems.

This creates a virtuous cycle where the planning process itself becomes more reliable and efficient over time.

Got Questions About AI Task Decomposition? We've Got Answers

Four answers on decomposition timing, handling stuck tasks, non-coding applications, and the most common beginner mistake with Claude Code, GPT-4, and Cursor atomic workflows.

How long does it take to decompose a complex problem?

It depends entirely on the problem's complexity and your familiarity with the domain. For a well-understood task like "add a new form to an existing web app," a skilled practitioner might decompose it into 10-15 atomic tasks in 10-15 minutes. For a novel, large-scale project like "build a recommendation engine from scratch," the initial decomposition could take an hour or more, involving research and dependency mapping. The critical insight is that this time is an investment that pays back multifold in reduced debugging, elimination of rework, and successful autonomous AI execution. It shifts time from the middle of the project (chaotic fixing) to the beginning (structured planning).

What if the AI gets stuck on a single atomic task?

This is a feature, not a bug. When an AI gets stuck on a well-defined atomic task, it precisely isolates the problem. Your intervention is now highly targeted. Instead of sifting through hundreds of lines of code to find the issue, you know the failure is in "Task 7: Write the validateEmail function to reject invalid domains." You can look at the specific code for that function, the test that's failing, and provide focused guidance. Often, you can simply instruct Claude to "debug the failing test for Task 7" and it will have the context to self-correct. The task boundary acts as a perfect container for the problem.

Can I use task decomposition for non-coding work?

Absolutely. The methodology is universal. Writing a market analysis report? Decompose it: 1. Gather raw data from sources A, B, C (pass: data files are saved). 2. Clean and normalize the datasets (pass: script runs without errors, output passes schema check). 3. Perform statistical analysis X and Y (pass: analysis script generates charts 1-3). 4. Draft report sections (pass: document contains headings I, II, III). 5. Synthesize findings into executive summary (pass: summary is under 300 words and highlights key points). Any process with defined inputs, a transformation, and a desired output can be decomposed. It brings the same reliability to research, content creation, data analysis, and business process automation.
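Pass criteria for written work can be automated too. The sketch below checks two of the report criteria mentioned above, the presence of headings I to III and a summary under 300 words. The `check_report` helper and the sample text are hypothetical, and it assumes headings are written as "I. Title" on their own line.

```python
from typing import Dict, List


def check_report(report: str, required_numerals: List[str], summary: str,
                 max_summary_words: int = 300) -> Dict[str, bool]:
    """Return a pass/fail result for each criterion."""
    lines = [line.strip() for line in report.splitlines()]
    results = {
        f"heading {numeral}": any(line.startswith(f"{numeral}. ") for line in lines)
        for numeral in required_numerals
    }
    results["summary under limit"] = len(summary.split()) < max_summary_words
    return results


sample_report = "I. Market Overview\ntext\nII. Customer Segments\ntext\n"
sample_summary = "Segment A leads on lifetime value."

for criterion, passed in check_report(sample_report, ["I", "II", "III"], sample_summary).items():
    print(criterion, "PASS" if passed else "FAIL")
```

With this sample, heading III is reported as a fail, which tells the agent precisely which section is still missing.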

What's the biggest mistake people make when starting with decomposition?

The most common mistake is making the atomic tasks too large or leaving the pass/fail criteria vague. People often stop at a level like "Build the user authentication module," which is still a multi-day project for an AI. They pair it with a criterion like "Users can log in," which is subjective and hard for the AI to self-verify. The antidote is the "junior developer test" and the "automated test mandate." If you can't imagine a literal-minded junior developer successfully executing the task based solely on the description and then running a simple script/command to prove it worked, the task is not atomic enough. Break it down further until it passes that test.

Ready to turn complex projects into reliably automated workflows?

The Ralph Loop Skills Generator automates decomposition into atomic tasks with pass/fail criteria that Anthropic's Claude Code, OpenAI's GPT-4, and GitHub Copilot can execute end-to-end.

Ralph Loop Skills Generator helps you apply the power of AI task decomposition instantly. Instead of manually breaking down every problem, generate a structured skill that defines the atomic tasks, clear pass/fail criteria, and the execution order Claude Code needs to succeed autonomously. Stop wrestling with ambiguous prompts and start shipping complex work with AI. Generate your first skill for free and see the difference a structured workflow makes.

ralph

Building tools for better AI outputs. Ralphable helps you generate structured skills that make Claude iterate until every task passes.