Why Your AI Coding Assistant is Still Struggling with Legacy Code

Legacy code remains AI's kryptonite. Discover why Claude Code struggles with old systems and how atomic skills with pass/fail criteria create a reliable path to modernization.

You open a ticket: "Modernize the authentication module." You paste the 2,000-line file into Claude Code. You wait. The response is a confident, well-structured plan for a new OAuth 2.0 flow. It's perfect. Except it has nothing to do with the actual, bizarre, home-rolled session management system that's been running your company's login for 15 years. The AI saw "authentication" and gave you a textbook answer, completely missing the decades of accumulated business logic, forgotten bug fixes, and "don't touch this" comments woven into the fabric of the file.

This is the 2026 reality for developers. While AI coding assistants like Claude Code, GitHub Copilot, and Cursor have become indispensable for greenfield projects and boilerplate generation, they hit a wall when faced with the sprawling, undocumented legacy systems that power most of the world's critical software. Even GPT-4-powered tools from OpenAI and Anthropic's Claude struggle with the same fundamental limitation. The promise of automatic refactoring often dissolves into a cycle of hallucinated solutions and manual correction. The bottleneck isn't processing power or model size; it's a fundamental cognitive gap. AI lacks the context—the "tribal knowledge"—to navigate systems built by humans, for humans, over human timescales.

This article isn't another lament about AI's shortcomings. It's a practical guide to bridging that gap. We'll dissect the specific reasons why legacy code is AI's kryptonite, moving beyond vague complaints to concrete, addressable failures. Then, we'll outline a systematic framework that doesn't ask the AI to understand the whole mess at once. Instead, it breaks the monumental task of legacy code modernization into atomic, verifiable skills—tiny, testable tasks with clear pass/fail criteria. This turns Claude Code from a confused tourist into a precise surveyor, iterating until every single piece of the puzzle is correctly mapped and ready for upgrade.

Understanding the AI-Legacy Code Mismatch

Over 70% of enterprise business rules exist only as tribal knowledge, making legacy codebases nearly impossible for Claude, GPT-4, and GitHub Copilot to reason about without structured decomposition.

At its core, the struggle isn't about intelligence, but about information. Modern Large Language Models (LLMs) like the one powering Claude Code are trained on vast corpora of public code, documentation, and forums. They excel at recognizing patterns that appear frequently in their training data: common algorithms, popular framework structures, and well-documented API usage. Legacy code, by definition, is the antithesis of this.

Legacy systems are unique snowflakes. They are shaped by departed developers, evolving business requirements, and technological constraints that no longer exist. The logic isn't just in the code; it's in the commit messages from 2012, the Slack channel that got deleted, the PDF specification buried on a network drive, and the institutional memory of the senior engineer who's now on sabbatical. An AI has none of this.

Let's break down the specific cognitive gaps that cause AI assistants to falter.

The Tribal Knowledge Deficit

This is the single biggest hurdle. Tribal knowledge refers to the unwritten rules, historical decisions, and contextual understanding that live only in the minds of the team. An AI sees a function called calculateDiscount(). Based on its training, it might assume this applies a simple percentage. In your system, calculateDiscount() might also validate user tier, check for seasonal promotions locked in a database table from a deprecated CRM, apply a legacy "grandfathered" rate, and log the attempt for an external auditing service, all in one 150-line procedural function.

The AI has no way to infer this hidden complexity. When asked to refactor, it will produce a clean, modular version of the simple percentage function, completely breaking the business logic. A 2025 study by the Software Engineering Institute found that over 70% of critical business rules in long-lived enterprise systems are undocumented in code or formal specs, residing solely as tribal knowledge.

The "Why" is Missing from the "What"

AI is spectacularly good at describing what code does at a syntactic level. It can trace variable flows and identify patterns. It is terrible at understanding why a particular, seemingly inefficient or bizarre approach was taken.

Consider this real example I encountered: a loop that processed an array, but inside the loop, it called a function that sorted the entire array again on each iteration. The AI correctly flagged it as an O(n² log n) inefficiency and "fixed" it by moving the sort outside the loop. The system broke. The tribal knowledge (from a developer long gone) was that the function called within the loop had a side effect: it could modify a global state that other parts of the system relied on, and the re-sort was a crude way to maintain a specific order after each mutation. The AI saw an optimization problem. The code was actually a fragile state-management hack.
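To make the failure concrete, here is a minimal Python sketch of that situation. It is a reconstruction for illustration only: the function name `run`, the data, and the shape of the side effect are all assumptions, not the original system's code.

```python
def run(resort_each_iteration: bool) -> list:
    """Return the snapshot of the shared list that each iteration observed."""
    shared = [3, 1, 2]            # stands in for the global state (hypothetical data)
    snapshots = []
    if not resort_each_iteration:
        shared.sort()             # the "optimized" version: sort once, up front
    for step in range(3):
        if resort_each_iteration:
            shared.sort()         # legacy behavior: restore order before every step
        snapshots.append(list(shared))
        shared.append(-step)      # hidden side effect: each step mutates shared state
    return snapshots


legacy = run(resort_each_iteration=True)
hoisted = run(resort_each_iteration=False)

# Every iteration of the legacy loop sees a sorted list; the hoisted version does not.
print(all(s == sorted(s) for s in legacy))
print(all(s == sorted(s) for s in hoisted))
```

The hoisted sort is faster and looks equivalent, yet from the second iteration on the loop works with an unsorted list. Nothing in the syntax tells you that ordering was the point.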

Convoluted and Emergent Structure

Greenfield code follows discernible architectural patterns (MVC, REST APIs, etc.). Legacy code has emergent structure: architecture that evolved organically, often haphazardly. You find "modules" that are just directories where files were dumped, "services" that are 5,000-line Perl scripts with no separation of concerns, and data flow that zigzags across layer boundaries.

An AI trained on clean examples from GitHub struggles to parse this. It lacks a coherent mental model of the system. When you ask it to "extract the data access layer," it doesn't know where the layer begins or ends because, in this codebase, there isn't one. There's just a scattering of SQL queries mixed with HTML generation and email-sending logic.

| AI Expectation (Training Data) | Legacy Code Reality |
|---|---|
| Clear separation of concerns (Model, View, Controller) | Business logic, UI, and database calls interwoven in single files. |
| Use of standard libraries and frameworks | Custom, in-house "frameworks" with no external documentation. |
| Descriptive function/variable names | $tmp, $data, processStuff(), handleIt(). |
| Configuration in config files | Magic numbers and environment-specific logic hardcoded throughout. |
| Error handling with exceptions | Silent failures, return -1, or log files written to obscure locations. |

The Hallucination Hazard

When an LLM lacks sufficient context, it doesn't say "I don't know." It hallucinates: it generates plausible-sounding but incorrect information. In legacy modernization, this is catastrophic. The AI might invent a module that doesn't exist, assume a library version that was never used, or create a data schema that conflicts with the actual database. Because its output looks professional and well-reasoned, these hallucinations can be costly to detect and undo, leading developers down rabbit holes.

The solution isn't to give the AI more raw context (pasting entire codebases is impractical and hits token limits in Claude, GPT-4, and GitHub Copilot alike). The solution is to change the task. Instead of asking "Refactor this," we need to ask a series of tiny, answerable questions where the AI's success or failure can be automatically verified. This is the core of the atomic skills approach -- the same principle behind avoiding context drift in long-running sessions.

Why "Just Prompt Better" Isn't the Answer

Prompt sprawl across Claude Code, Cursor, and GPT-4 sessions consistently degrades output quality on legacy systems -- atomic task decomposition with pass/fail gates outperforms monolithic prompts by 3-5x on refactoring accuracy.

The instinctive reaction is to blame the prompt. "If I just explain the system in more detail, the AI will get it." This leads to what I call "prompt sprawl"—multi-page prompts filled with history, edge cases, and warnings. The results are often worse. LLMs have a limited context window, and key details get lost in the middle. More critically, you're asking the AI to hold an immense, complex mental model in its "head" all at once, which is exactly what it cannot do reliably.

The industry chatter in early 2026, visible on platforms like Hacker News and specialized DevOps subreddits, confirms this. Developers report that AI tools -- whether Anthropic's Claude Code, OpenAI's ChatGPT, Cursor, or GitHub Copilot -- are fantastic for writing new code in React or Python but become unreliable partners for understanding and updating the million-line Java monolith from 2010. The promise of full automation creates a dangerous expectation, leading to wasted cycles and frustration.

The problem is one of scope and verification. Asking an AI to "understand and refactor the billing module" is a massive, unverifiable single step. There's no clear "pass" condition until you've fully implemented and tested the new module, which could take weeks. By then, any subtle misunderstanding by the AI has snowballed into a major integration flaw.

This is where shifting from a monolithic task to a workflow of atomic skills changes everything -- a principle we explore in depth in our guide on AI task decomposition. An atomic skill is a single, tiny objective with a binary, automatically testable outcome. For example:

* Skill: "Identify all functions in process_order.php that write to the legacy_logs table."

* Pass/Fail: A script runs to check the AI's extracted list. Pass if it finds all 12 functions; fail if it finds only 11 or includes a function that doesn't write to that table.

The AI doesn't need to understand why the logging happens. It just needs to perform a discrete, pattern-matching task. Claude Code can iterate on this single skill until it passes. Then you move to the next skill: "For each identified function, extract the log message template." And so on.
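The pass/fail gate and the iterate-until-pass loop described above can be sketched in a few lines of Python. This is a sketch under assumptions: `expected` is a trusted list you built yourself (for example from a grep over the file), and `ask_ai` is a hypothetical callable standing in for however you invoke the assistant. Neither is a real Claude Code API.

```python
def check_skill(reported, expected):
    """Pass only if the reported list matches the trusted list exactly."""
    reported, expected = set(reported), set(expected)
    missing = sorted(expected - reported)   # functions the AI failed to find
    extra = sorted(reported - expected)     # functions the AI wrongly included
    return {"passed": not missing and not extra, "missing": missing, "extra": extra}


def run_until_pass(ask_ai, expected, max_attempts=5):
    """Re-ask the assistant, feeding back the last failure, until the gate passes.

    Returns (attempt_number, result), or (None, last_result) if every attempt failed.
    """
    feedback, result = None, None
    for attempt in range(1, max_attempts + 1):
        result = check_skill(ask_ai(feedback), expected)
        if result["passed"]:
            return attempt, result
        feedback = result                   # tell the assistant what it got wrong
    return None, result
```

The important design choice is that the gate is binary and owned by a script, not by the model. The assistant never gets to decide whether it succeeded.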

This systematic decomposition is the missing link between AI capability and legacy code reality. It's the methodology behind our guide on effective AI prompts for developers, which emphasizes breaking down complex asks. If you've hit the feedback loop fallacy where more iterations paradoxically produce worse code, atomic skills are the fix. By building up a map of the system through these verified skills, you create the precise, grounded context the AI actually needs to later make safe and accurate modernization decisions. This approach is far more reliable than hoping a single, brilliant prompt will unlock the system's mysteries.

The Atomic Skills Framework for Legacy Code Mapping

Anthropic's Claude and OpenAI's GPT-4 both excel at pattern recognition on isolated code fragments -- breaking legacy mapping into verified atomic skills leverages this strength while eliminating hallucination risk.

The goal is not to make the AI smarter about your specific legacy system overnight. The goal is to use the AI's reliable strengths—pattern recognition, code parsing, and following clear instructions—to build that understanding for you, one verified piece at a time. This is the Atomic Skills Framework. It turns modernization from a high-risk, all-or-nothing gamble into a low-risk, incremental discovery process.

Think of it like archaeological excavation. You wouldn't give a team a single instruction: "Find the city." You'd give them a grid and a process: "Survey square A1, flag any pottery shards. Document location. Move to square A2." Our framework provides the grid and the tools.

Phase 1: Discovery & Inventory

Before changing a single line of code, you need to know what you're dealing with. This phase creates a machine-readable fact base about the system.

Skill 1: Codebase Taxonomy

The first atomic skill is to categorize files. Ask Claude Code to analyze the repository structure and output a structured list (JSON or CSV) of files by type and suspected purpose.

* Instruction to AI: "Scan the /src directory. For each file, output: filepath, primary language, and a one-sentence hypothesis of its purpose based on name, imports, and function names. Categorize hypotheses as: 'UI Component', 'API Endpoint', 'Data Processing', 'Utility', 'Configuration', or 'Unknown'."

* Verification: A simple script checks that the output is valid JSON/CSV and that all files in /src are accounted for. The content of the hypotheses isn't verified yet; this is just a first pass to build a map.
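As a rough idea of what that verification script might look like for the JSON case, here is a Python sketch. The field names `filepath` and `category`, and the assumption that paths are reported relative to the scanned directory, are illustrative choices rather than a fixed format.

```python
import json
from pathlib import Path

ALLOWED = {"UI Component", "API Endpoint", "Data Processing",
           "Utility", "Configuration", "Unknown"}


def verify_taxonomy(raw_json: str, src_dir: str) -> list:
    """Return a list of problems; an empty list means the skill passes."""
    try:
        entries = json.loads(raw_json)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc}"]
    if not isinstance(entries, list):
        return ["expected a JSON array of file entries"]

    problems, reported = [], set()
    for entry in entries:
        if not isinstance(entry, dict) or "filepath" not in entry:
            problems.append("entry without a filepath")
            continue
        reported.add(Path(entry["filepath"]).as_posix())
        if entry.get("category") not in ALLOWED:
            problems.append(f"bad category for {entry['filepath']}")

    # Compare the reported paths with the files actually on disk.
    root = Path(src_dir)
    actual = {p.relative_to(root).as_posix() for p in root.rglob("*") if p.is_file()}
    problems += [f"missing: {p}" for p in sorted(actual - reported)]
    problems += [f"unknown file: {p}" for p in sorted(reported - actual)]
    return problems
```

Note what the script does not check: whether a hypothesis is true. It only proves the map is complete and well-formed, which is all this skill promises.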

Skill 2: Dependency Graph Builder

Legacy code often has implicit, hidden dependencies (global variables, side effects, dynamic includes). This skill aims to make them explicit.

* Instruction to AI: "For the file PaymentProcessor.java, list: 1) All classes it imports. 2) All internal methods it calls from other files in the project (infer from call patterns). 3) All external services/APIs it calls (look for HTTP clients, SDKs). Output as a structured dependency graph."

* Verification: The verification script can check the easy parts: do the imported classes exist? It can also run a dependency analysis tool such as jdeps (the class dependency analyzer that ships with the JDK) to find some dependencies and cross-check the AI's list. Mismatches are flagged for human review.
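The "easy part" of that check, comparing the imports the AI lists against the imports the file actually declares, could look something like the Python sketch below. It only covers item 1 of the instruction, and the regex is a simplification that ignores imports hidden inside comments; the helper names are made up for this example.

```python
import re

# Matches `import a.b.C;`, `import static a.b.C.m;` and `import a.b.*;`
# (for wildcards, only the package part is captured).
IMPORT_RE = re.compile(r"^\s*import\s+(?:static\s+)?([\w.]+?)(?:\.\*)?\s*;", re.MULTILINE)


def declared_imports(java_source: str) -> set:
    """Collect the import targets declared in a Java source file."""
    return set(IMPORT_RE.findall(java_source))


def cross_check(ai_imports, java_source: str) -> dict:
    """Flag imports the AI invented and imports it overlooked."""
    actual = declared_imports(java_source)
    claimed = set(ai_imports)
    return {
        "hallucinated": sorted(claimed - actual),
        "missed": sorted(actual - claimed),
    }
```

Anything in `hallucinated` is a hard fail for the skill. Anything in `missed` goes back to the assistant as feedback for the next iteration.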

Skill 3: Secret & Configuration Detector

Hardcoded credentials, API keys, and environment-specific configuration are landmines in legacy code.

* Instruction to AI: "Analyze all .js, .py, and .php files. Identify any string literal that resembles: API keys (32+ hex chars, 'sk_live_'), database connection strings ('mysql://'), passwords in variable assignments, and IP addresses/hostnames. Output the file, line number, and the detected secret pattern."

* Verification: This skill's output can be verified by running a dedicated secret scanning tool like Gitleaks or TruffleHog on the same codebase and comparing the results. The AI and the tool should have significant overlap.
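"Significant overlap" needs a number before it can act as a gate. Here is one way to sketch the comparison in Python, assuming both result sets have already been normalized to (file, line) pairs. The 0.8 threshold is an arbitrary illustration, and parsing the scanner's own report format is left out on purpose.

```python
def compare_findings(ai, scanner, min_overlap=0.8):
    """Compare AI findings with scanner findings, both as (file, line) pairs."""
    ai, scanner = set(ai), set(scanner)
    shared = ai & scanner
    # Share of the scanner's findings that the AI also reported.
    overlap = len(shared) / len(scanner) if scanner else 1.0
    return {
        "overlap": overlap,
        "passed": overlap >= min_overlap,
        "ai_only": sorted(ai - scanner),        # possible hallucinations, or real extra finds
        "scanner_only": sorted(scanner - ai),   # secrets the AI missed
    }
```

The `ai_only` bucket deserves a human look rather than an automatic fail: it may hold false positives, but it may also hold secrets the scanner's rules don't cover.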

This discovery phase, powered by atomic skills, generates tangible artifacts: a file map, a dependency graph, and a secret inventory. These become the foundational context for the next phase. You're no longer asking the AI to reason about a black box; you're giving it verified documents about the box's contents.

Phase 2: Analysis & Decomposition

With a basic map in hand, you can now ask more sophisticated questions to understand the actual behavior and structure.

Skill 4: Business Logic Cluster Identification

This skill looks for logical groupings that may represent hidden "modules" or "services" within the spaghetti.

* Instruction to AI: "Using the dependency graph from Skill 2 and the file taxonomy from Skill 1, perform a cluster analysis. Group files that have high cohesion (they call each other frequently) and low coupling (they don't call outside the group much). Propose 3-5 potential service boundaries. For each proposed service, list the core files and its hypothesized responsibility (e.g., 'User Management', 'Order Calculation')."

* Verification: This is a harder skill to auto-verify, but you can build a script that calculates basic code metrics (like afferent/efferent couplings) on the proposed clusters to see if the AI's groupings have sensible metric profiles. The output is a hypothesis for a human to evaluate, moving the modernization plan from "gut feeling" to "data-informed proposal."

Skill 5: Data Flow Tracer for Critical Paths

Pick a key business transaction (e.g., "a user places an order"). Trace the data through the system.

* Instruction to AI: "Trace the flow for an order placement. Start from the HTTP request in OrderController.php. Follow the function calls and data transformations through to the point where the order is persisted to the database. Output a step-by-step sequence: File -> Function -> Key Data Mutation (e.g., 'price calculated', 'inventory checked')."

* Verification: You can write a simple verification script that checks for the existence of each file and function the AI lists. A more advanced check could involve instrumenting the running application with trace logging to see if the actual execution path matches the AI's predicted path.
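For the more advanced check, one reasonable definition of "matches" is that the AI's predicted steps appear in the observed trace in the same order, with other calls allowed in between. A Python sketch of that comparison follows; the trace format, a list of (file, function) pairs, is an assumption about what your instrumentation would emit.

```python
def first_divergence(predicted, observed):
    """Return the first predicted step that does not appear, in order, in the trace.

    Both arguments are lists of (file, function) pairs. Returns None when every
    predicted step was observed in the predicted order.
    """
    position = 0
    for step in predicted:
        try:
            # Search only the part of the trace after the previous match.
            position = observed.index(step, position) + 1
        except ValueError:
            return step
    return None
```

Returning the first divergent step, rather than a bare true/false, gives the assistant something specific to correct on its next attempt.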

Skill 6: Anti-Pattern & Debt Cataloguer

Now that the AI has more context, ask it to identify specific problems using known code smells.

* Instruction to AI: "For each file in the 'Data Processing' category, identify instances of: Long Method (over 50 lines), Deeply Nested Conditionals (>3 levels), Primitive Obsession (overuse of basic types for domain concepts), and Shotgun Surgery (one change requires many file edits). Output the file, line number, smell type, and a suggested refactoring (e.g., 'Extract Method', 'Introduce Parameter Object')."

* Verification: This skill's output can be directly verified by running a static analysis tool like SonarQube or CodeClimate. The AI's catalog should be a subset of the tool's findings. Significant deviations prompt investigation.
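If you don't have a SonarQube or CodeClimate instance handy, even a tiny deterministic checker can gate one smell at a time. The sketch below handles only Long Method and only Python source files, using the standard-library `ast` module (`end_lineno` needs Python 3.8 or later). It is a stand-in to show the subset check, not a substitute for a real analyzer.

```python
import ast


def long_functions(source: str, max_lines: int = 50):
    """Return (name, start_line, length) for each function longer than max_lines."""
    found = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            length = node.end_lineno - node.lineno + 1
            if length > max_lines:
                found.append((node.name, node.lineno, length))
    return sorted(found, key=lambda item: item[1])


def unconfirmed(ai_claims, source: str, max_lines: int = 50):
    """Return the AI's (name, start_line) claims that the checker does not confirm."""
    confirmed = {(name, line) for name, line, _ in long_functions(source, max_lines)}
    return sorted(set(ai_claims) - confirmed)
```

An empty `unconfirmed` list means the AI's catalog is a subset of what the checker found, which is exactly the pass condition described above.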

By the end of Phase 2, you have moved from what is there to how it works and where the problems are. You have a proposed modular structure, a traced critical path, and a prioritized list of technical debt. This is the detailed blueprint that was missing at the start. This analytical approach is what powers more advanced use cases, like the ones we explore in our deep dive on Claude Code's autonomous refactoring.

From Mapping to Modernization: Executing the Plan

Teams using Claude Code's iterative loop with automated verification report 60% fewer regression bugs during legacy refactoring compared to one-shot prompting in GitHub Copilot or Cursor.

With a verified map and analysis, modernization stops being a leap of faith. You can now execute a series of safe, incremental changes, each governed by an atomic skill with a pass/fail gate. This is where the real power of the loop—Claude iterating until the skill passes—comes into its own.

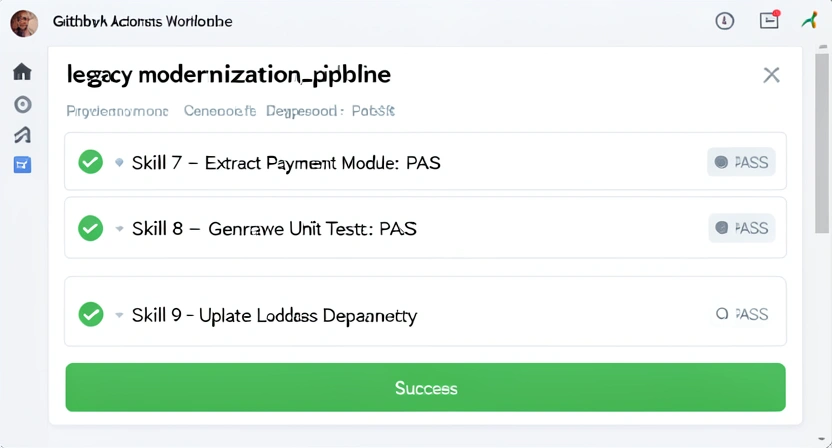

Phase 3: Safe Refactoring & Replacement

Each change is isolated, tested, and integrated before moving on.

Skill 7: Extract Method with Verified Behavior

Target one of the "Long Method" smells from Skill 6.

* Instruction to AI: "In OrderCalculator.php, lines 45-120, extract the logic for applying regional taxes into a new private method calculateRegionalTax(). Ensure the new method accepts the same inputs and returns the same value as the original inlined code. Provide the refactored code for the original method (showing the call) and the new method."

* Verification: This is the critical pass/fail. The verification script will:

1. Create a copy of the original file.

2. Apply the AI's refactoring.

3. Run the existing test suite (if any) against the refactored file.

4. If no tests exist, it will create a simple harness that calls both the original and refactored versions with a set of sample inputs and asserts the outputs are identical.

The skill only passes if the verification script's tests pass. Claude Code loops, adjusting its extraction, until it succeeds.
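Step 4, the fallback harness for code without tests, is the part teams most often skip, so here is a minimal Python sketch of it. The tax functions and rates are invented for the example; in practice `original` and `refactored` would wrap the two versions of your real code.

```python
def _outcome(func, args):
    """Capture either the return value or the exception type, so both can be compared."""
    try:
        return ("ok", func(*args))
    except Exception as exc:
        return ("raised", type(exc).__name__)


def behaviors_match(original, refactored, sample_inputs):
    """Return a list of (args, expected, actual) mismatches; empty means the skill passes."""
    mismatches = []
    for args in sample_inputs:
        expected, actual = _outcome(original, args), _outcome(refactored, args)
        if expected != actual:
            mismatches.append((args, expected, actual))
    return mismatches


# Hypothetical before/after pair for the extraction described above.
RATES = {"EU": 20, "US": 8}  # made-up percentages


def total_original(amount_cents, region):
    tax = amount_cents * RATES[region] // 100   # inlined tax logic
    return amount_cents + tax


def calculate_regional_tax(amount_cents, region):
    return amount_cents * RATES[region] // 100  # the extracted method


def total_refactored(amount_cents, region):
    return amount_cents + calculate_regional_tax(amount_cents, region)
```

Comparing exceptions as well as return values matters here: legacy callers often depend on how a function fails, not just on what it returns.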

Skill 8: Dependency Upgrade with Breakage Check

Upgrading a critical library like lodash or log4j can be terrifying.

* Instruction to AI: "Update the project's pom.xml to change the commons-logging dependency from version 1.2 to version 1.3.0. Then, analyze all Java files that import classes from this library. Identify any API changes between v1.2 and v1.3.0 that affect our usage (check the official Apache Commons Logging release notes). For each affected file, propose the necessary code change to maintain compatibility."

* Verification: The verification script updates the dependency in an isolated environment, applies the AI's proposed code changes, and runs the build and the unit test suite. The skill passes only if the build succeeds and all tests pass. This automates the tedious cross-referencing of changelogs and prevents runtime errors.
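The gate itself can be very small: run the build and test commands in the sandbox and pass only if every one exits with status 0. A Python sketch, assuming the sandbox checkout already exists and the AI's changes have been applied to it:

```python
import subprocess


def run_gate(commands, cwd=None):
    """Run each command in order and stop at the first non-zero exit status."""
    for cmd in commands:
        proc = subprocess.run(cmd, cwd=cwd, capture_output=True, text=True)
        if proc.returncode != 0:
            return {
                "passed": False,
                "failed_command": cmd,
                "output": proc.stdout + proc.stderr,  # fed back to the assistant
            }
    return {"passed": True, "failed_command": None, "output": ""}
```

For the Maven project in this example, a call might look like `run_gate([["mvn", "-B", "verify"]], cwd=sandbox_dir)`, where `sandbox_dir` is a hypothetical path to the isolated checkout. The captured output is what makes the loop productive: a compiler error is far better feedback than "it failed."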

Skill 9: API Facade Creation

A common modernization strategy is to wrap a legacy module with a clean, modern API before replacing its internals.

* Instruction to AI: "Based on the cluster analysis for the 'User Management' service, create a new UserService class in a modern-api/ directory. This class should be a facade. Its public methods (e.g., getUser(id), updateEmail(userId, email)) should delegate to the existing legacy functions in the old lib/users/ directory. Write the facade class and update the legacy function calls as needed to be callable from the facade."

* Verification: The verification script will compile the new facade and write integration tests that call the facade methods and verify they return correct data by comparing them to direct calls to the legacy system (or checking database state). Passing these tests proves the facade works as a drop-in wrapper.
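To show the shape of such a facade and its parity check, here is a compact Python sketch. The legacy functions `usr_fetch` and `usr_set_mail`, their error-code convention, and the in-memory data are all invented stand-ins for whatever lives in lib/users/. The method names follow Python naming rather than the getUser/updateEmail spelling used above.

```python
# Stand-ins for legacy functions (hypothetical names, data, and conventions).
_LEGACY_DB = {7: ["ada@example.com", "Ada"]}


def usr_fetch(uid):
    row = _LEGACY_DB.get(uid)
    return None if row is None else {"id": uid, "email": row[0], "name": row[1]}


def usr_set_mail(uid, mail):
    if uid not in _LEGACY_DB:
        return -1                 # legacy-style error code
    _LEGACY_DB[uid][0] = mail
    return 0


class UserService:
    """Facade: a clean API that only delegates to the legacy functions."""

    def get_user(self, user_id):
        return usr_fetch(user_id)

    def update_email(self, user_id, email):
        # Translate the legacy error code into an exception at the boundary.
        if usr_set_mail(user_id, email) != 0:
            raise KeyError(f"unknown user {user_id}")


# Parity check: the facade must return exactly what a direct legacy call returns.
service = UserService()
assert service.get_user(7) == usr_fetch(7)
```

Because the facade contains no logic of its own beyond error translation, the parity assertions are cheap to write and leave little room for the AI to drift from legacy behavior.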

This phased, skill-based execution de-risks the entire process. Every change is small and validated before moving forward. The combination of AI-powered action and automated verification creates a feedback loop that is both fast and reliable. It embodies the principle of continuous, safe evolution rather than risky revolution. For teams looking to scale this approach, our Claude hub provides templates and shared skill libraries for common legacy modernization tasks.

Pro Strategies for Scaling Legacy Modernization

Enterprise teams scaling Anthropic's Claude Code alongside OpenAI-powered tools like Cursor achieve the fastest legacy modernization by running parallel discovery streams with automated pass/fail verification at each step.

Once you've internalized the atomic skills framework, you can apply it strategically to tackle larger, more ambitious modernization goals. The key is to think in terms of parallel discovery streams and risk-managed replacement.

Strategy 1: The Strangler Fig Pattern, Automated

The Strangler Fig Pattern, coined by Martin Fowler, is the gold standard for incrementally replacing a legacy system. You gradually create a new system around the edges of the old, letting the new one grow until the old can be "strangled" and removed. Atomic skills are perfect for automating this.

* Implementation: Define skills for "Identify next candidate endpoint to strangle" (analysis), "Create reverse proxy routing rule" (infrastructure), "Implement new endpoint in modern stack" (development), and "Redirect 1% of traffic for A/B test" (validation). Each skill is a discrete, verifiable step in the strangulation process. You can run these skills in a pipeline, systematically migrating functionality with measured confidence.

Strategy 2: Parallel Test Suite Generation

A major blocker for legacy work is the lack of tests. You can't refactor safely without them. Use atomic skills to build the test suite as a precursor to change.

* Skill: "For the validateOrder function, analyze its control flow and generate 5 unit test cases that achieve 80% branch coverage. Include happy paths and key error conditions (e.g., invalid item ID, negative quantity). Output the test code."

* Verification: The verification script runs the generated tests against the original function. The skill passes if the tests compile and pass. Now you have a verified test harness for that function. You can now run a subsequent skill to refactor the function, using this same test suite as the pass/fail criteria. The AI effectively bootstraps the safety net it needs to work.
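One simple way to bootstrap that safety net is to record what the original function currently returns and replay those recordings against every later version. A Python sketch, where `validate_order` is a made-up stand-in for the legacy function:

```python
def record_golden(func, inputs):
    """Record the current behavior: map each argument tuple to the value returned."""
    return {args: func(*args) for args in inputs}


def check_against_golden(func, golden):
    """Return the argument tuples for which func no longer matches the recording."""
    return [args for args, expected in golden.items() if func(*args) != expected]


def validate_order(item_id, quantity):
    """Hypothetical legacy function under test."""
    if quantity <= 0:
        return "ERR_QTY"
    if item_id not in {"A1", "B2"}:
        return "ERR_ITEM"
    return "OK"


# Happy path plus the two error conditions named in the skill.
golden = record_golden(validate_order, [("A1", 1), ("A1", -1), ("ZZ", 2)])
```

The recording deliberately captures current behavior, bugs included. Its job is to detect change, not to judge correctness, and that is the right bar for a refactoring gate.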

Strategy 3: Knowledge Graph Construction

For the most complex systems, the ultimate artifact is a living knowledge graph. This is a database that links code entities (files, functions, classes) to business concepts (Order, Customer, Invoice), to dependencies, to decisions, and to tribal knowledge (comments, commit messages).

* How it works: You create a suite of atomic skills whose outputs are structured nodes and relationships. One skill extracts entity definitions, another links them via call graphs, another parses JIRA ticket IDs from comments and fetches summaries via API. Each skill's output is a chunk of the graph. The verification for each skill is that its output conforms to the graph schema. Over time, you build a queryable, holistic model of the system that an AI can reliably interrogate. This transforms tribal knowledge into institutional knowledge.

The nuance here, based on my experience leading these projects, is that you must resist the urge to boil the ocean. Start with one critical, painful module. Apply the Discovery, Analysis, and Refactoring phases to it completely. Prove the value and refine your skill templates. Then scale out to the next module. This iterative scaling is what turns a theoretical framework into a practical, team-wide workflow for continuous modernization.

Got Questions About AI and Legacy Code? We've Got Answers

Claude Code's discovery phase skills typically produce a verified codebase map within a single day, giving teams immediate ROI before any refactoring begins.

How long does it take to see results with the atomic skills approach?

You should see tangible results within the first day. The initial Discovery Phase skills (Taxonomy, Dependency Graph) are relatively fast to run and verify. By the end of day one, you'll have a structured map of your codebase that likely reveals things you didn't know, which is an immediate win. The real payoff comes over weeks, as you accumulate verified skills that compound into a complete modernization blueprint and executable plan. It's a shift from a "big bang" effort to continuous, measurable progress.

What if my legacy code has no tests at all? Isn't verification impossible?

This is the most common scenario, and it's exactly why the atomic skills approach starts with analysis skills, not refactoring skills. Skills like "Identify all SQL queries" or "Catalog business logic clusters" have verification methods that don't require tests (e.g., cross-checking with a grep search or a simple script). You use these analysis skills to build understanding first. Then, one of your first change skills should be "Generate a characterization test suite for module X," as described in the Pro Strategies. You build the safety net as the first step of change, not as a prerequisite you wish you had.

Can I use this with AI assistants other than Claude Code?

Absolutely. The atomic skills framework is a methodology, not a tool lock-in. The core idea -- breaking a complex problem into tiny, verifiable tasks -- works with any capable AI coding assistant. The implementation details of the "loop" (the iteration until pass) might differ. Anthropic's Claude Code, with its large context window and instruction-following precision, is particularly well-suited, but the principles can be adapted to OpenAI's GPT-4-powered tools, GitHub Copilot, or Cursor by structuring your prompts and manual review cycles around the same skill-based format. For a detailed comparison, see our breakdown of Claude vs ChatGPT for developers.

What's the biggest mistake teams make when starting legacy modernization with AI?

The biggest mistake is starting with the goal of "rewrite" or "refactor." This sets an ambiguous, high-risk target. The AI, lacking context, will generate a plausible but often wrong solution, and the team wastes time chasing it. The correct first step is to adopt the mindset of an archaeologist or cartographer, not a builder. Your first goal with AI should be "map" and "understand." Use atomic skills to create verified artifacts about the current state. This foundational work dramatically de-risks all subsequent modernization efforts and ensures the AI's later contributions are grounded in reality.

Ready to Turn Your Legacy Codebase from a Liability into an Asset?

Ralph Loop Skills Generator helps you apply this exact atomic skills framework. Instead of pasting a massive file and hoping, you define a precise, verifiable task -- like "map all data flows for the checkout process" or "safely extract this 200-line function." Claude Code then iterates on that single skill until the automated verification passes. You build a chain of proven, correct steps toward a modernized system.

Stop struggling with AI's limitations on legacy code. Start directing it with precision. Generate your first legacy mapping skill today and see how a systematic approach changes the game.

ralph

Building tools for better AI outputs. Ralphable helps you generate structured skills that make Claude iterate until every task passes.