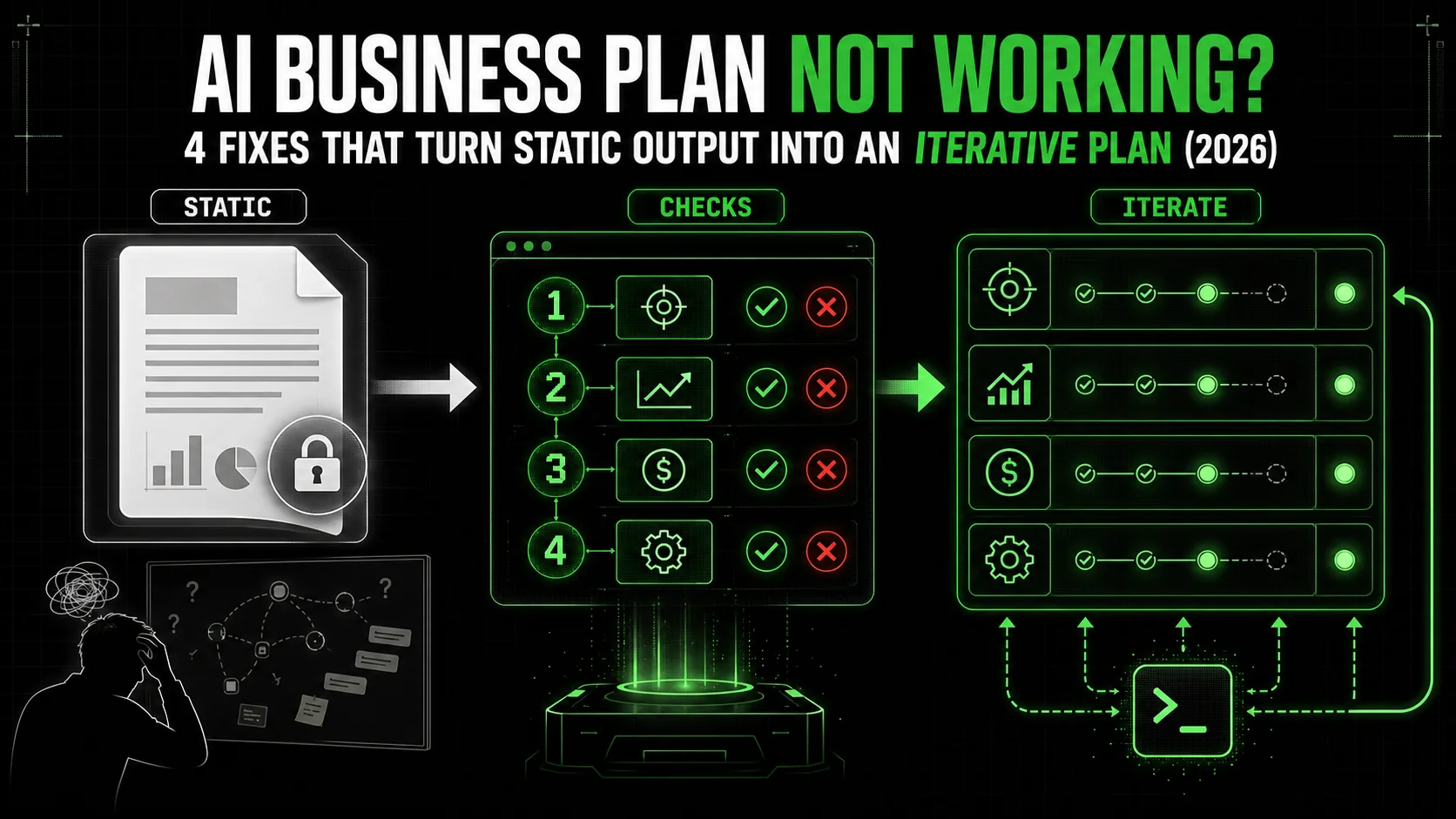

AI Business Plan Not Working? 4 Fixes That Turn Static Output Into an Iterative Plan (2026)

Your AI-generated business plan reads well but does nothing. 4 fixes, each with pass/fail checks, to convert static AI output into an iterative plan Claude can actually execute. Solopreneur examples throughout.

You just spent two hours with Claude, ChatGPT, or Gemini. You have a 30-page business plan. It’s formatted perfectly. The executive summary is crisp. The market analysis section cites recent trends. The financial projections have neat tables. It looks like something you’d present to an investor.

You feel a brief surge of accomplishment. Then, the dread sets in. You stare at the document. Now what? Which part do you actually do first? How do you know if your "validated customer problem" is actually validated? What does "launch MVP" mean as a Monday morning task?

This is the AI strategy-execution gap. It’s the growing chasm between the beautiful, static documents AI can produce and the messy, iterative, testable reality of building something that works. You’re not alone. Across forums like Indie Hackers and LinkedIn, a common thread has emerged in early 2026: solopreneurs and developers are drowning in AI-generated plans that go nowhere. The document is a destination, not a map.

The problem isn’t the AI. It’s the process, as we explored in our analysis of why your AI perfect marketing strategy is failing to convert. We’re using AI to create finished artifacts when we should be using it to build dynamic systems of thought. A traditional business plan is a snapshot of assumptions. A real plan is a living algorithm of tasks, tests, and adaptations. This article will show you why your current approach is failing and give you a concrete method to fix it. We’ll move from generating documents to generating executable skills.

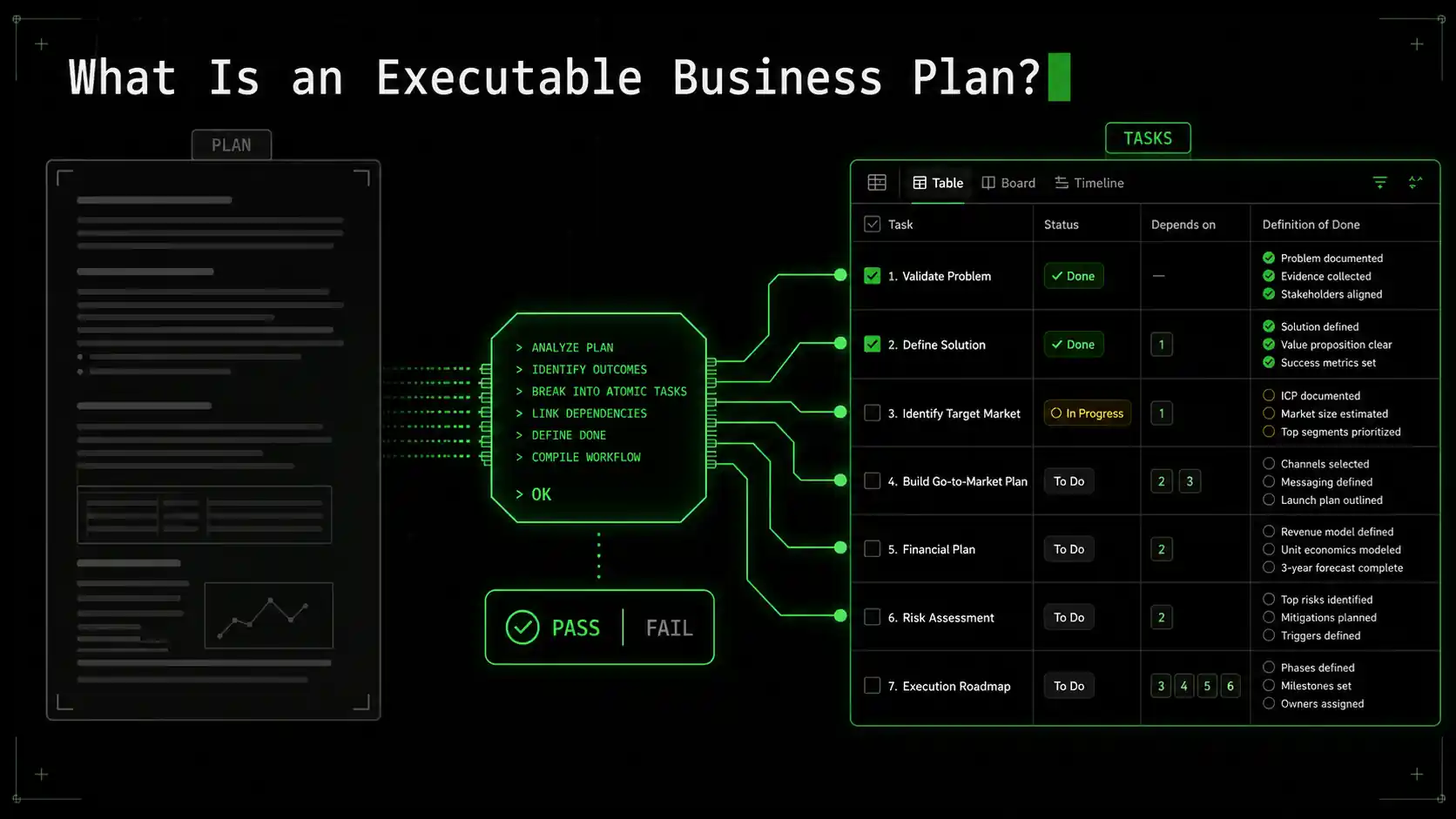

What Is an Executable Business Plan?

An executable plan replaces 30-page Claude or GPT-4 documents with atomic tasks carrying binary pass/fail criteria -- Startup Genome data shows this structured validation approach raises 3-year survival rates by 30%.

An executable business plan isn't a document. It's a workflow. It takes the high-level concepts from a traditional plan—value proposition, customer segments, revenue streams—and breaks them down into the smallest possible units of work that can be tested, validated, or invalidated with a clear yes/no outcome.

Think of it as the difference between an architect's beautiful rendering and the builder's daily work order. The rendering is inspiring, but the work order tells the crew exactly what to do today, what materials they need, and how to know if the job is done right. An executable plan is a series of work orders for your business.

The core shift is from writing descriptions to defining criteria. Instead of a paragraph describing your target market, you have a task: "Identify and validate primary customer pain point." The success criteria aren't vague. They're atomic: "Task passes when: 1. Five interviews are completed with people matching our ideal customer profile. 2. At least 80% independently mention the specific problem we hypothesize. 3. Interview transcripts are synthesized into a one-page problem statement."

This approach is heavily influenced by the scientific method and agile development practices. You start with a hypothesis (our target customer has problem X), design an experiment (customer interviews), define what success looks like (specific feedback patterns), and then execute. The result is either a pass (hypothesis confirmed, proceed) or a fail (hypothesis invalidated, pivot).
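To make the hypothesis, experiment, and criteria structure concrete, here is a minimal sketch in Python. The names `Criterion`, `AtomicTask`, and the evidence keys are hypothetical, chosen only for illustration; the three criteria mirror the interview example above.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical names throughout: Criterion, AtomicTask, and the evidence keys
# are illustrative and do not come from any existing tool.

@dataclass
class Criterion:
    description: str
    check: Callable[[Dict[str, float]], bool]  # True when the criterion is met


@dataclass
class AtomicTask:
    hypothesis: str
    experiment: str
    criteria: List[Criterion] = field(default_factory=list)

    def evaluate(self, evidence: Dict[str, float]) -> str:
        """Return "PASS" only if every criterion holds, otherwise "FAIL"."""
        return "PASS" if all(c.check(evidence) for c in self.criteria) else "FAIL"


task = AtomicTask(
    hypothesis="Our target customer has problem X",
    experiment="Customer interviews",
    criteria=[
        Criterion("Five interviews completed",
                  lambda e: e["interviews_completed"] >= 5),
        Criterion("At least 80% mention the problem",
                  lambda e: e["mention_rate"] >= 0.80),
        Criterion("One-page problem statement written",
                  lambda e: e["problem_statement_pages"] == 1),
    ],
)

evidence = {"interviews_completed": 5, "mention_rate": 0.8, "problem_statement_pages": 1}
print(task.evaluate(evidence))
```

The design choice worth noting is that `evaluate` has no "mostly passed" outcome: a single unmet criterion fails the task, which is what keeps the result binary.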

| | Traditional AI Business Plan | Executable Business Plan |
|---|---|---|
| Output | Static document (PDF, Doc) | Dynamic workflow of atomic tasks |
| Success Metric | Completeness, polish, professionalism | Pass/Fail criteria for each task |
| Nature | Descriptive, assumptive | Experimental, testable |
| When It's "Done" | When the document is finished | When all critical-path tasks pass |
| Adaptability | Low; requires full rewrite | High; tasks can be re-ordered, added, or removed based on results |
| Primary Use | Persuasion (investors, partners) | Execution (your own action) |

The methodology behind building these executable plans is what we call skill generation. It's the process of deconstructing any complex goal—"start a business," "launch an app," "grow to 1000 users"—into a linear or branching series of these atomic, testable tasks. It forces clarity and exposes assumptions early. If you can't break a step down into something with a clear pass/fail condition, you haven't thought it through enough to execute it.

This is where most AI advice falls short. Prompts like "write a business plan for a SaaS app" produce the descriptive document. What we need are prompts and systems that help us build the experimental workflow. It's a different way of thinking, and it requires different tools. For a deeper dive into crafting prompts that drive action, not just output, our guide on effective AI prompts for solopreneurs explores this mindset shift in detail.

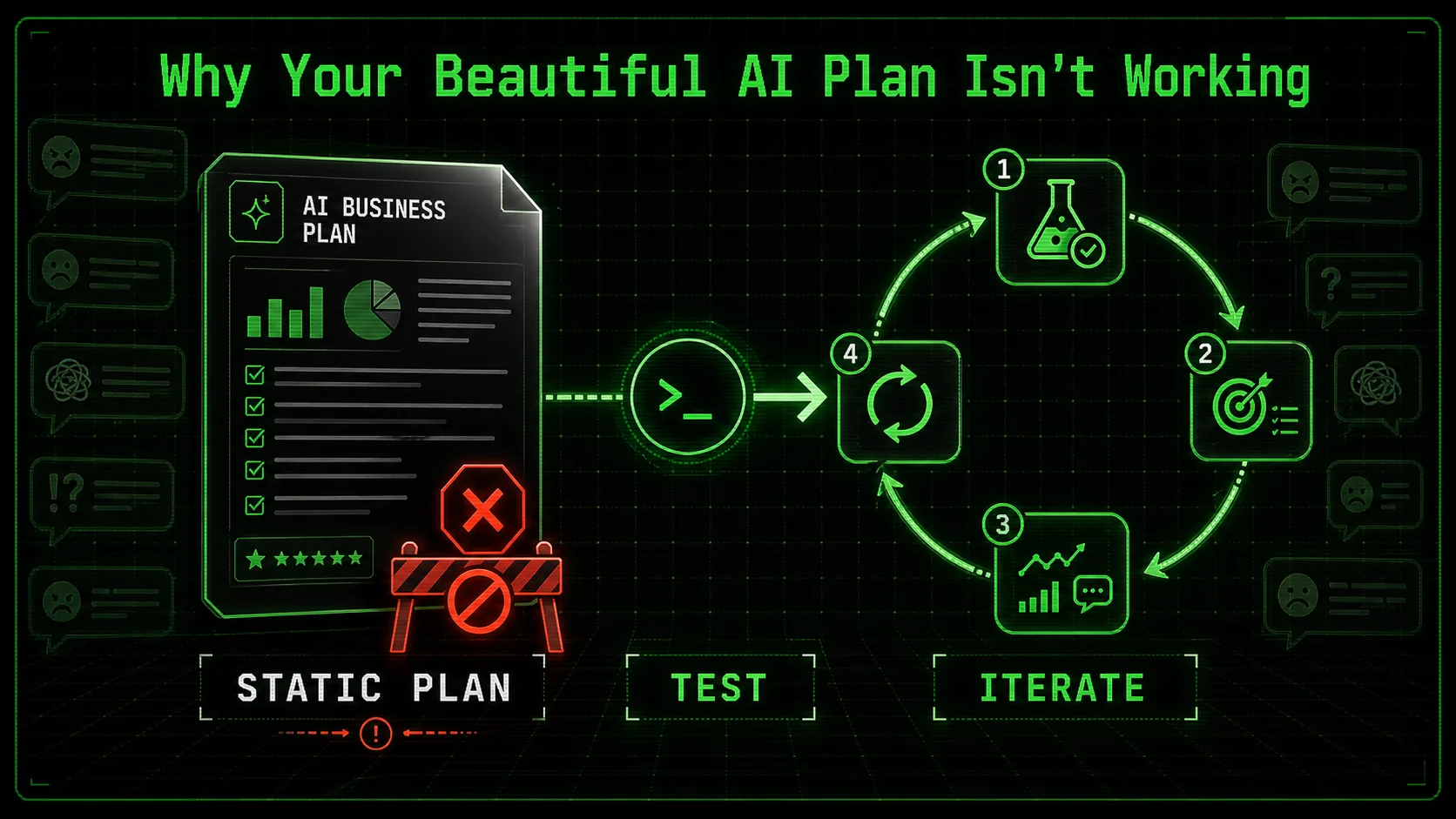

Why Your Beautiful AI Plan Isn't Working

Static Claude, GPT-4, and Cursor outputs create an illusion of completeness -- a polished document built on untested assumptions that Anthropic's own research shows users rarely decompose into actionable steps without structured prompting.

The feeling of being stuck with a perfect plan is so common it's becoming a meme in builder circles. The issue isn't a lack of effort or intelligence. It's a structural problem with how we're using generative AI. These models are trained on mountains of existing business literature, templates, and successful company narratives. When you ask for a plan, they give you a convincing average of all those sources. It's a recombination of known patterns, not a blueprint for your unique, uncertain journey.

Let's break down the specific reasons this approach fails.

The Illusion of Completeness

A 30-page document, complete with sections like "SWOT Analysis" and "Five-Year P&L Projection," creates a powerful psychological effect: the illusion of completeness. Your brain checks the box. "Business plan: done." This is dangerous because the hardest work (the learning) hasn't even started. The plan is built on a foundation of untested assumptions you likely pasted into the prompt. The AI doesn't know if your target market is real, if your pricing is feasible, or if your solution is desirable. It just knows how a document discussing those things should be written.

This illusion paralyzes action. When faced with a monolithic, finished-looking document, the next step feels monumental. Do you start on page 1? Page 10? The document doesn't tell you. It presents everything as equally important and simultaneously true. In reality, startup progress is massively uneven. You might spend three weeks validating a single core assumption and only an afternoon writing copy once it's validated. The AI plan obscures this reality.

Lack of Clear, Atomic Next Actions

Open your AI-generated plan. Find the "Marketing Strategy" section. It probably says something like "Leverage content marketing and SEO to drive organic traffic, complemented by targeted social media advertising." Sounds reasonable. Now, as a solo founder on a Monday morning with three hours to work, what do you do? "Leverage content marketing" is not an action. It's a category.

This is the classic failure of abstraction, one that applies equally to Claude, GPT-4, GitHub Copilot, and Cursor. The AI operates at the strategic abstraction level because that's what most business writing does. Your job as an executor is to drill down from strategy to tactic to specific action. The AI leaves you at the strategy level, dangling. An executable plan skips the fluffy strategy statement and goes straight to: "Task 1: Write a 1200-word beginner's guide targeting the keyword '[your core problem] tutorial.' Success: Article published, passes Grammarly check, includes one relevant internal link."

Without these atomic next actions, you default to what feels urgent or familiar, not what's strategically validated. You tweak your logo instead of talking to customers.

No Built-In Feedback Loops

A static document is a one-way street. You write it, you read it. There's no mechanism for the plan to learn from reality. In contrast, building a business is a constant dialogue with the market. You try something, the market responds, you adapt.

A proper executable plan is built around these feedback loops. Every task that involves an external interaction (a customer interview, a landing page test, a pricing experiment) has a "result" step. That result, whether pass or fail, dictates the next task. This creates a dynamic, adaptive system. Maybe your interview task fails (customers don't have the problem you thought). The next task in your flow isn't "proceed to build MVP," it's "pivot to explore problem Y mentioned in interviews."

Your AI-generated document has no such circuitry. It's a linear narrative that assumes you were right from the beginning. When reality inevitably proves you wrong on some point, the entire document becomes obsolete, and you're back to square one with a feeling of failure. A 2025 report from the Startup Genome project confirmed this, noting that startups that adopt structured validation processes early have a 30% higher survival rate after three years. They're not smarter; they have systems that force them to learn and adapt quickly.

The "Strategy-Execution Gap" is Now an AI Problem

Management consultants have talked about the strategy-execution gap for decades: the disconnect between a company's plans and its ability to carry them out. Generative AI has democratized this problem. Now, a single person can generate a strategy document in minutes that would have taken a consulting team weeks, but the execution gap remains, and can feel even wider because the strategy looks so authoritative.

The gap exists because strategy and execution require different kinds of thinking. Strategy is convergent thinking: synthesizing information into a coherent story. Execution is divergent thinking: exploding a story into a thousand concrete details and decisions. LLMs are brilliant at the former and terrible at the latter without very specific guidance. They can write the story of a successful launch party but can't tell you that you need to call the venue, finalize the menu, and send the invites by specific dates.

To bridge this gap, you need to force the AI to do the divergent, executional thinking. This is the core of moving beyond generic documents. It's about using AI as a thinking partner to decompose goals, not just describe them. Exploring a hub of focused AI prompts can provide the starting templates for this kind of interactive, decomposition-focused dialogue.

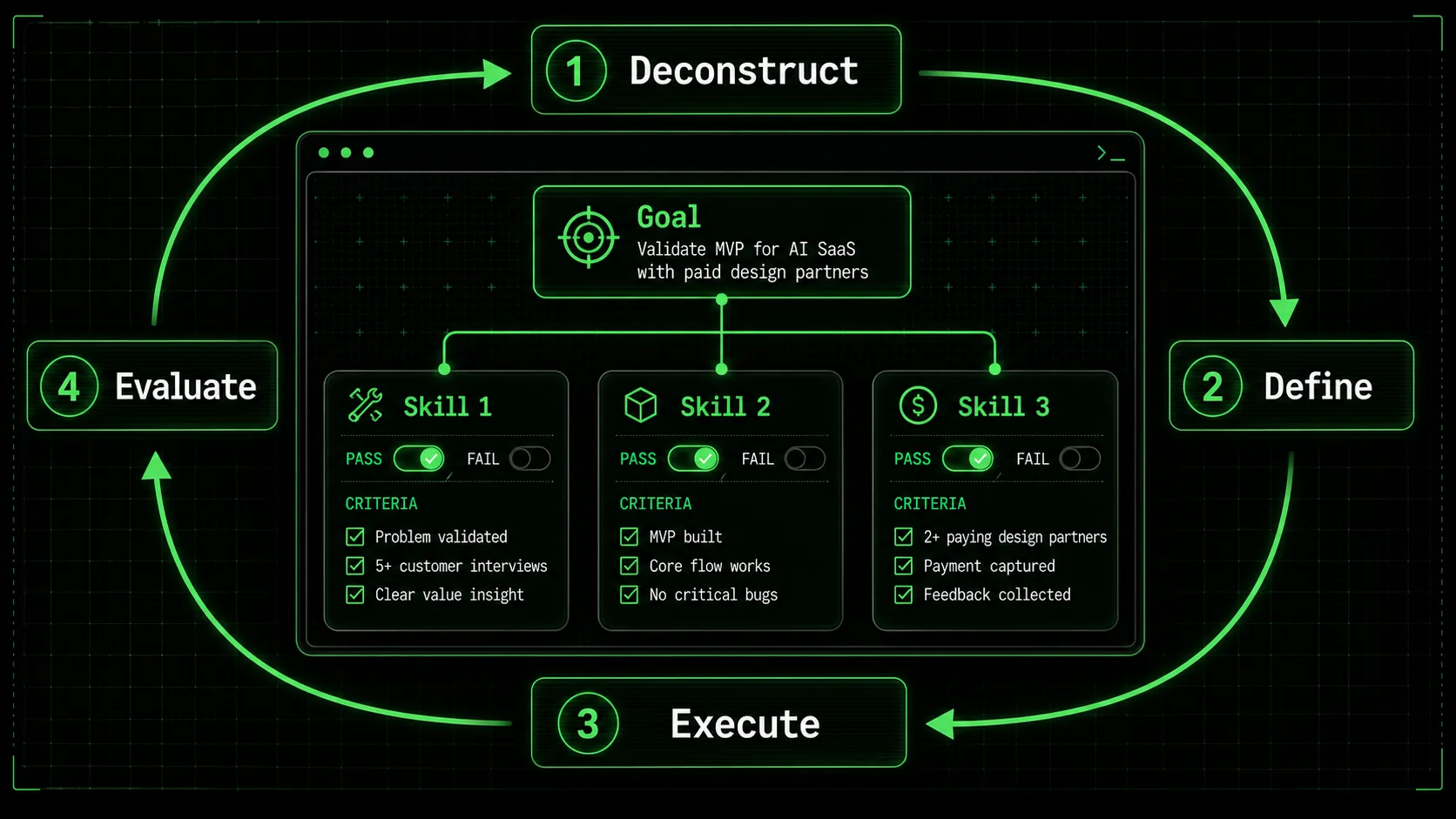

How to Build an Executable, Iterative Business Plan

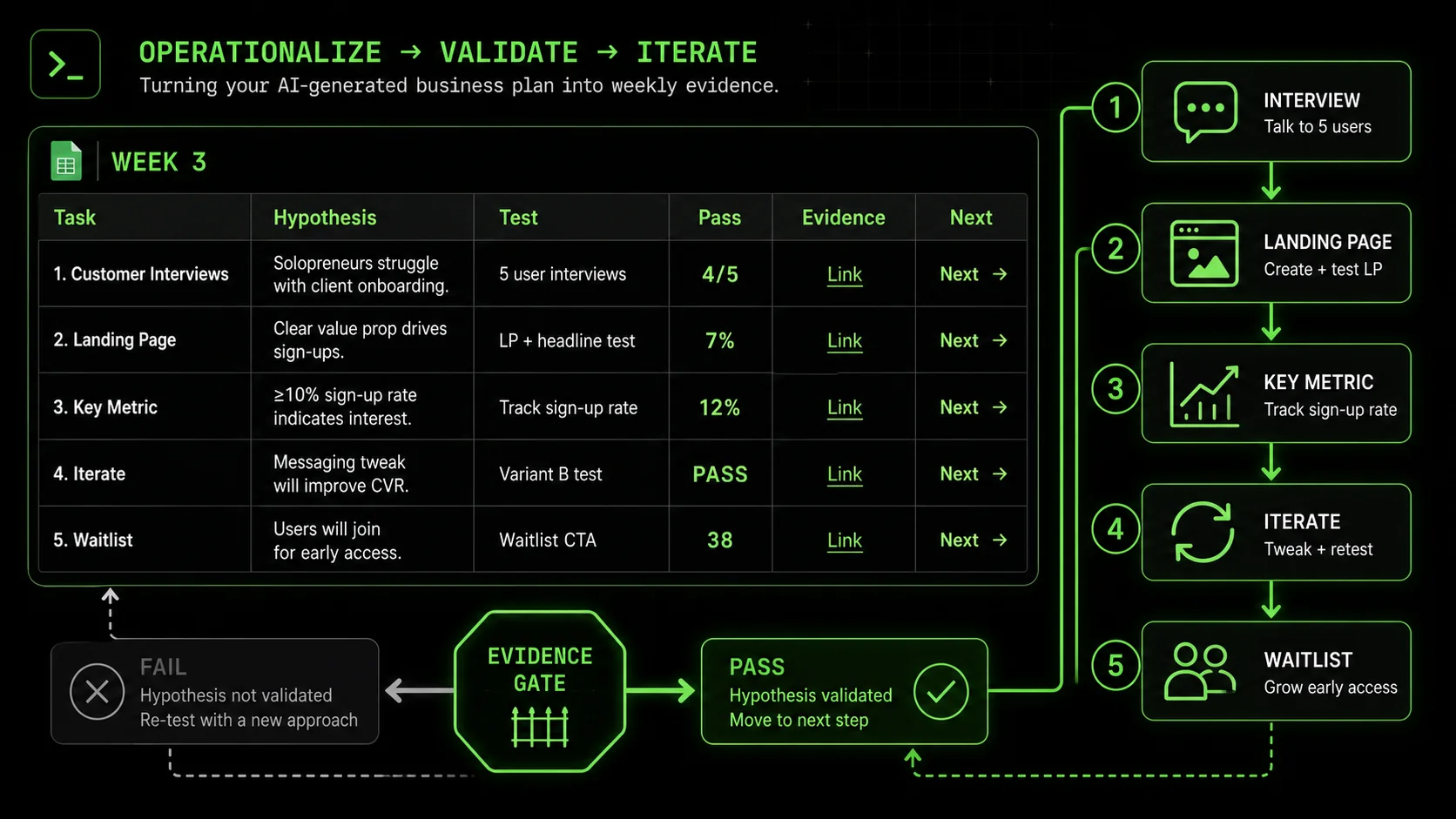

Use a four-step cycle -- Deconstruct, Define, Execute, Evaluate -- to convert Claude or GPT-4 strategy outputs into weekly sprints of atomic hypothesis tests with machine-checkable pass/fail gates.

Forget the 30-page document. Start with a single, clear goal. It could be "Get first paying customer," "Launch MVP to 100 beta users," or "Validate that problem X is worth solving." This goal is your destination. Now, we're going to build the map, not by drawing the whole route at once, but by defining the very next leg of the journey and how we'll know we're on the right path.

This method uses a cycle: Deconstruct, Define, Execute, Evaluate. It's iterative by design. You won't plan the whole business upfront. You'll plan the next learning loop.

Step 1: Deconstruct Your Grand Goal into Atomic Hypotheses

Your big goal is made of assumptions. Write them down. For "Get first paying customer," assumptions might include:

- People have Problem X and are actively looking for a solution.

- They are willing to pay money for a solution.

- Your specific solution approach (the core feature) appeals to them.

- You can reach these people through Channel Y.

- Your proposed Price Z is within their acceptable range.

Each of these is a hypothesis that needs testing. The first step of your executable plan is to convert the most critical, riskiest hypothesis into a testable task. Usually, this is the problem hypothesis. If people don't have the problem, nothing else matters.

How to do it with AI: Don't ask for a plan. Ask for help deconstructing.

Prompt: "Act as a startup coach. My goal is to get my first paying customer for [your idea]. List the 5 riskiest assumptions I'm making that must be true for this to happen. For each assumption, suggest one concrete, small task I could do to test it with a clear pass/fail condition."

This prompt forces divergent thinking. The AI will move from strategy ("you need a marketing plan") to specific tests ("interview 5 people who match your target profile and ask them about their process for dealing with [problem]").

Step 2: Define Tasks with Unambiguous Pass/Fail Criteria

This is the heart of the method. A task is only useful if you know exactly when it's done and successful. Vague tasks create ambiguity and let you off the hook.

Bad Task: "Talk to some potential customers."

Good Task: "Conduct 5 problem validation interviews."

- Pass Criteria:

  1. 5 interviews scheduled and completed with individuals who match our initial Ideal Customer Profile (ICP) criteria.

  2. Interviews are recorded (with permission) and key quotes transcribed.

  3. At least 4 out of 5 interviewees spontaneously mention the core problem we hypothesize, without leading them.

- Fail Criteria: The task fails if we cannot find 5 qualified people to interview, or if fewer than 4 mention the problem.

Notice the specificity. "5 interviews." "Spontaneously mention." This removes opinion from the result. You can't finish the task and say "well, I feel like they had the problem." The data says yes or no.
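The interview criteria above are mechanical enough to check in code. The following is a rough sketch, not a prescribed implementation: the `Interview` record and `evaluate_interviews` function are hypothetical names. One assumption goes beyond the text: if you run more than five qualified interviews, the "4 out of 5" rule is applied as a proportion.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Interview:
    matches_icp: bool           # interviewee fits the Ideal Customer Profile
    recorded: bool              # recorded with permission, key quotes transcribed
    mentioned_unprompted: bool  # raised the core problem without being led


def evaluate_interviews(interviews: List[Interview],
                        required: int = 5,
                        min_mentions: int = 4) -> str:
    """Apply the pass/fail criteria to a batch of interview records."""
    # Only interviews that meet criteria 1 and 2 count toward the total.
    qualified = [i for i in interviews if i.matches_icp and i.recorded]
    if len(qualified) < required:
        return "FAIL"  # could not find enough qualified people
    mentions = sum(i.mentioned_unprompted for i in qualified)
    # Assumption: "4 out of 5" scales proportionally for larger batches.
    # Integer cross-multiplication avoids floating-point comparison.
    if mentions * required >= min_mentions * len(qualified):
        return "PASS"
    return "FAIL"


batch = [Interview(True, True, True)] * 3 + [Interview(True, True, False)] * 2
print(evaluate_interviews(batch))  # 3 of 5 mentioned the problem
```

With three unprompted mentions out of five, the function returns "FAIL", matching the rule that fewer than four mentions fails the task.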

Tool Tip: Use a simple tool to track this. A spreadsheet works. A table in a doc works. The columns you need are: Task, Status (Todo/In Progress/Pass/Fail), Pass Criteria, Notes/Links. More advanced tools like Trello, Asana, or Notion databases are perfect for this. For complex project breakdowns, Notion's databases are incredibly flexible for creating these custom workflows.

Step 3: Execute and Document Relentlessly

Now you do the task. But execution isn't just about checking the box. It's about gathering evidence. For the interview task, the evidence is the recordings, transcripts, and your summary notes. Store links to these directly in your task tracker (in the Notes/Links column).

This documentation serves two purposes:

During execution, your job is to follow the script of the task as defined, but also to observe. Did something unexpected happen? Did interviewees keep mentioning a related problem you hadn't considered? Note it down. This is the "Evaluate" part feeding back into "Deconstruct."
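If you prefer a plain file to a spreadsheet app, the tracker with its four columns (Task, Status, Pass Criteria, Notes/Links) can be sketched with Python's standard `csv` module. The helper names `make_row` and `to_csv` are hypothetical, and the link is a placeholder.

```python
import csv
import io

COLUMNS = ["Task", "Status", "Pass Criteria", "Notes/Links"]
STATUSES = {"Todo", "In Progress", "Pass", "Fail"}


def make_row(task, status, pass_criteria, notes=""):
    """Build one tracker row, rejecting statuses outside the allowed set."""
    if status not in STATUSES:
        raise ValueError(f"Unknown status: {status!r}")
    return {
        "Task": task,
        "Status": status,
        "Pass Criteria": pass_criteria,
        "Notes/Links": notes,
    }


def to_csv(rows):
    """Serialize tracker rows to CSV text that any spreadsheet can open."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


rows = [
    make_row(
        "Conduct 5 problem validation interviews",
        "In Progress",
        "At least 4 of 5 interviewees mention the problem unprompted",
        "https://example.com/interview-notes",  # placeholder evidence link
    ),
]
print(to_csv(rows))
```

Restricting `Status` to four values is deliberate: it leaves no room for a "sort of done" state in the tracker.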

Step 4: Evaluate and Generate the Next Skill

Once the task is complete, you enforce the pass/fail criteria. No fudging. If only 3 out of 5 mentioned the problem, the task fails. This is not a personal failure. It's a successful test! You just saved yourself months building something nobody wants. A failed test is valuable information.

Now, the system adapts.

- If PASS: Your hypothesis gained support. The next task logically follows from this success. Example: "Task 2: Draft a one-page problem statement using direct quotes from interviews. Pass: Statement is under 300 words, uses customer language, and is reviewed by 2 peers for clarity."

- If FAIL: You need a pivot. The next task is to explore the new information. Example: "Task 2 (Pivot): Analyze interview transcripts for the most commonly mentioned actual problems. Synthesize top 3. Pass: A ranked list of 3 alternative problem hypotheses with supporting quotes."

This is where the power of a loop comes in. The outcome of one task determines the next. Your plan is no longer static; it's a decision tree. You're not following a pre-written script; you're navigating based on evidence.
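The decision-tree idea can be written down as a small lookup table. This is only a sketch under stated assumptions: the task ids and the `PLAN` structure are hypothetical, and the two follow-up tasks are the pass and pivot examples from Step 4.

```python
from typing import Dict, Optional, Tuple

# Each entry maps a task id to:
# (description, next task id on PASS, next task id on FAIL).
# None means no follow-up has been defined yet.
PLAN: Dict[str, Tuple[str, Optional[str], Optional[str]]] = {
    "interviews": (
        "Conduct 5 problem validation interviews",
        "problem_statement",
        "pivot_analysis",
    ),
    "problem_statement": (
        "Draft a one-page problem statement using direct interview quotes",
        None,
        None,
    ),
    "pivot_analysis": (
        "Rank the top 3 problems actually mentioned in the transcripts",
        None,
        None,
    ),
}


def next_task(current: str, result: str) -> Optional[str]:
    """Return the id of the task that follows `current` given its result."""
    _, on_pass, on_fail = PLAN[current]
    if result == "PASS":
        return on_pass
    if result == "FAIL":
        return on_fail
    raise ValueError(f"Result must be 'PASS' or 'FAIL', got {result!r}")


print(next_task("interviews", "FAIL"))
```

Leaving later branches as `None` fits the method: you define the next leg only once the current result is in.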

Automating the Loop: This is where a tool like the Ralph Loop Skills Generator changes the game. Instead of manually deciding the next task, you can define these pathways in advance or use AI in the moment to generate the appropriate next skill based on the pass/fail outcome. It turns your plan from a document into an interactive, adaptive workflow. The generator helps you move from planning to doing, and you can see how this applies beyond just business planning to any complex task.

Building Your Full Plan: A Sample Workflow

Let's stitch a few steps together for a hypothetical "Micro-SaaS for freelance designers."

This workflow is rigorous, adaptive, and data-driven. Each step has a clear gate. You only move forward when you have evidence, not just hope.

Proven Strategies to Operationalize Your Plan

Weekly sprints, pre-mortems, a validation dashboard, and "good enough" criteria -- four strategies that turn Claude, GPT-4, or GitHub Copilot planning outputs into a continuous learning loop rather than a static artifact.

Knowing the method is one thing. Making it a habitual, low-friction part of your workflow is another. These strategies will help you embed executable planning into how you work.

Strategy 1: Work in Weekly Sprints, Not Grand Plans

Don't try to map the next six months. It's a waste of time because you'll learn too much that will change your direction. Instead, work in one-week sprints.

Every Friday afternoon or Monday morning, review your last skill's result. Then, define the one next skill you will complete that week. That's your entire plan for the week: achieve a pass on that single atomic task. This focuses your energy dramatically. You're not juggling 10 things; you're nailing one critical thing.

This sprint approach aligns perfectly with the concept of a Weekly Learning Goal. Instead of a to-do list, your goal is: "By Friday, I will have learned X by completing test Y with result Z." The output is learning, not just activity.

Strategy 2: Use the "Pre-Mortem" for Critical Skills

For tasks that feel high-risk or high-cost (like building a core feature), run a pre-mortem before you start. Ask yourself and your AI co-pilot: "Imagine it's 6 months from now and this task/feature completely failed. What are the most likely reasons?"

List out 3-5 reasons. Common ones include "We built it but users didn't understand it," "The performance was too slow," "It didn't integrate with their existing workflow." Then, for each potential failure reason, ask: "What smaller, cheaper task could we do now to test if this risk is real?"

Often, this pre-mortem will reveal that you should replace one big, risky task with 2-3 smaller, safer validation tasks. It's a forcing function for decomposition.

Strategy 3: Create a "Validation Dashboard"

Your evidence (interview clips, survey results, analytics screenshots, user test recordings) should live in one place. Create a "Validation Dashboard" in a tool like Notion, Coda, or even a simple Google Doc.

Structure it by hypothesis. Under the hypothesis "Freelancers will pay $29/mo for this," have subsections for:

- Task 1: Price sensitivity survey (Link to results)

- Task 2: Concierge MVP test (Link to payment records & feedback)

- Task 3: A/B test on pricing page (Link to analytics screenshot)

This dashboard becomes your single source of truth. When doubt creeps in (and it will), you can open it and see the cumulative weight of evidence for or against your decisions. It's also invaluable if you ever bring on a co-founder, seek funding, or need to explain your reasoning to someone else. For grounding your data in real-world SEO and traffic insights, regularly consulting a tool like Google Analytics is non-negotiable for measuring what happens after you launch.
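For readers who keep their dashboard in code or export it from a tool, the hypothesis-first structure might look like the sketch below. The `DASHBOARD` layout and `summarize` helper are hypothetical, and the pass/fail results and links are invented sample data, not real outcomes.

```python
from collections import Counter
from typing import Dict, List, Tuple

# hypothesis -> list of (task, result, evidence link).
# Results and links are invented placeholders for illustration only.
DASHBOARD: Dict[str, List[Tuple[str, str, str]]] = {
    "Freelancers will pay $29/mo for this": [
        ("Price sensitivity survey", "PASS", "https://example.com/survey-results"),
        ("Concierge MVP test", "FAIL", "https://example.com/payment-records"),
        ("A/B test on pricing page", "PASS", "https://example.com/analytics"),
    ],
}


def summarize(dashboard: Dict[str, List[Tuple[str, str, str]]]) -> Dict[str, Counter]:
    """Tally PASS and FAIL results per hypothesis."""
    return {
        hypothesis: Counter(result for _, result, _ in entries)
        for hypothesis, entries in dashboard.items()
    }


for hypothesis, tally in summarize(DASHBOARD).items():
    print(f"{hypothesis}: {tally['PASS']} pass, {tally['FAIL']} fail")
```

A tally like this is only a summary; the linked evidence remains the thing to reread when doubt creeps in.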

Strategy 4: Embrace "Good Enough" Criteria

Perfectionism is the enemy of execution. When defining pass/fail criteria, be rigorous but reasonable. "100% of users must love it" is a bad criterion. "70% of test users can complete the core job-to-be-done without help" is a good one.

The goal of each skill is to reduce uncertainty, not to achieve perfection. A pass means "there's enough signal here to justify investing in the next step," not "this is flawless." This "good enough" mindset allows you to maintain velocity and keep learning loops tight. You can always circle back and improve something later with a new, focused skill (e.g., "Skill 27: Improve onboarding flow completion rate from 70% to 85%").

Got Questions About Executable AI Planning? We've Got Answers

Answers to the four most common questions about task sizing, handling failures, tool choice across Claude/GPT-4/Cursor, and the biggest pass/fail criteria mistake that stalls solopreneurs.

How long should each atomic task take?

Aim for tasks you can complete in a few hours to a few days. If a task stretches beyond a week, it's probably not atomic enough and should be broken down. The sweet spot is 2-4 hours of focused work, plus any waiting time for external responses (like waiting for survey results). The point is to have a frequent rhythm of testing and learning, not long marathons of building in the dark.

What if I keep failing tasks? Doesn't that mean my idea is bad?

Not at all. In the early stages, failing tasks is the primary way you learn. If you fail three tasks in a row trying to validate the same core problem, that's strong evidence you need to pivot the problem space. That's a huge win: you've just avoided building the wrong thing. The system is working. The failure is in the idea, not in you. A bad idea would be one where you ignore the fails and plow ahead anyway.

Can I use this method with any AI tool?

Yes, the methodology is tool-agnostic. You can apply it using ChatGPT, Claude, Gemini, or a pen and paper. However, general-purpose chatbots require more discipline in your prompting to force them into the decomposition mindset. Tools specifically built for skill generation, like Ralph Loop, bake this methodology into the interface, guiding you to define criteria and manage the pass/fail loop. They reduce the friction of maintaining the system.

What's the biggest mistake people make when switching to this approach?

The biggest mistake is defining pass/fail criteria that are still too vague or subjective. "Make the landing page look good" is vague. "Achieve an average time-on-page of at least 15 seconds for first-time visitors from organic search" is specific and measurable. The second biggest mistake is not enforcing the criteria. It's tempting to declare a pass when you're emotionally attached to an outcome, even if the data says fail. You must trust the system. The criteria are your impartial judge.

Ready to turn your next big idea into a series of small, certain wins?

If your business plan involves investor pitches, our companion article on why your AI business plan is failing the investor meeting covers the presentation-side gap. For Anthropic's Claude-specific strategic planning pitfalls, see our guide on AI assistant strategic planning vs guessing.

Ralph Loop Skills Generator helps you deconstruct any complex goal into atomic, testable tasks with clear pass/fail criteria. Stop staring at perfect plans and start executing adaptive workflows. Generate your first skill and close the AI strategy-execution gap today.


ralph

Building tools for better AI outputs. Ralphable helps you generate structured skills that make Claude iterate until every task passes.