Why Your AI's 'Perfect' Business Plan is Failing the First Investor Meeting

AI can draft a business plan, but can it make it investor-ready? Discover why generic AI output fails and how atomic validation skills create plans that survive real-world scrutiny.

You just spent three hours with Claude, and the result is a 40-page business plan that looks like it was designed by McKinsey. The TAM is a billion dollars, the five-year projections are hockey-stick perfect, and the competitive analysis matrix is color-coded to perfection. You send it to a potential investor, a partner at a mid-tier VC who asked for a "first look." The reply comes back in 48 hours: "Thanks for sharing. The deck is polished, but it feels generic. The assumptions aren't grounded. Let's reconnect when you have more validation."

Sound familiar? You're not alone. As of March 2026, firms like Sequoia and Andreessen Horowitz report a 40% spike in pitches featuring what they call "AI plan fatigue"—documents that are structurally flawless but substantively hollow. The problem isn't that AI is bad at writing. The problem is we're asking it to write a story instead of building a case. A story can be convincing on paper; a case must withstand interrogation.

This article isn't about prompting better. It's about engineering validation directly into the creation process. We'll dissect why the standard "write me a business plan" prompt fails at the first meeting, and how a shift to atomic skills with pass/fail criteria forces Claude to do the hard work of assumption-testing and reality-checking before you ever hit send. The goal isn't a prettier document. It's a defensible one.

What Makes an AI-Generated Business Plan "Generic"?

Claude, GPT-4, and GitHub Copilot produce structurally perfect plans but lack validated claims -- Sequoia and a16z report a 40% spike in "AI plan fatigue" from documents with round TAMs, feature-grid competitor analysis, and no sensitivity modeling.


When an investor calls a plan "generic," they're not criticizing the font. They're identifying a fundamental lack of specific, validated claims that connect your unique solution to a real market problem. An AI, trained on millions of public documents, excels at producing the form of a business plan—the standard sections, the expected jargon, the conventional structure. It's assembling a convincing-looking artifact from patterns, not from proof.

Think of it like a movie set facade. From the front, it looks like a bustling saloon or a cozy bookstore. But there are no rooms behind the doors, no plumbing, no foundation. An investor's job is to walk around back and check. Generic AI output is all facade.

Let's break down the specific hallmarks of a generic, AI-assembled plan:

* The TAM is a Round, Impressive Number: It's always "a $1.2B market opportunity" or "tapping into the $50B wellness industry." The source is usually a single market research report (like those from Statista) cited without critical dissection. There's no discussion of the Serviceable Obtainable Market (SOM), the slice you can realistically capture in 18 months.

* Competitive Analysis is a Feature Grid: It lists 5 competitors and compares features (Price, UI, Integration, etc.) with checkmarks. It misses the nuanced analysis of why those competitors win customers, their true weaknesses (often operational, not product-based), and the unserved "job to be done" that they all ignore.

* Financials are Linear and Optimistic: Revenue grows 30% month-over-month, magically. Customer Acquisition Cost (CAC) starts high and plummets due to "virality" never fully explained. The model has no sensitivity analysis. What if your key supplier raises prices by 15%? What if your top-of-funnel conversion rate is half what you project? The AI doesn't ask these questions unless you force it to.

* The "Risks" Section is a CYA Formality: It lists universal risks like "competition," "regulation," and "economic downturn" with generic mitigation strategies ("We will monitor the competitive landscape"). It avoids the scary, specific risks that keep you up at night: your lead engineer leaving, a key patent being denied, or a core API you depend on changing its terms.

The core issue is one of input and task design. When you prompt "Write a business plan for a SaaS tool that helps remote teams collaborate," you're giving the AI a creative writing assignment. Its success criterion is "produce a coherent, professional-looking document that matches the pattern of other business plans." It has succeeded the moment it spits out the PDF.

What you needed was an engineering assignment: "Build a validated case for why this specific SaaS tool will capture a specific segment of the remote collaboration market within 24 months, and identify the three most critical assumptions that could break that case." The success criteria are now about truth-seeking, not document completion.

| Aspect | Generic AI Plan (The Facade) | Validated, Investor-Ready Plan (The Building) |
|---|---|---|
| Market Size | Cites a large, round TAM from one source. | Breaks down TAM to SAM to SOM. Uses 2-3 sources, notes contradictions. |
| Competition | Feature comparison grid. | Analysis of business models, customer lock-in, and unmet jobs-to-be-done. |
| Financial Model | Linear, optimistic projections. | Driver-based model with built-in sensitivity analysis (3 scenarios). |
| Risks | Generic industry risks (competition, regulation). | Specific, acute risks to this business with mitigation ownership assigned. |
| Success Criteria | Document is complete and looks professional. | Core business hypotheses are tested and supported with evidence. |

The Anatomy of a Validated Claim

A claim in a business plan is not a statement. It's a hypothesis bundled with its supporting evidence and its testing methodology. A generic plan says, "We will acquire customers through content marketing at a CAC of $50." A validated plan says, "Hypothesis: We can acquire SMB customers via LinkedIn content marketing at a CAC < $50. Evidence: We ran three pilot campaigns targeting [specific job title] in [specific industry]. Average CPC was $2.10, landing page conversion was 4.4%, leading to a projected CAC of $48. Validation Threshold: We need to scale the campaign 5x with <20% CAC degradation to confirm channel viability."

The second version is harder to write. It requires the AI to not just state the goal, but to structure the thinking behind it, demand evidence, and define what "passing" looks like. This is where moving beyond simple prompting to structured skill generation changes the game. It's the difference between asking for a speech and asking for a cross-examination.
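One way to make the hypothesis / evidence / threshold structure concrete is to store a claim as data and compute whether it passes. The sketch below is illustrative only: the class name, field names, and the scaled-campaign CAC values are assumptions, while the $50 target, $48 pilot CAC, and 20% degradation limit come from the example above.

```python
from dataclasses import dataclass


@dataclass
class ValidatedClaim:
    """A plan claim stored as hypothesis + evidence + validation threshold."""
    hypothesis: str
    target_cac: float       # CAC ceiling stated in the hypothesis
    pilot_cac: float        # CAC observed in the small pilot (the evidence)
    max_degradation: float  # allowed relative CAC increase when scaling, e.g. 0.20

    def pilot_supports_hypothesis(self) -> bool:
        # The pilot supports the claim only if measured CAC is under the target.
        return self.pilot_cac < self.target_cac

    def scale_test_passes(self, scaled_cac: float) -> bool:
        # Degradation is the relative CAC increase after scaling the campaign.
        degradation = (scaled_cac - self.pilot_cac) / self.pilot_cac
        return degradation < self.max_degradation


claim = ValidatedClaim(
    hypothesis="Acquire SMB customers via LinkedIn content at CAC < $50",
    target_cac=50.0,
    pilot_cac=48.0,
    max_degradation=0.20,
)

# Hypothetical scaled-campaign results, not data from the article.
for scaled_cac in (55.0, 60.0):
    verdict = "pass" if claim.scale_test_passes(scaled_cac) else "fail"
    print(f"Scaled CAC ${scaled_cac:.0f}: {verdict}")
```

Writing the threshold as a function means the claim can fail, which is what separates a hypothesis from a statement.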

Why "Polished & Generic" Is Now a Death Sentence

Investors now flag flawless Anthropic Claude or OpenAI GPT-4 output as a red flag -- plans that lack critical thinking, a defensible "why now," and implementation evidence get rejected regardless of formatting quality.

The backlash is here. For the past two years, founders have enjoyed a massive leverage boost from LLMs. You could go from idea to a stunning pitch deck in an afternoon. The initial reaction from investors was surprise, then appreciation for the increased clarity. But as the volume of these polished plans skyrocketed, the signal-to-noise ratio collapsed. What was once a sign of competence is now a potential red flag.

A partner at a seed-stage fund told me last month, "When I see a plan that's too perfect—the formatting impeccable, every section filled, the grammar flawless—my first instinct is to skim for substance. I'm looking for the one messy, handwritten part. The screenshot of a real customer email. The link to a crude prototype. The admission of a failed experiment. That's where the real business is."

The failure at the first meeting isn't a rejection of your idea. It's a rejection of the process (or lack thereof) that the plan represents. An investor is buying into your team's ability to navigate uncertainty, test hypotheses, and adapt. A generic plan suggests you skipped that core muscle-building work in favor of a fast, pretty answer.

Let's look at the three specific problems that doom a generic AI plan in 2026.

Problem 1: It Demonstrates a Lack of Critical Thinking

Investors fund people who think deeply about their business. A plan that reads like a Wikipedia summary of an industry shows you haven't engaged in the messy, contradictory work of finding truth. When every claim is surface-level and cites the first Google result, it signals you might build a product the same way—by implementing generic best practices instead of discovering unique insights.

For example, an AI can easily write: "The project management software market is crowded, but our differentiator is a focus on user experience." An investor's immediate thought is, "Every single one of your competitors claims a focus on user experience. What does that actually mean?" A plan built with validation skills would force a deeper dive: "Hypothesis: Mid-level project managers at marketing agencies are frustrated by the cognitive overhead of existing tools. Evidence: We interviewed 12 such managers. 9 cited 'constant context switching' as their top pain point. Differentiation: Our UI consolidates the briefing, asset review, and timeline adjustment workflows into a single, contextual pane, reducing reported task-switching by an average of 60% in user tests."

The latter requires the AI to structure an investigative process—define a target, gather evidence, and synthesize a specific solution. It moves from stating a common belief to proving a unique insight. This is the kind of work that turns a plan from a description into an argument. For solopreneurs especially, who wear every hat, using AI to systemize this critical thinking is a force multiplier. Frameworks from our guide on AI prompts for solopreneurs can help structure these investigative dialogues with Claude.

Problem 2: It Has No Defensible "Why Now?"

This is the killer question. Markets exist for years. Why will your company succeed right now? A generic plan will list "technological advancements" and "market trends." A validated plan identifies a specific, recent convergence of factors.

Perhaps a key supplier's API became public and affordable last year (a technical enabler). Maybe a new regulation (like GDPR or CCPA) created a compliance headache that is a wedge for your product (a regulatory enabler). Or a shift in consumer behavior post-pandemic opened a new channel (a behavioral enabler).

An AI won't connect these dots unless you task it with scanning recent news, analyst reports, and competitor announcements for a specific sector and synthesizing the "change events" of the last 18-24 months. This is a perfect atomic skill: "Scan tech news from Crunchbase News and TechCrunch for the last 24 months related to [specific industry]. Identify and rank 3-5 potential market shifts or enabling technologies that could create an opening for a new entrant. Provide citations for each."

The output isn't a paragraph for your plan. It's a research brief that you then use to build a compelling "Why Now?" thesis. The plan's claim transitions from "The market is growing" to "The market is growing, and a specific regulatory change (Citation X) has disrupted incumbent workflows, creating a 12-month window for a compliant-first solution like ours."

Problem 3: It Ignores the Implementation Gauntlet

A business plan is not a destination; it's the first map for a perilous journey. Investors want to see that you've thought about the first 100 miles of terrain. A generic plan ends with the launch. A validated plan details the first three critical experiments.

What is the very first assumption you need to test? Is it that you can get interviews with your target customer? That you can build a working prototype in two weeks? That a specific influencer will respond to a cold email? The plan should name these milestones and, ideally, show they've already been started or completed.

This is where the atomic, pass/fail nature of skills in the Ralph Loop Skills Generator shines. You don't ask Claude to "write about go-to-market." You create a skill: "Skill: Design a 30-day pre-launch validation sprint. Task 1: Draft a LinkedIn outreach script to secure 5 customer discovery interviews with [ideal customer profile]. Pass Criteria: Script is under 200 words, includes a clear value proposition for the interviewee, and has a call-to-action for a 20-minute call. Task 2: Based on interview synthesis, list the top 3 product features for an MVP. Pass Criteria: Each feature is directly tied to a pain point mentioned by at least 3 interviewees." (If your Claude Code sessions feel like they produce diminishing returns when iterating on strategic content, our analysis of the feedback loop fallacy explains why more iterations do not always mean better output.)

Now, Claude is iterating on a concrete, executable validation task, not writing prose about strategy. The output of this skill becomes a core, non-generic part of your plan: "Our first validation sprint, targeting Head of Operations at Series B SaaS companies, secured 7 interviews. The synthesized pain points led us to prioritize three MVP features: (1) automated compliance document tagging, (2) a real-time audit trail dashboard, and (3) one-click reporting for common frameworks."
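Some of the pass criteria in that sprint skill are mechanical enough to check in code. The following is a rough sketch, not how the Ralph Loop Skills Generator evaluates skills: the function names, the substring test for the call-to-action, and the interview data are all hypothetical. It covers only the word limit, the 20-minute call mention, and the "at least 3 interviewees" rule. Whether the value proposition is clear still needs a human or model judgment.

```python
from collections import Counter


def script_passes(script: str, max_words: int = 200) -> bool:
    """Crude Task 1 check: under the word limit and mentions the 20-minute call."""
    under_limit = len(script.split()) < max_words
    has_cta = "20-minute" in script  # assumption: a substring is a good-enough proxy
    return under_limit and has_cta


def features_pass(feature_to_pain: dict[str, str],
                  interview_pains: list[list[str]],
                  min_mentions: int = 3) -> bool:
    """Task 2 check: every feature maps to a pain raised by >= min_mentions interviewees."""
    # Count each pain point at most once per interviewee.
    mentions = Counter(p for pains in interview_pains for p in set(pains))
    return all(mentions[pain] >= min_mentions for pain in feature_to_pain.values())


# Hypothetical interview synthesis: one list of pain points per interviewee.
interviews = [
    ["manual tagging", "audit prep"],
    ["manual tagging", "audit prep", "reporting"],
    ["manual tagging", "reporting"],
    ["audit prep", "reporting"],
]
features = {
    "automated compliance document tagging": "manual tagging",
    "real-time audit trail dashboard": "audit prep",
    "one-click reporting": "reporting",
}
script = "Hi, I'm researching compliance workflows. Could we do a 20-minute call next week?"

print(script_passes(script))
print(features_pass(features, interviews))
```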

This demonstrates executional intelligence. It shows you're using AI not as a copywriter, but as a project manager for de-risking your business. This approach aligns with the philosophy of moving beyond one-off prompts to a hub of interconnected AI prompts that manage complex, multi-stage projects. For a deeper look at how Anthropic's Claude handles multi-step autonomous planning, see our guide on Claude Code autonomous planning mode with atomic skills.

How to Build an Investor-Ready Plan with Atomic Validation Skills

Four atomic skills -- hypothesis deconstruction, TAM-to-SOM quantification, scenario-based financial modeling, and pre-mortem risk analysis -- let Claude or Cursor build an evidence-backed plan that survives investor scrutiny.

Forget the prompt "write a business plan." That single, monolithic task is your enemy. It produces a facade. Your new goal is to architect a series of small, verifiable tasks that, when completed, collectively build the evidence for your plan. You are the project lead, and Claude is your analyst, tasked with specific research, synthesis, and validation objectives.

This method turns the planning process from a writing exercise into a due diligence simulation. You're forcing the AI—and by extension, yourself—to answer the hard questions an investor will ask, before they ask them.

Here is a step-by-step method to build a plan that can survive a first meeting.

Step 1: Deconstruct Your Plan into Core Hypotheses

Before opening any AI tool, grab a notebook. Answer this: What are the 5-7 fundamental beliefs your entire business rests upon? These are your core hypotheses. They usually look like this:

These are not sections of a document. They are the load-bearing walls of your business case. Your entire planning effort now revolves around testing and supporting these hypotheses.

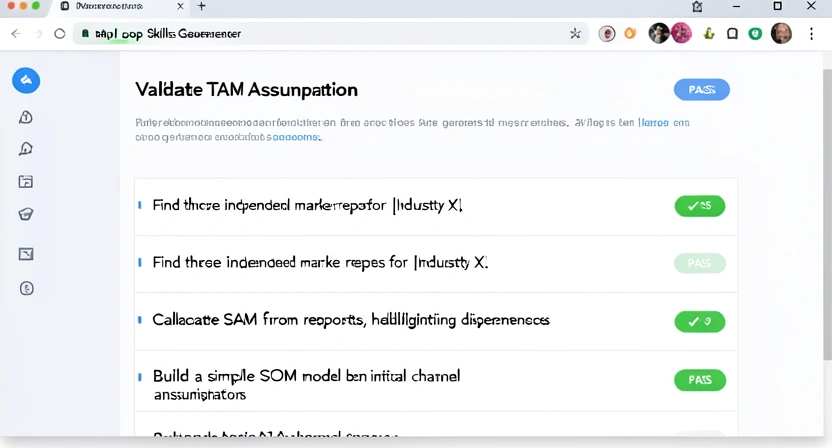

Step 2: For Each Hypothesis, Design an Atomic Validation Skill

This is where you engage the Ralph Loop Skills Generator. For each hypothesis, you create a dedicated "skill." The skill's goal is not to write text, but to produce validated evidence or flag a critical unknown.

Let's take the Market Hypothesis as an example.

Skill Name: Validate and Quantify Serviceable Obtainable Market (SOM)

Skill Goal: Produce a defensible SOM estimate with clear sources and logic, identifying the biggest unknown.

Task 1: Source TAM Data

* Instruction: Find three recent (last 24 months) market research reports or credible industry analyses for the [Your Industry] sector, focusing on [Your Geography]. Use sources like Statista, Gartner, Forrester, or well-cited industry publications.

* Pass Criteria: Reports are from at least two different publishers. Each source is linked, and the relevant TAM figure and year are extracted. Any major discrepancies between reports are noted.

Task 2: Calculate SAM (Serviceable Addressable Market)

* Instruction: Using the most conservative TAM figure, apply filters to define our SAM. Filters are: Customers using [specific technology/platform], companies with [employee size range], and within the [specific sub-vertical]. Estimate the percentage of the TAM each filter applies, citing rationale (e.g., "30% of businesses in this sector use Shopify, based on 2025 industry survey [link]").

* Pass Criteria: SAM is calculated with a clear formula: SAM = TAM x Filter1% x Filter2% ... Each filter percentage has a brief justification or source. The final SAM number is presented.

Task 3: Model SOM (Serviceable Obtainable Market) for Year 1

* Instruction: Based on our initial go-to-market strategy (e.g., outbound sales to 500 target companies, content marketing aiming for 10k website visitors/month), estimate what percentage of the SAM we can realistically reach and convert in the first 12 months. Use assumed reach percentages (e.g., 20% of SAM will see our messaging) and conversion rates (e.g., 2% of reached will become customers).

* Pass Criteria: A simple SOM model is built: SOM = SAM x Reach% x Conversion%. The assumptions for reach and conversion are listed explicitly as "Key Unknowns to Test." The output is a plausible Year 1 customer and revenue number.

When Claude runs this skill, it iterates on each task until it passes. The output isn't a paragraph saying "Our market is big." It's a mini-report with sourced data, a clear calculation, and—most importantly—a highlighted list of "Key Unknowns" (our reach and conversion rates). This is gold. You can now say in your plan: "Our conservative SOM for Year 1 is 200 customers generating $600k in revenue. This is based on a TAM of $X from Sources A & B, narrowed to a SAM of $Y. The critical assumption to validate is our ability to achieve a 2% conversion rate from reached prospects, which will be the focus of our Q3 pilot campaign."

You've replaced a generic claim with a sourced, transparent, and testable model. You've also demonstrated strategic thinking by identifying your key risk.
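The two formulas in the skill (SAM = TAM x Filter1% x Filter2% ... and SOM = SAM x Reach% x Conversion%) are simple enough to sketch directly. Every number below is a hypothetical input chosen so the result matches the 200-customer, $600k illustration above. The filter names, the company-count TAM, and the $3,000 annual revenue per customer are assumptions, not market data.

```python
def sam_from_tam(tam: float, filters: dict[str, float]) -> float:
    """SAM = TAM x Filter1% x Filter2% ... (each filter is a fraction in [0, 1])."""
    sam = tam
    for name, share in filters.items():
        if not 0.0 <= share <= 1.0:
            raise ValueError(f"filter {name!r} must be a fraction, got {share}")
        sam *= share
    return sam


def som_from_sam(sam: float, reach: float, conversion: float) -> float:
    """SOM = SAM x Reach% x Conversion%."""
    return sam * reach * conversion


# Hypothetical inputs; in a real plan each one needs a source or a stated rationale.
tam_companies = 1_000_000
filters = {
    "uses target platform": 0.25,
    "employee size in range": 0.40,
    "in target sub-vertical": 0.50,
}
sam = sam_from_tam(tam_companies, filters)

# Reach and conversion are the "Key Unknowns to Test".
customers = som_from_sam(sam, reach=0.20, conversion=0.02)
revenue = customers * 3_000  # assumed annual revenue per customer

print(f"SAM: {sam:,.0f} companies")
print(f"Year 1 SOM: {customers:,.0f} customers, ${revenue:,.0f} revenue")
```

Keeping reach and conversion as explicit arguments makes it easy to rerun the estimate once the pilot campaign produces real numbers.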

Step 3: Stress-Test Your Financial Model with Scenario Analysis

A generic financial model is a single-path fantasy. An investor-ready model is a tool for exploring uncertainty. Your next skill forces Claude to build not a projection, but an analysis.

Skill Name: Build a Driver-Based Financial Model with Scenario Analysis

Skill Goal: Create a simple, editable financial model (in a table) that identifies key drivers and shows outcomes under 3 scenarios.

Task 1: Identify 5 Key Business Drivers

* Instruction: For a [Your Business Type - e.g., SaaS, Marketplace, E-commerce], list the 5 most sensitive variables that directly impact revenue and costs. (e.g., Monthly Website Visitors, Visitor-to-Signup Conversion %, Signup-to-Paid Conversion %, Average Revenue Per User (ARPU), Monthly Churn Rate).

* Pass Criteria: List of 5 drivers. Each has a brief explanation of its impact.

Task 2: Build a 12-Month "Base Case" Model

* Instruction: Create a table with 12 monthly columns. Using the drivers, build a simple model. Start with an initial value for Driver 1 (e.g., Month 1 Website Visitors = 5,000). Apply monthly growth rates or assumptions to each driver to project forward. Calculate Monthly Recurring Revenue (MRR) and key costs.

* Pass Criteria: A clear table exists. Formulas connecting drivers to revenue are logical and shown. The model produces a Base Case year-end MRR.

Task 3: Create "Upside" and "Downside" Scenarios

* Instruction: Copy the Base Case model. For the Upside scenario, adjust 2-3 key driver assumptions to more optimistic but plausible levels (e.g., conversion rate 25% higher). For the Downside scenario, adjust the same drivers to pessimistic levels (e.g., conversion rate 25% lower). Recalculate.

* Pass Criteria: Three separate model tables or one comparative table exist. The specific driver changes for each scenario are documented. The outcome variance (e.g., Downside MRR is 40% lower than Base) is stated.

Now, your financial section has immense credibility. You can present the Base Case but immediately say, "Our model is most sensitive to conversion rate and churn. As the scenario analysis shows, a 25% improvement in conversion drives an 80% increase in Year 1 revenue, while a 25% increase in churn cuts it by nearly half. Our first two operational priorities are therefore optimizing our signup funnel and implementing a customer success program to mitigate churn."

You've shown you understand what actually matters in your business, not just how to fill out an Excel template. This level of granular, assumption-driven planning is often where AI-powered business plans miss the mark, focusing on the output instead of the underlying economic engine.
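A driver-based model with three scenarios can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a template for a real model. The five drivers follow the examples named in the skill, but every starting value, the monthly growth rate, and the choice to flex paid conversion and churn by 25% are hypothetical. Costs are left out for brevity.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Drivers:
    visitors_m1: float     # Month 1 website visitors
    visitor_growth: float  # monthly visitor growth rate
    signup_rate: float     # visitor-to-signup conversion
    paid_rate: float       # signup-to-paid conversion
    arpu: float            # average revenue per user per month
    churn: float           # monthly churn rate


def year_end_mrr(d: Drivers, months: int = 12) -> float:
    """Roll the funnel forward month by month and return final-month MRR."""
    customers = 0.0
    visitors = d.visitors_m1
    for _ in range(months):
        customers *= 1.0 - d.churn                           # lose churned customers
        customers += visitors * d.signup_rate * d.paid_rate  # add new paid customers
        visitors *= 1.0 + d.visitor_growth                   # grow traffic for next month
    return customers * d.arpu


# Hypothetical base case; every value here is an assumption to be tested.
base = Drivers(visitors_m1=5_000, visitor_growth=0.10, signup_rate=0.05,
               paid_rate=0.20, arpu=50.0, churn=0.05)
scenarios = {
    "downside": replace(base, paid_rate=base.paid_rate * 0.75, churn=base.churn * 1.25),
    "base": base,
    "upside": replace(base, paid_rate=base.paid_rate * 1.25, churn=base.churn * 0.75),
}

base_mrr = year_end_mrr(base)
for name, drivers in scenarios.items():
    mrr = year_end_mrr(drivers)
    print(f"{name:>8}: year-end MRR ${mrr:,.0f} ({mrr / base_mrr - 1:+.0%} vs base)")
```

In this deliberately simple funnel, MRR scales linearly with the conversion rate. Any larger sensitivity would have to come from drivers the sketch does not model, which is a useful question to put to your own spreadsheet.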

Step 4: Conduct a Pre-Mortem on Your Biggest Risk

Every founder knows their biggest fear. Put it in the plan before the investor does. This skill uses a "pre-mortem" framework to force clear-eyed analysis.

Skill Name: Conduct a Pre-Mortem on [Your Biggest Risk]

Skill Goal: Define the single biggest risk to the business and outline a specific, early-warning detection system and mitigation plan.

Task 1: Define the "Company Failed" Scenario

* Instruction: Imagine it's 18 months from now. The company has failed. Write the 2-paragraph post-mortem from a tech blog headline, specifically citing how [Your Biggest Risk - e.g., failure to secure a key partnership, inability to hire a critical role, a technical scalability wall] caused the failure.

* Pass Criteria: A plausible, specific failure narrative is written, directly linking the risk to operational collapse.

Task 2: Identify the Early Warning Signals

* Instruction: Working backward from the failure, list 3-5 measurable metrics or events that would signal this risk is materializing, long before the actual failure. (e.g., for a partnership risk: "Key partner misses two consecutive quarterly check-in calls," "Integration project timeline slips by > 8 weeks," "Partner's public roadmap de-prioritizes the needed API features").

* Pass Criteria: A list of concrete, observable early-warning signals (EWS) exists.

Task 3: Draft the Mitigation "Playbook"

* Instruction: For each Early Warning Signal, draft one specific action the team will take if that signal is observed. Assign an owner (e.g., "CEO", "CTO"). (e.g., "If partner misses two check-ins, the CEO will schedule an in-person meeting to reassess commitment and simultaneously initiate outreach to two backup partner candidates.").

* Pass Criteria: A simple table matches each EWS with a specific action and owner.

Including this in your plan is powerful. It transforms a risk from a vague threat into a managed variable. You tell the investor: "We believe our single biggest risk is X. Here's how we'll know it's happening, and here's exactly what we'll do about it. We've already identified backup option Y." This demonstrates a level of operational maturity and realism that instantly separates you from the crowd of founders presenting risk-free fairy tales.
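The Task 3 pass criterion (every early-warning signal has an action and an owner) is easy to check mechanically. The sketch below assumes a hypothetical `PlaybookRow` structure. The three signals and the first action come from the examples above, while the other two actions and owners are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class PlaybookRow:
    signal: str  # early-warning signal (EWS)
    action: str  # specific response if the signal is observed
    owner: str   # role accountable for the action


def playbook_passes(rows: list[PlaybookRow],
                    min_signals: int = 3, max_signals: int = 5) -> bool:
    """Pass if there are 3-5 signals and each has a non-empty action and owner."""
    if not min_signals <= len(rows) <= max_signals:
        return False
    return all(r.signal.strip() and r.action.strip() and r.owner.strip() for r in rows)


playbook = [
    PlaybookRow("Key partner misses two consecutive quarterly check-in calls",
                "Schedule an in-person meeting and contact two backup partners", "CEO"),
    # The next two actions and owners are hypothetical placeholders.
    PlaybookRow("Integration project timeline slips by > 8 weeks",
                "Re-scope the integration to the minimum viable endpoints", "CTO"),
    PlaybookRow("Partner's public roadmap de-prioritizes the needed API features",
                "Start a build-vs-buy assessment for the missing features", "CTO"),
]

print(playbook_passes(playbook))
```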

Proven Strategies to Operationalize Validation

A living validation backlog, red-team Claude or GPT-4 skills, and a hyper-tactical "first 100 days" execution map transform AI-assisted planning from a one-time document into a continuous evidence-gathering discipline.

Building validation into your planning isn't a one-time event. It's an operational mindset. The goal is to make "seek evidence" a default step for every strategic decision, using AI as a structured thinking partner. Here are advanced tactics to embed this.

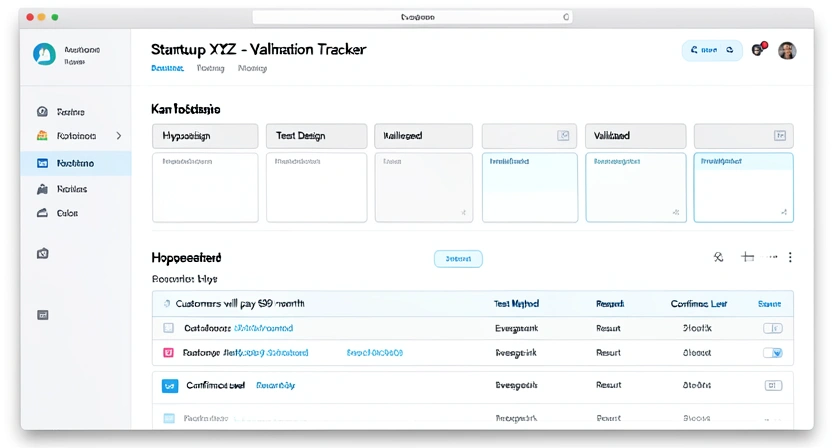

Strategy 1: Create a "Living" Validation Backlog

Your initial plan is snapshot v1.0. The moment you start executing, you'll learn new things. Instead of letting your plan become outdated, treat it as a living document fed by a validation backlog.

Create a simple table (in Notion, Airtable, or even a spreadsheet) with the following columns: Hypothesis, Validation Method (e.g., Customer Interview, A/B Test, Pilot), Success Metric, Evidence Link, Status (Not Started/In Progress/Validated/Invalidated), Date Updated.

Every week, review this backlog with your team (or by yourself). What did we learn this week that changes a hypothesis? What new assumption did we make that needs testing? Use a Claude skill to help synthesize weekly customer feedback or support tickets into potential new hypotheses to add to the backlog. This turns planning from a pre-funding chore into a core operational rhythm.
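If the backlog lives in a spreadsheet export or a small script rather than Notion or Airtable, the same columns map onto a simple record type. This is a sketch under assumptions: the class and function names, the 7-day staleness window, and the sample rows (including the example.com link) are hypothetical. Only the column names and status values come from the table described above.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum


class Status(Enum):
    NOT_STARTED = "Not Started"
    IN_PROGRESS = "In Progress"
    VALIDATED = "Validated"
    INVALIDATED = "Invalidated"


@dataclass
class BacklogItem:
    hypothesis: str
    validation_method: str
    success_metric: str
    evidence_link: str
    status: Status
    date_updated: date


def needs_review(items: list[BacklogItem], today: date,
                 stale_after_days: int = 7) -> list[BacklogItem]:
    """Return open items that have not been updated within the review window."""
    cutoff = today - timedelta(days=stale_after_days)
    open_statuses = {Status.NOT_STARTED, Status.IN_PROGRESS}
    return [i for i in items if i.status in open_statuses and i.date_updated < cutoff]


# Hypothetical backlog rows for illustration.
today = date(2026, 3, 16)
backlog = [
    BacklogItem("SMBs convert at 2% from outreach", "Pilot", "Conversion >= 2%",
                "", Status.IN_PROGRESS, date(2026, 3, 1)),
    BacklogItem("Ops leads will take a discovery call", "Customer Interview",
                "5 interviews booked", "https://example.com/notes",
                Status.VALIDATED, date(2026, 2, 20)),
    BacklogItem("Annual pricing beats monthly", "A/B Test", "Higher revenue per signup",
                "", Status.NOT_STARTED, date(2026, 3, 14)),
]

for item in needs_review(backlog, today):
    print(f"Stale: {item.hypothesis} (last updated {item.date_updated})")
```

A filter like this keeps the weekly review focused on hypotheses that are open but have gone quiet.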

Strategy 2: Use "Red Team" Skills

Assign Claude a "Red Team" role. After a core section of your plan is drafted (e.g., the Go-to-Market strategy), create a skill where the sole task is to attack it.

Skill Name: Red Team GTM Plan

Task: Act as a skeptical investor specializing in our sector. Review the provided GTM plan. List the 3 weakest, most assumptive claims. For each weak claim, propose one piece of evidence or one small experiment that would strengthen it. Frame your feedback as challenging questions.

Pass Criteria: Three specific, non-generic weaknesses are identified. Each has a corresponding, practical evidence request or experiment idea.

This forces you to confront weaknesses in a safe, private environment before they become public stumbling blocks in a meeting. It's like having a brutally honest co-founder who isn't worried about hurting your feelings.

Strategy 3: Build a "First 100 Days" Execution Map

Investors care about what you'll do with their money tomorrow. Dedicate a section of your plan to a hyper-tactical "First 100 Days" map. Don't use Claude to write fluffy goals. Use it to generate a specific project plan.

Skill Name: Generate First 100 Days Milestone Plan

Task: Based on our business model and immediate post-funding priorities (e.g., hire 2 engineers, launch beta, secure first 10 pilot customers), break down the first 100 calendar days into 10-15 concrete, weekly milestones. Each milestone should be a deliverable (e.g., "Beta v0.5 deployed to test environment," "Job descriptions for roles A & B published," "List of 50 target pilot companies finalized").

Pass Criteria: A timeline with 10-15 specific, deliverable-oriented milestones is produced. Milestones are sequenced logically (hiring before complex development, etc.).

This shows you're ready to execute, not just strategize. It gives the investor confidence that their wire transfer will immediately convert into tangible progress.

Got Questions About AI Business Plan Validation? We've Got Answers

Common questions about using Anthropic's Claude, OpenAI's GPT-4, GitHub Copilot, and Cursor to build investor-grade business plans with atomic validation skills.

How long does it take to build a validated plan this way?

Longer than a single afternoon, but shorter than you think. The initial setup (deconstructing hypotheses and designing the first 4-5 core validation skills) might take a few hours. Running the skills can take another 1-2 hours as Claude iterates. The total time is usually 4-8 hours of focused work, spread over a couple of days. The key difference is that 80% of that time is spent on active thinking, research, and analysis guided by the AI, rather than passive editing of AI-generated text. The output is fundamentally more valuable and will save you dozens of hours in failed investor meetings.

What if my market is too new for reliable data?

This is common in frontier tech. The validation skill shifts from "find the report" to "triangulate from proxies." Your task for Claude becomes: "Identify 3-5 adjacent or enabling markets that are measured. Estimate our potential market size by analogy. For example, if building quantum software tools, look at the early market size for GPU programming tools." The pass criteria become listing the proxies, the analogy logic, and clearly labeling all estimates as "speculative, based on analogy to Market X." Honesty about uncertainty, coupled with a logical method for estimation, is more credible than pretending precise data exists.

Can I use this for a non-tech business or a non-profit?

Absolutely. The framework is universal. A restaurant's core hypothesis might be "People in this neighborhood will pay a premium for authentic Neapolitan pizza." The validation skill would involve tasks like analyzing foot traffic data (from a tool like Placer.ai), surveying local community groups online, and modeling the economics based on nearby comparable restaurants. The principle is the same: break the belief into testable components and seek evidence for each.

What's the biggest mistake people make when starting with this method?

The biggest mistake is not being brutally specific in the pass/fail criteria for each atomic task. Vague criteria like "find good data" lead to vague outputs. Force yourself to define what "good" means. Is it "data from a .gov or .edu domain"? Is it "a survey with n>1000 respondents"? Is it "a quote from an industry expert with name and title cited"? The more precise your criteria, the more rigorous Claude's iterations become, and the higher the quality of the evidence you collect.

Ready to build a business plan that survives investor scrutiny?

Ralph Loop Skills Generator turns your AI from a document writer into a validation engine. Stop generating facades and start building evidence-based cases. Design your first atomic validation skill today and see how it forces the critical thinking that makes plans -- and businesses -- truly defensible. If your AI assistant is also sabotaging your team workflow with plausible-but-wrong output, the same atomic validation approach applies to operational planning.

Generate Your First Skill


ralph

Building tools for better AI outputs. Ralphable helps you generate structured skills that make Claude iterate until every task passes.