Stop Wasting Your AI Budget on Unstructured Prompts

Is your AI assistant burning through your budget? Learn how unstructured Claude Code sessions waste money and how atomic skills with clear pass/fail criteria deliver measurable ROI.

You wouldn't hire a brilliant but chaotic consultant, hand them a vague problem, and pay them by the hour to wander around until they stumble upon a solution. Yet, that's exactly how many developers and solopreneurs are using Claude Code and other powerful AI assistants. The initial excitement of getting a working prototype from a single prompt has given way to a creeping reality: the bill. As usage scales from hobbyist tinkering to core business operations, the financial inefficiency of unstructured prompting is becoming impossible to ignore.

This isn't just about saving a few dollars. It's about the fundamental economics of AI as a tool. When you pay per token—for both your input and the AI's output—every meandering thought, every redundant clarification, and every failed iteration isn't just wasted time; it's wasted money. The industry is waking up to this. Recent discussions around "AI FinOps" are moving the conversation from pure capability to cost-aware implementation. The goal is no longer just "can the AI do it?" but "can the AI do it efficiently and reliably enough to justify the cost?"

The real shift happens when you stop treating the AI as a magic box and start treating it as a deterministic worker. This means moving from open-ended conversations to structured workflows with clear, atomic tasks and unambiguous pass/fail criteria. It's the difference between paying for exploration and paying for execution. This article will break down the hidden costs of your current prompting habits, provide a concrete framework for calculating your actual AI ROI, and show you how a structured approach with atomic skills turns Claude Code from a cost center into a predictable, high-return asset.

Understanding the Real Cost of AI Prompts

Unstructured prompts to Claude, GPT-4, or GitHub Copilot waste 35-50% of tokens on clarification loops rather than producing usable output.

Most developers see the AI cost on their monthly bill as a single, somewhat mysterious number. It's easy to think, "Well, I got a lot of work done, so it's worth it." But that number is the sum of hundreds of individual interactions, each with its own efficiency quotient. To understand the waste, you need to dissect what you're actually paying for.

At its core, you are charged for tokens. Think of tokens as the currency of computation for the model. Every word you type (input tokens) and every word the AI generates (output tokens) costs money. The inefficiency arises because in an unstructured session, a massive portion of those tokens is spent on process rather than product. This includes:

- Context Buildup: Re-explaining the problem in each new message because the context wasn't established cleanly from the start.
- Clarification Loops: The back-and-forth of "Can you explain what you mean by X?" or "I need you to also consider Y."
- Hallucination Tax: Paying for Claude, GPT-4, or GitHub Copilot to generate confident but incorrect code or logic, which you then have to pay again to correct. Anthropic and OpenAI have both acknowledged hallucination as an ongoing research challenge.
- Redundant Output: The AI generating long, verbose explanations for simple tasks, or re-stating code you already have. This is especially costly with reasoning-heavy models like Claude Opus or OpenAI's o1.
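To make the split between process and product concrete, here is a minimal sketch that prices each exchange and reports how much of a session's cost went to overhead. The per-million-token prices, the `Message` type, and the sample session are all illustrative assumptions, not any provider's actual rates or log format.

```python
from dataclasses import dataclass

# Hypothetical per-million-token prices in USD; substitute your provider's real rates.
INPUT_PRICE_PER_MTOK = 3.00
OUTPUT_PRICE_PER_MTOK = 15.00


@dataclass
class Message:
    input_tokens: int
    output_tokens: int
    is_overhead: bool  # True for clarification, re-explanation, or corrections


def message_cost(msg: Message) -> float:
    """Cost of one exchange: input and output tokens are billed separately."""
    return (msg.input_tokens * INPUT_PRICE_PER_MTOK
            + msg.output_tokens * OUTPUT_PRICE_PER_MTOK) / 1_000_000


def overhead_share(session: list[Message]) -> float:
    """Fraction of session cost spent on process rather than product."""
    total = sum(message_cost(m) for m in session)
    overhead = sum(message_cost(m) for m in session if m.is_overhead)
    return overhead / total if total else 0.0


# A made-up three-message session for illustration.
session = [
    Message(1_000, 2_000, is_overhead=False),  # first draft of the code
    Message(3_500, 500, is_overhead=True),     # "what do you mean by X?"
    Message(4_500, 2_000, is_overhead=True),   # fix for a hallucinated API
]
print(f"overhead share: {overhead_share(session):.0%}")
```

Tagging each message as overhead or not is a judgment call you would make by hand when reviewing a log; the point is only that once you do, the waste becomes a number rather than a feeling.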

A study by MIT's Computer Science & Artificial Intelligence Laboratory in late 2025 analyzed developer interactions with coding assistants. They found that in free-form sessions, nearly 40% of all tokens exchanged were part of "meta-conversation"—discussing the task, clarifying intent, or correcting course—rather than producing final, usable artifacts. That's like paying a contractor for 10 hours of work, but 4 of those hours were spent arguing about the blueprint.

The financial impact compounds with task complexity. A simple function might take 3-4 messages. A full feature with tests, error handling, and integration could spiral into a 50-message thread. The cost doesn't scale linearly; it explodes because the "meta-conversation" overhead grows faster than the core task.
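One way to see why the cost grows faster than the message count: in a chat-style session, each new turn typically re-sends the conversation so far as input. The sketch below assumes every turn adds a fixed 500 tokens and that there is no prompt caching or context truncation; both are simplifications chosen for illustration.

```python
def cumulative_input_tokens(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens billed when every turn re-sends all prior turns.

    Turn k carries k * tokens_per_turn tokens (history plus the new text),
    so the total is tokens_per_turn * (1 + 2 + ... + turns).
    """
    return tokens_per_turn * turns * (turns + 1) // 2


for turns in (4, 10, 50):
    total = cumulative_input_tokens(turns, tokens_per_turn=500)
    print(f"{turns:>2} messages -> {total:,} input tokens billed")
```

Under these assumptions, going from 4 messages to 50 is 12.5 times the messages but over 100 times the billed input tokens. Real sessions will differ, but the shape of the curve is the point.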

| Prompting Style | Task: "Build a login form" | Estimated Token Waste | Primary Cost Driver |
|---|---|---|---|
| Unstructured / Conversational | "Make a login form. Wait, add validation. Actually, use React Hook Form. Can you make it look like X site? The error messages should be red." | High (35-50%) | Constant re-specification, scope creep handled reactively. |
| Semi-Structured / Checklist | "Build a React login form with: 1) Email & password fields, 2) Validation, 3) Submit handler. Now add styling." | Medium (20-30%) | Sequential tasks without integrated validation; styling is a separate, vague phase. |
| Atomic Skill / Structured Workflow | Execute atomic skills: validate-email-field, style-input-with-tailwind, create-form-submit-handler. Each has pre-defined pass/fail tests. | Low (5-15%) | Zero clarification. AI iterates silently against criteria. Payment is for verified output only. |

For developers looking to get more strategic, our guide on effective AI prompts for developers dives deeper into the technical patterns that reduce waste from the first message. If you're experiencing stalls and loops specifically, our breakdown of the Claude Code feedback loop fallacy explains why more iterations rarely mean better results.

Why Unstructured Prompts Are Burning Your Budget

Iterative debugging in conversational AI sessions with Claude or GPT-4 inflates task costs by 200-300% compared to a single well-specified atomic prompt.

The problem with cost inefficiency is that it's often invisible. You get a result, you move on. The bill comes at the end of the month, a lump sum disconnected from individual tasks. But when you start tracing costs back to specific work sessions, three major budget-burning patterns become clear.

The Compounding Cost of Iteration. This is the biggest offender. In a typical session, you ask for a feature. The AI provides a version. You spot a bug or a missing requirement. You ask for a fix. This loop repeats. Every iteration isn't just the new code; it's the AI re-reading the entire growing conversation history (context) and generating another response. The token count balloons. A 2025 analysis by the AI infrastructure firm Modelfarm found that iterative debugging in conversational AI can increase the cost of a task by 200-300% compared to a correctly specified task done in one shot. You're not just paying for the solution; you're paying for every wrong turn along the way.

The Hidden Tax of Vagueness. Ambiguous prompts generate ambiguous outputs. "Make it faster" leads to one attempt. "Refactor this function to reduce time complexity from O(n²) to O(n log n)" leads to a targeted, correct attempt. The vague prompt forces the AI to guess your intent, often leading to a technically correct but irrelevant optimization. You then have to clarify, starting a new, costly iteration cycle. This vagueness is the default mode for most users, and it silently inflates every interaction.

The Scalability Trap. What feels negligible for a solo developer becomes a real budget problem for a team. If one developer wastes $50 a month on inefficient prompts, it's a footnote. If ten developers do the same, that's $500 a month, or $6,000 a year, spent on conversational overhead. As documented in recent Gartner reports on emerging AI operational practices, enterprises scaling AI-assisted development are finding that unmanaged prompt costs are one of the fastest-growing and least-predictable line items in their cloud budgets. The lack of structure makes forecasting impossible.

The urgency isn't about fear; it's about leverage. The money you're wasting on inefficient prompts is money you're not spending on generating more value. It's capital trapped in an inefficient process. For the solopreneur or small team, this waste directly impacts the bottom line and limits how ambitiously you can use AI. You might hesitate to delegate a complex, valuable task because you're afraid of the unpredictable cost spiral. This hesitation is an opportunity cost on top of the direct financial cost.

This financial reality is forcing a maturation in how we use these tools. It's no longer sufficient to be a good "prompt crafter." You need to be a good "prompt economist." This means designing interactions where the value extracted per token is maximized. The businesses and developers who figure this out first will gain a significant efficiency advantage, running circles around competitors who are still treating their AI budget like a bottomless pit. For those building a business on their own, mastering this efficiency is non-negotiable. Our resource on AI prompts for solopreneurs focuses on this exact mindset for resource-constrained builders.

How to Calculate Your Actual AI ROI and Build Cost-Effective Skills

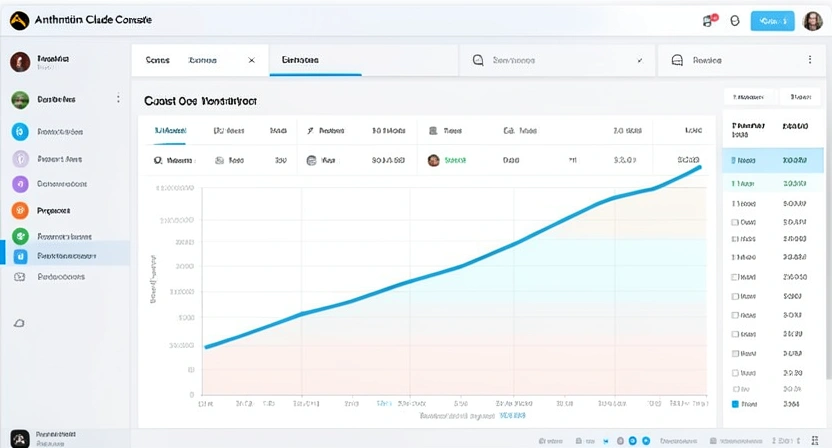

A one-week cost audit using the Anthropic Console or OpenAI dashboard reveals your actual cost-per-task and exposes where atomic skills can cut token spend by 70% or more.

You can't manage what you don't measure. The first step to stopping the budget bleed is to understand it. This isn't about complex accounting; it's about a simple, eye-opening audit of your own work.

Step 1: The One-Week Cost Audit. Pick a recent, non-trivial task you completed with Claude Code. Something like "add user profile editing" or "build a data export function." Now, go into your provider's dashboard (like the Anthropic Console). Most offer session logs or API logs. Find that conversation thread. Note:

- Total Input Tokens: What you spent to explain, clarify, and iterate.
- Total Output Tokens: What the AI spent generating code, explanations, and wrong answers.
- Total Cost: The dollar amount for that session.

Write this down. Now, estimate the business value of that output. Be honest. Was it a $500 feature? A $1000 time-saver? This gives you your first ROI data point: Value / Cost. For many, this initial number is sobering.
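If you want to keep these audit notes somewhere more durable than a sticky note, a small record type is enough. This is a sketch only: the `SessionAudit` name, its fields, and the sample numbers (including the 30,000/15,000 input/output split) are assumptions for illustration, not a format any dashboard exports.

```python
from dataclasses import dataclass


@dataclass
class SessionAudit:
    task: str
    input_tokens: int
    output_tokens: int
    total_cost_usd: float       # as shown in the provider dashboard
    estimated_value_usd: float  # your own honest estimate

    @property
    def roi_multiple(self) -> float:
        """Value / Cost, the first ROI data point from the audit."""
        return self.estimated_value_usd / self.total_cost_usd

    @property
    def cost_per_1k_tokens(self) -> float:
        """Blended cost per 1,000 tokens across input and output."""
        total_tokens = self.input_tokens + self.output_tokens
        return self.total_cost_usd / total_tokens * 1000


# Hypothetical audit entry for one session.
audit = SessionAudit("add user profile editing", 30_000, 15_000, 2.25, 100.0)
print(f"{audit.task}: {audit.roi_multiple:.0f}x ROI, "
      f"${audit.cost_per_1k_tokens:.3f} per 1k tokens")
```

Collect a handful of these over a week and the outliers, the tasks with a low multiple or an unusually high token count, tend to stand out quickly.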

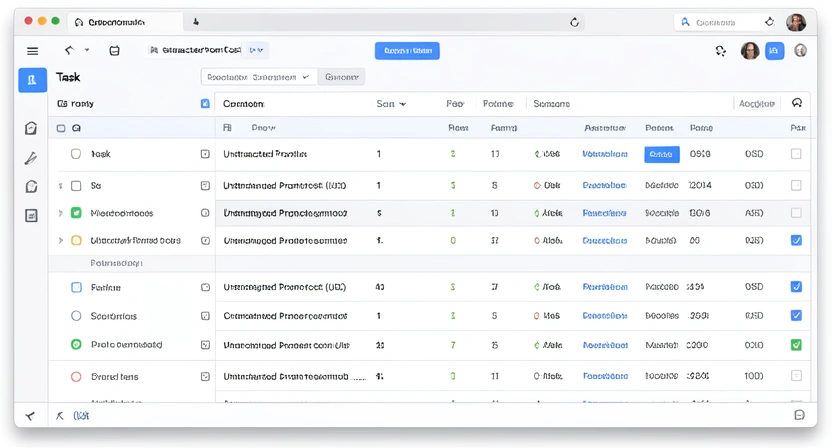

Step 2: Redefine the Task as Atomic Skills. Take the same task and break it down before talking to the AI. Don't think in features; think in verifiable units of work. For "user profile editing," the atomic skills might be:

1. fetch-user-profile-data (Pass: Returns a structured object from the DB/API).
2. create-editable-form-ui (Pass: Renders a form with pre-filled data from skill #1).
3. validate-profile-update-input (Pass: Returns clear error messages for invalid email, bio length, etc.).
4. write-profile-update-api-call (Pass: Successfully PATCHes data to the correct endpoint).

Each skill has a single, clear objective and a binary pass/fail criterion. The AI isn't asked to "build the edit page." It's asked to execute skill #1, then #2, etc., iterating on each until it passes.
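Here is one way a skill and its pass/fail criterion could look in code. This is a sketch under assumptions: the `AtomicSkill` type, the 160-character bio limit, and the simple email pattern are invented for illustration and are not the Ralph Loop generator's format.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class AtomicSkill:
    name: str
    objective: str
    passes: Callable[[object], bool]  # binary pass/fail check on the output


def validate_profile_update_input(data: dict) -> list[str]:
    """Candidate output for one skill: returns a list of error messages."""
    errors = []
    if not re.fullmatch(r"[^@\s]+@[^@\s]+\.[^@\s]+", data.get("email", "")):
        errors.append("Invalid email address.")
    if len(data.get("bio", "")) > 160:  # hypothetical bio limit
        errors.append("Bio must be 160 characters or fewer.")
    return errors


def check_validator(fn) -> bool:
    """Pass only if bad input yields both errors and good input yields none."""
    bad = fn({"email": "not-an-email", "bio": "x" * 200})
    good = fn({"email": "ada@example.com", "bio": "Hello"})
    return len(bad) == 2 and good == []


skill = AtomicSkill(
    name="validate-profile-update-input",
    objective="Return clear error messages for invalid email or bio length.",
    passes=check_validator,
)
print(skill.name, "PASS" if skill.passes(validate_profile_update_input) else "FAIL")
```

The useful property is that the check is written before any AI output exists, so "done" is decided by the test rather than by a conversation.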

Step 3: Build and Price the Atomic Workflow. Using a structured generator (this is exactly what the Ralph Loop Skills Generator is for), you formalize these skills. The key financial benefit is isolation. When the AI works on validate-profile-update-input, it's not re-reading the entire conversation about the UI or the API. Its context is focused solely on validation logic. This drastically reduces token consumption. Furthermore, because the pass/fail test is automated (or immediately obvious), there are no "Is this right?" clarification loops. The AI works silently, iterating, and only moves on when the test passes.
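The iterate-until-pass loop with an isolated context can be sketched in a few lines. Everything here is hypothetical: `run_skill`, the `generate` callable standing in for a model call, and the stub below are illustrations, not the Ralph Loop generator's implementation or any real API.

```python
from typing import Callable, Optional


def run_skill(
    skill_prompt: str,
    generate: Callable[[str], str],
    passes: Callable[[str], bool],
    max_attempts: int = 3,
) -> Optional[str]:
    """Retry one skill until its check passes or attempts run out.

    Each attempt sends only the skill prompt plus the last failure note,
    not the transcript of other skills.
    """
    feedback = ""
    for attempt in range(1, max_attempts + 1):
        output = generate(skill_prompt + feedback)
        if passes(output):
            return output
        feedback = f"\nAttempt {attempt} failed the check. Try again."
    return None


# Stand-in for a model call: fails once, then returns a passing answer.
calls = []


def fake_generate(prompt: str) -> str:
    calls.append(prompt)
    return "def is_valid(e): return '@' in e" if len(calls) > 1 else "TODO"


result = run_skill(
    "Skill: validate-profile-update-input. Output only code.",
    generate=fake_generate,
    passes=lambda code: code.startswith("def "),
)
print(f"passed after {len(calls)} attempts: {result is not None}")
```

Capping attempts with `max_attempts` is a design choice worth keeping in any real version: it puts a ceiling on what a single stubborn skill can cost.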

Now, estimate the cost of this atomic workflow. The input prompt is the skill definition—concise and precise. The output is only the code that passes the test, not paragraphs of explanation. You'll find the token count is a fraction of the unstructured session.

Step 4: Compare and Calculate the New ROI. Plug the new, lower cost into your ROI formula. The value of the output (the working feature) remains the same, but the cost has dropped. Your ROI has increased, often dramatically.

| Metric | Unstructured Session | Atomic Skill Workflow | Impact |
|---|---|---|---|
| Total Tokens | 45,000 | 12,000 | 73% Reduction |
| Direct Cost | $2.25 | $0.60 | $1.65 Saved per task |
| Time to Completion | 45 min (active chat) | 15 min (setup + review) | 67% Time Saved |
| Output Quality | Functional, but may have hidden issues | Each component verified; higher confidence | Risk Reduction |
| ROI (on a $100 value task) | ~44x ($100/$2.25) | ~167x ($100/$0.60) | ~3.75x the ROI (about 275% higher) |
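The comparison is plain arithmetic, and it is worth recomputing with your own numbers. The sketch below uses the example figures from this step; the `compare` helper is a hypothetical name introduced here for illustration.

```python
def compare(value_usd: float, before_cost: float, after_cost: float,
            before_tokens: int, after_tokens: int) -> dict:
    """Token reduction, savings, and ROI multiples for two workflows."""
    roi_before = value_usd / before_cost
    roi_after = value_usd / after_cost
    return {
        "token_reduction_pct": (before_tokens - after_tokens) / before_tokens * 100,
        "saved_usd": before_cost - after_cost,
        "roi_before": roi_before,
        "roi_after": roi_after,
        "roi_ratio": roi_after / roi_before,
    }


result = compare(100.0, 2.25, 0.60, 45_000, 12_000)
for key, value in result.items():
    print(f"{key}: {value:.2f}")
```

Note that because the value is held constant, the ROI ratio is simply the old cost divided by the new cost ($2.25 / $0.60 = 3.75), so any cost reduction you measure translates directly into an ROI multiple.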

This process transforms AI from a creative partner you chat with into a deterministic engine you direct. The financial predictability is transformative. If you know that a "CRUD endpoint" skill package typically costs $0.80-$1.20 and delivers a $50 value, you can delegate those tasks without financial anxiety. This is how you scale.

The tools are evolving to support this. Beyond our own generator, platforms like Windmill and n8n are adding low-code AI workflow steps, though they often lack the developer-centric focus on atomicity and testing. The principle remains: structure precedes scale. For a comprehensive collection of starting points, our hub of AI prompts provides templates that are designed with this efficient, structured philosophy in mind.

Proven Strategies to Lock in High ROI and Scale Predictably

Reusable skill libraries, per-feature prompt budgets, and decoupling exploration (Haiku) from execution (Claude Sonnet or Opus) are the three pillars of predictable AI spend.

Once you've internalized the atomic skill approach and seen the ROI jump, the next step is to institutionalize this efficiency. This turns cost savings into a permanent competitive advantage and unlocks reliable scaling.

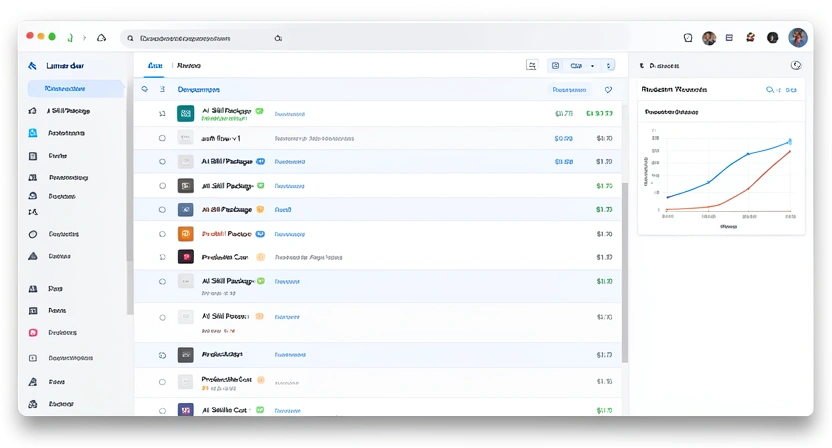

Strategy 1: Create a Reusable Skill Library. The highest ROI skills are the ones you use more than once. Don't rebuild a user authentication flow from scratch for every project. Build or acquire a package of atomic skills for oauth-setup, session-management, and password-reset. The cost to create this library is an investment. The first project might bear the full cost, but the second, third, and tenth projects use it at near-zero marginal cost, so your ROI on the library keeps climbing with every reuse. Treat these skills like internal npm packages. Version them, improve them, and share them across your team. This is where the real financial leverage is found.
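The amortization argument is easy to check with a few lines. The figures below ($12 to build the package, $0.50 each time it is reused) are made up for illustration; plug in your own.

```python
def amortized_cost(build_cost: float, reuse_cost: float, projects: int) -> float:
    """Average cost per project when the first build is reused afterwards."""
    if projects < 1:
        raise ValueError("projects must be at least 1")
    return (build_cost + reuse_cost * (projects - 1)) / projects


# Hypothetical figures: $12 to build the auth skill package, $0.50 per reuse.
for n in (1, 3, 10):
    print(f"{n:>2} projects -> ${amortized_cost(12.0, 0.50, n):.2f} per project")
```

The per-project cost falls toward the reuse cost rather than to zero, which is a reason to keep skills small enough that adapting them to a new project stays cheap.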

Strategy 2: Implement a "Prompt Budget" per Feature. This flips the script on financial management. Instead of getting a bill and wondering what happened, you start with a budget. In your project planning, break a feature down into its atomic skills. Based on historical data (which you now have from your audit), assign a conservative token/cost estimate to each skill. Sum them. That's your prompt budget for the feature. This does two things: it forces precise scoping before any cost is incurred, and it gives you a clear metric for success. Coming in under budget becomes a target. This practice, often called "AI FinOps" in enterprise circles, is how large companies like Intuit and IBM are beginning to govern their massive AI expenditures, as noted in their recent technical blog posts on scalable AI adoption. For teams using Claude Code in autonomous mode, our article on the AI overhead trap digs into where developer time gets silently consumed.
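A prompt budget can start as nothing more than a list of per-skill estimates that you sum before work begins and compare against afterwards. This is a minimal sketch; the `SkillEstimate` type and all dollar figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SkillEstimate:
    name: str
    estimated_usd: float  # conservative figure from your audit history
    actual_usd: float = 0.0


def feature_budget(skills: list[SkillEstimate]) -> float:
    """The prompt budget for a feature is the sum of its skill estimates."""
    return sum(s.estimated_usd for s in skills)


def over_budget(skills: list[SkillEstimate]) -> list[str]:
    """Names of skills whose actual spend exceeded the estimate."""
    return [s.name for s in skills if s.actual_usd > s.estimated_usd]


# Illustrative numbers for the profile-editing feature.
skills = [
    SkillEstimate("fetch-user-profile-data", 0.20, actual_usd=0.15),
    SkillEstimate("create-editable-form-ui", 0.40, actual_usd=0.55),
    SkillEstimate("validate-profile-update-input", 0.25, actual_usd=0.20),
]
spent = sum(s.actual_usd for s in skills)
print(f"budget ${feature_budget(skills):.2f}, spent ${spent:.2f}")
print("over estimate:", over_budget(skills))
```

Tracking overruns per skill rather than per feature tells you which estimate to revise, or which skill definition is still too vague.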

Strategy 3: Decouple Exploration from Execution. Sometimes you need to explore. You're researching a new library or brainstorming architecture. That's fine. The key is to ring-fence this activity and not let its costs bleed into production work. Use a separate, lower-cost model (like Anthropic's Claude Haiku or OpenAI's GPT-4o mini) for open-ended exploration and brainstorming. Once you have a viable path, then formalize it into atomic skills for the more capable (and expensive) model like Claude Sonnet or Claude Opus to execute. Tools like Cursor and GitHub Copilot also support model-switching workflows, letting you choose the right engine per phase. Pay for creativity with cheap tokens, and pay for precision with expensive ones. This hybrid model acknowledges that not all AI use is the same and optimizes cost for each phase.
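In code, the ring-fencing can be as simple as a routing rule. The sketch below uses placeholder tier labels rather than real API model identifiers, and the rule that unspecified "execution" work falls back to the cheap tier is an assumption added here to illustrate the idea.

```python
from enum import Enum


class Phase(Enum):
    EXPLORATION = "exploration"
    EXECUTION = "execution"


# Placeholder tier labels, not real API model identifiers.
MODEL_FOR_PHASE = {
    Phase.EXPLORATION: "small-cheap-model",
    Phase.EXECUTION: "large-capable-model",
}


def pick_model(phase: Phase, has_pass_fail_criteria: bool) -> str:
    """Route to the expensive tier only for work that is fully specified."""
    if phase is Phase.EXECUTION and not has_pass_fail_criteria:
        # Unspecified work is still exploration, whatever it is called.
        return MODEL_FOR_PHASE[Phase.EXPLORATION]
    return MODEL_FOR_PHASE[phase]


print(pick_model(Phase.EXPLORATION, has_pass_fail_criteria=False))
print(pick_model(Phase.EXECUTION, has_pass_fail_criteria=True))
```

Gating the expensive tier on the presence of pass/fail criteria keeps half-formed ideas from quietly running up the bill on the most capable model.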

My own experience as a lead developer on a distributed team showed this. We had a junior dev who was brilliant but whose AI costs were 3x higher than anyone else's. The work was good, but the path was chaotic. We didn't scold them; we gave them a library of pre-built atomic skills for common tasks (API calls, UI components, data transformers). Their costs normalized to the team average within a week, and their output velocity increased because they were no longer stuck in clarification loops. The structure set them free.

The ultimate goal is to reach a point of predictable, high-return AI operations. You should be able to look at a project roadmap and estimate its AI-assisted development cost with the same confidence you estimate server hosting fees. This predictability is what turns AI from a scary, variable expense into a trusted, scalable workforce. It allows solopreneurs to punch far above their weight and enables teams to deploy AI across their workflow without CFO panic.

Got Questions About AI Budgets and ROI? We've Got Answers

How often should I audit my AI spending?

Start with a monthly review for the first quarter. Once you've implemented atomic skills and have a baseline, you can move to quarterly audits unless you're rapidly scaling or changing your workflow. The key is to spot trends, not just totals. Look for projects or team members with anomalously high cost-per-task ratios; that's where you'll find the most impactful opportunities for optimization.

What if my tasks are too creative or open-ended for atomic skills?

Not everything needs to be atomic. The framework is a tool for efficiency, not a straitjacket. Use atomic skills for the executable components of a creative project. For example, writing a marketing email is creative. But "extract key value props from the product doc," "generate 5 subject line variants," and "check grammar and tone" can be atomic skills. You guide the creative direction, then use structured skills to efficiently produce the concrete pieces.

Can I use this approach with other AI models besides Claude Code?

Absolutely. The principle of atomic tasks with pass/fail criteria is model-agnostic. It works with OpenAI's GPT-4 API, Google's Gemini, GitHub Copilot, or Cursor: any model or tool that can follow instructions and iterate. The cost savings might vary because models have different pricing and context-window behaviors, but the fundamental reduction in wasted tokens from clarification and vagueness will apply universally. The Ralph Loop generator's output is primarily structured English instructions that can be adapted. For a direct comparison of how Claude and ChatGPT handle structured tasks differently, see our Claude vs ChatGPT breakdown.

What's the biggest mistake people make when trying to control AI costs?

The biggest mistake is focusing only on choosing a cheaper model. While model choice matters, it's a blunt instrument. A 20% cheaper model used inefficiently will still waste more money than a 20% more expensive model used with precision. The granular, high-leverage action is improving the efficiency of your prompts and workflows. Fix the process first, then optimize the tooling cost. Cutting corners on prompt quality to save a few input tokens is a false economy if it leads to expensive, wrong outputs.

Ready to turn your AI spend into a measurable investment?

Ralph Loop Skills Generator helps you build the atomic, testable skills that eliminate wasteful prompting and lock in high ROI. Stop guessing and start measuring. Generate your first cost-effective skill today.
