Stop Asking Your AI to 'Think' — The 3-Step Framework for Actionable Claude Code Output

Tired of Claude Code's endless analysis? Stop asking it to 'think' and start commanding action. Our 3-step framework turns vague prompts into structured, executable workflows with clear outcomes.

You’ve just spent 15 minutes crafting what you thought was the perfect prompt for Claude Code. You need to refactor a legacy authentication module, and you asked Claude to "think through the best approach." What you got back wasn't code. It wasn't a plan. It was a 1,500-word treatise on authentication paradigms, a historical overview of OAuth versions, and three possible architectural directions—each with a paragraph of pros and cons. You're left staring at the screen, more confused than when you started, with zero concrete steps to take.

This isn't a bug in Claude; it's a fundamental mismatch in how we communicate with it. We're using human collaboration language ("think," "analyze," "consider") with a system optimized for deterministic task execution. The result is what developers on forums are now calling "analysis paralysis"—AI-generated deep thought that goes nowhere.

The shift needed isn't in the AI, but in our prompts. We need to stop asking for open-ended thinking and start designing for actionable output. This article presents a concrete, three-step framework to reframe any complex problem into a structured workflow of atomic tasks. It’s the difference between getting a philosophical debate and getting a completed feature.

Understanding the Actionability Gap in AI Coding

Stanford HAI (2025) found that structured, stepwise prompts in Claude and GPT-4 completed tasks 70% faster than conversational prompts; GitHub Copilot and Cursor users report the same pattern.

When you tell a human colleague to "think about a problem," you expect a period of reflection followed by a proposed course of action. You're leveraging their general intelligence, context, and judgment. Large Language Models (LLMs) like Claude simulate this process by predicting the most statistically likely continuation of your request. "Think about X" often leads to a predictive chain of analytical text, because that's a common pattern in the training data for such prompts.

Claude Code, however, is built for execution. Its strength lies in breaking down instructions, writing code, running it, interpreting results, and iterating. The "actionability gap" occurs when our prompts trigger the model's general-purpose analytical mode instead of that write-run-check loop.

The core issue is prompt vagueness. Vague prompts yield vague, discursive outputs. Specific, structured prompts yield specific, actionable outputs. It's that simple, and that difficult. Our natural language is steeped in ambiguity; programming, at its heart, is about eliminating it.

Let's look at the difference through a comparison table. The left column shows the vague, "think-first" prompt pattern many of us fall into. The right column shows the "action-first" pattern that aligns with Claude Code's operational strengths.

| "Think-First" Prompt (Vague) | "Action-First" Prompt (Structured) | Likely Output Difference |
|---|---|---|
| "Think about how to implement user login." | "Create a /api/login POST endpoint. It should accept email and password, validate against the users table, and return a JWT token. Write the Node.js/Express code and a test with Supertest." | Think-First: An essay on authentication methods (sessions vs. JWT, bcrypt vs. Argon2). Action-First: A complete, runnable login.js file with route handler, validation, and a login.test.js file. |
| "Analyze this data for insights." | "Load the sales.csv file. Calculate total revenue per product category for Q1 2026. Create a bar chart using matplotlib. Output the chart as a PNG and a DataFrame of the totals." | Think-First: A text description of possible metrics (revenue, growth, seasonality). Action-First: A Python script that executes, prints the DataFrame, and saves sales_chart.png. |
| "What's wrong with my website's performance?" | "Audit the URL https://my-site.com. Use Lighthouse CI via the command line to generate a performance report. List any opportunities or diagnostics with a score below 90. Suggest one concrete code change for the top issue." | Think-First: General advice about image optimization, caching, and JavaScript bundles. Action-First: A bash script that runs lhci collect, parses the JSON output, and outputs a specific finding like "Serve images in next-gen formats for hero.jpg." |

This isn't to say analysis is worthless. The "think-first" output can be useful in a true blue-sky planning phase. But for the majority of developer tasks—where the goal is a working feature, a fixed bug, or a processed dataset—starting with analysis is an inefficient detour. You need to go straight to execution. This is where our framework comes in, a method I've refined over hundreds of hours of testing with Claude Code for everything from deploying microservices to cleaning messy datasets.

Why "Thinking" Prompts Are Sabotaging Your Productivity

GitHub Copilot's 2025 survey showed a 40% increase in requests for direct code output over explanatory text; Anthropic's Claude and OpenAI's GPT-4 users voice the same demand.

The temptation to ask Claude to "think" is understandable. It feels collaborative, thorough, and smart. But in practice, this approach creates three concrete problems that drain time and focus.

First, it creates decision fatigue, not clarity. When Claude presents you with three equally reasoned options for implementing a feature, the cognitive burden of choosing shifts back to you. You asked the AI to help with a decision, and it responded by multiplying the decisions you need to make. A post on the Anthropic developer forum from February 2026 highlighted this: a user requested a strategy for state management in a new React app. Claude responded with a brilliant comparison of Context, Redux, Zustand, and Recoil. The user commented, "It was so comprehensive I felt paralyzed. I spent an hour reading about libraries instead of writing my app."

Second, it divorces reasoning from execution. The AI's analysis exists in a vacuum. It hasn't tested its assumptions, run the code, or hit the inevitable errors. Its "perfect" theoretical approach might collapse on first contact with your codebase's quirks, your package versions, or your data's shape. The real work, the debugging and integration, is still entirely on you. You get the lecture, but you have to do the lab work yourself. This is the opposite of leveraging automation.

Third, it's untestable and unverifiable. How do you know if the AI's "thinking" is correct or useful? You can't run a unit test on a paragraph of analysis. You can't assert that its philosophical stance on microservices meets acceptance criteria. There's no pass/fail condition for a brainstorm. This lack of a clear success metric means you can't effectively use Claude's most powerful feature: its ability to iterate autonomously until a condition is met. Without a binary test, it can't self-correct.

The backlash is visible in the community. Beyond the forum threads, metrics from GitHub Copilot's 2025 user survey (a useful proxy for AI coding tool sentiment) showed a 40% increase in user requests for "more direct code generation and less explanatory text" compared to the previous year. Developers are voting with their feedback: they want results, not reasoning.

This frustration aligns with a deliberate shift from AI providers. Anthropic's recent Claude 3.5 System Card update emphasized improvements in "task decomposition" and "goal-directed execution," signaling a move away from the model as a chatty oracle toward a reliable problem-solver. The tools are evolving to support action-first workflows; our prompts need to catch up. Learning to craft these prompts is the single highest-leverage skill for using Claude Code effectively, turning it from a curious student into a senior engineer who gets things done. For a deeper dive into the fundamentals of this communication, our guide on how to write prompts for Claude breaks down the syntax of effective instructions.

The 3-Step Framework for Actionable Claude Code Output

Define a single atomic deliverable, script its verification, then chain iteratively -- this three-step loop works identically in Claude Code, Cursor, GPT-4, and GitHub Copilot.

The framework is built on a simple principle: Define success as a series of verifiable, executable tasks, not a state of understanding. You are not the AI's teacher grading an essay. You are a project manager defining sprint tickets. This mindset change is everything.

The three steps are: 1. Define the Atomic Deliverable, 2. Script the Verification, 3. Chain with Iteration Logic. Let's walk through each step with a concrete example. Suppose you need to create an API endpoint that fetches weather data, caches it for an hour, and returns it. The old way would be: "Claude, think about how to build a cached weather API." Let's see the new way.

Step 1: Define the Atomic Deliverable

Don't describe the problem; specify the first, smallest piece of working output. An "atomic" task is one that has a single, clear purpose and can be verified with a simple check. It should be so small that it feels almost trivial.

* Bad (Vague): "Build a weather API with caching."
* Good (Atomic): "Create a new Node.js file called weather.js. It should export an Express router with a single GET endpoint at /api/weather/:city. For now, the endpoint should just return a JSON object { "city": cityParam, "temp": 72 } with a 200 status. Do not add caching or real data yet."

This task is atomic because the success criteria are binary: does the file exist with an endpoint that returns that specific JSON structure? Yes or no. By stripping away the complexity (caching, real API calls), you give Claude a solvable puzzle. It will write roughly 15 lines of code for a basic endpoint. You can run it, test it with curl, and confirm it passes. This builds momentum and establishes a working foundation.
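The prompt above targets Node.js and Express. As a language-neutral illustration of how small an atomic deliverable can be, here is a minimal sketch of the same stub in Python using only the standard library's WSGI conventions. The route /api/weather/<city> and the hard-coded temperature of 72 come from the prompt; the weather_app name and the WSGI framing are assumptions made for this example.

```python
import json

ROUTE_PREFIX = "/api/weather/"

def weather_app(environ, start_response):
    """Hypothetical stub: GET /api/weather/<city> returns {"city": <city>, "temp": 72}."""
    path = environ.get("PATH_INFO", "")
    city = path[len(ROUTE_PREFIX):] if path.startswith(ROUTE_PREFIX) else ""
    if environ.get("REQUEST_METHOD") == "GET" and city and "/" not in city:
        status, payload = "200 OK", {"city": city, "temp": 72}  # fixed placeholder data
    else:
        status, payload = "404 Not Found", {"error": "not found"}
    body = json.dumps(payload).encode("utf-8")
    start_response(status, [("Content-Type", "application/json"),
                            ("Content-Length", str(len(body)))])
    return [body]
```

To try it by hand, serve it with wsgiref.simple_server.make_server("127.0.0.1", 8000, weather_app).serve_forever() and request http://127.0.0.1:8000/api/weather/London with curl. Nothing else (real data, caching) is attempted yet, which is the point.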

Step 2: Script the Verification

This is the most overlooked and most powerful step. You must tell Claude how to verify the task itself. Don't just say "test it." Provide the exact shell command or code snippet that constitutes the test.

* Instruction Addendum: "After writing the code, run this verification command: node -e \"const app = require('./weather.js'); //... mock test\"" (This is a simplified example. In practice, you'd provide a full test script.)

* Better Yet - Use a Testing Framework: "Now, write a Jest test file weather.test.js. The test should make a request to /api/weather/London and assert the response has status 200 and the correct JSON structure. The pass condition is that npm test runs successfully."

By embedding the verification script, you do two things. First, you force the implementation to be testable from the start. Second, you give Claude a concrete, in-context goal: make this script pass. This transforms the work from "writing code that might work" to "solving the puzzle of making this test pass." The difference is profound. For developers looking to apply this to a wider range of coding tasks, our collection of AI prompts for developers includes many examples of this verification-first approach.
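To make "make this script pass" concrete, here is a sketch of a self-contained verification script in Python. It serves the app on a free local port, requests /api/weather/London over real HTTP (the role Supertest plays in the Jest version), and reports success only if the status code and JSON body match the Step 1 contract. The stub is repeated so the file runs on its own; verify and QuietHandler are illustrative names, not part of any framework.

```python
import json
import sys
import threading
import urllib.error
import urllib.request
from wsgiref.simple_server import WSGIRequestHandler, make_server

def weather_app(environ, start_response):
    # Hypothetical stub under test: same contract as the Step 1 deliverable.
    prefix = "/api/weather/"
    path = environ.get("PATH_INFO", "")
    city = path[len(prefix):] if path.startswith(prefix) else ""
    status = "200 OK" if city else "404 Not Found"
    body = json.dumps({"city": city, "temp": 72} if city else {"error": "not found"}).encode()
    start_response(status, [("Content-Type", "application/json")])
    return [body]

class QuietHandler(WSGIRequestHandler):
    def log_message(self, format, *args):
        pass  # suppress per-request logging so the output is just PASS/FAIL

def verify(app) -> bool:
    """Serve `app` on an ephemeral port and check the /api/weather/London contract."""
    server = make_server("127.0.0.1", 0, app, handler_class=QuietHandler)
    port = server.server_address[1]
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        url = f"http://127.0.0.1:{port}/api/weather/London"
        with urllib.request.urlopen(url, timeout=5) as resp:
            status, payload = resp.status, json.loads(resp.read())
    except (urllib.error.HTTPError, ValueError):
        return False  # non-2xx status or a body that is not valid JSON
    finally:
        server.shutdown()
        server.server_close()
    return status == 200 and payload == {"city": "London", "temp": 72}

if __name__ == "__main__":
    ok = verify(weather_app)
    print("PASS" if ok else "FAIL")
    if not ok:
        sys.exit(1)  # a non-zero exit status tells the agent the check failed
```

The exit status is the whole interface: Claude (or CI) can rerun the script after every edit and stop only when it exits cleanly.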

Step 3: Chain with Iteration Logic

Now, you add complexity iteratively, using the success of the previous task as the gate to the next. You phrase subsequent tasks as conditional extensions.

* Next Task: "Now that the basic endpoint works (verified by our test), modify weather.js to fetch real data from the OpenWeatherMap API. You'll need to sign up for a free API key at OpenWeatherMap. Store the key in a .env file. The endpoint should now return real temperature data. Update the test in weather.test.js to mock the API call using jest.mock() so it still passes without hitting the real network."

* Final Task: "Finally, add a caching layer. Use the node-cache package. The behavior should be: when a city is requested, check the cache. If cached data exists and is less than 3600 seconds old, return it. If not, fetch from OpenWeatherMap, store in cache, and return. Write a new test that verifies the cache hit/miss behavior."

This chaining is crucial. Each new task assumes the previous one is complete and functional. It turns a monolithic, daunting project ("build a cached weather API") into a series of small, confident steps. Claude can execute each step, run the verification you provided, and know immediately if it succeeded or needs to debug.
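The final task's cache rule (serve data younger than 3600 seconds, otherwise fetch, store, and return) is easy to get wrong at the boundary, which is why it gets its own test. Below is a minimal Python sketch of that cache-aside logic. It is not node-cache: the fetch function and the clock are injected (assumptions made for testability), which plays the same role as mocking the OpenWeatherMap call with jest.mock().

```python
import time
from typing import Callable, Dict, Tuple

class WeatherCache:
    """Cache-aside wrapper: serve entries younger than ttl_seconds, otherwise refetch."""

    def __init__(self, fetch: Callable[[str], dict], ttl_seconds: float = 3600,
                 clock: Callable[[], float] = time.monotonic):
        self._fetch = fetch            # hypothetical stand-in for the OpenWeatherMap call
        self._ttl = ttl_seconds
        self._clock = clock            # injectable so tests can move time forward
        self._entries: Dict[str, Tuple[float, dict]] = {}

    def get(self, city: str) -> dict:
        now = self._clock()
        entry = self._entries.get(city)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]            # cache hit: stored less than ttl_seconds ago
        data = self._fetch(city)       # cache miss or stale entry: fetch fresh data
        self._entries[city] = (now, data)
        return data
```

A hit/miss test then only needs a counting fake fetch and a clock it can advance: one request at t=0, one at t=3599 (hit), one at t=3600 (miss).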

This framework mirrors professional software development: write a spec (atomic deliverable), write a test (verification), implement, then extend (chain). It puts Claude in the role of a continuous integration system, constantly checking its work against your criteria. The Ralph Loop Skills Generator is built specifically to formalize this process, allowing you to define these atomic skills with pass/fail criteria so Claude can loop until everything passes.

Proven Strategies to Operationalize the Framework

Shell-first prompting, API-contract TDD, and external verification oracles (ESLint, Lighthouse) turn Claude, GPT-4, and Cursor into deterministic executors instead of open-ended brainstorm partners.

Understanding the framework is one thing; making it a habit is another. These strategies will help you integrate this action-first mindset into your daily workflow with Claude Code.

Strategy 1: Start with the Shell Command

Before you write a prompt, ask yourself: "What is the first command I would run in the terminal to start this work?" Your prompt should be to generate the script or code that makes that command succeed.

Example Goal: I need to clean a dataset of customer emails.

First Shell Command: python clean_emails.py input.csv output.csv

Your Prompt: "Write a Python script clean_emails.py. It should read a CSV file from the first command-line argument, remove any rows where the email column doesn't match a valid email regex, remove duplicate emails, and write the cleaned data to the file specified in the second argument. After writing the script, run this verification: python clean_emails.py sample_input.csv sample_output.csv and check that sample_output.csv has 10 fewer rows."

This grounds the task in reality from the very first word.
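Since Strategy 1's example is already a Python script, here is one way clean_emails.py could look. It is a sketch under stated assumptions: the address column is named email, validity is judged by a deliberately simple regular expression (not full RFC 5322 validation), and duplicates are compared case-insensitively with the first occurrence kept.

```python
import csv
import re
import sys
from typing import Iterable, Iterator

# Deliberately simple validity check (an assumption): local@domain.tld, no spaces.
EMAIL_RE = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")

def clean_rows(rows: Iterable[dict], column: str = "email") -> Iterator[dict]:
    """Yield rows with a valid email, keeping only the first row for each address."""
    seen = set()
    for row in rows:
        email = (row.get(column) or "").strip()
        if not EMAIL_RE.fullmatch(email):
            continue  # drop invalid or missing addresses
        key = email.lower()  # compare addresses case-insensitively (assumption)
        if key in seen:
            continue  # drop duplicates
        seen.add(key)
        yield row

def main(input_path: str, output_path: str) -> int:
    """Clean input_path into output_path and return the number of rows written."""
    with open(input_path, newline="", encoding="utf-8") as src:
        reader = csv.DictReader(src)
        fieldnames = reader.fieldnames or []
        kept = list(clean_rows(reader))
    with open(output_path, "w", newline="", encoding="utf-8") as dst:
        writer = csv.DictWriter(dst, fieldnames=fieldnames)
        writer.writeheader()
        writer.writerows(kept)
    return len(kept)

if __name__ == "__main__":
    if len(sys.argv) == 3:
        print(f"wrote {main(sys.argv[1], sys.argv[2])} rows")
    else:
        print("usage: python clean_emails.py INPUT.csv OUTPUT.csv", file=sys.stderr)
```

Keeping the filtering in clean_rows, separate from file handling, lets the verification check the logic directly as well as through the shell command.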

Strategy 2: Use the "API Contract" Method

For any function, module, or endpoint, define its contract first. Write the verification (test) that defines correct behavior before asking for the implementation. Give Claude the test and say "write the implementation that makes this pass."

Your Prompt: "Here is a Jest test for a formatCurrency(amount, currencyCode) function. Write the function in utils/currency.js that passes all these tests." Then paste the test code. This is Test-Driven Development (TDD) enforced at the prompt level, and it yields remarkably robust code because the objective is unambiguous.
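The same contract-first idea, sketched in Python rather than Jest: the expected behavior is written down as data before any implementation exists, and format_currency is then written to satisfy it. The specific cases (symbol placement, thousands separators, half-up rounding, the three supported currencies) are a hypothetical contract chosen for illustration, not a standard.

```python
from decimal import ROUND_HALF_UP, Decimal

# 1. The contract, written first (hypothetical cases for illustration).
CONTRACT = [
    ((1234.5, "USD"), "$1,234.50"),
    ((0, "GBP"), "£0.00"),
    ((-5, "USD"), "-$5.00"),
    ((1000000, "EUR"), "€1,000,000.00"),
    ((2.675, "USD"), "$2.68"),  # half-up rounding of the value as written
]

def check_contract(fn) -> list:
    """Return failure messages; an empty list means the implementation passes."""
    failures = []
    for args, expected in CONTRACT:
        try:
            got = fn(*args)
        except Exception as exc:
            got = f"raised {exc!r}"
        if got != expected:
            failures.append(f"{fn.__name__}{args}: expected {expected!r}, got {got!r}")
    return failures

# 2. The implementation, written afterwards to make check_contract return [].
SYMBOLS = {"USD": "$", "EUR": "€", "GBP": "£"}

def format_currency(amount, currency_code: str) -> str:
    if currency_code not in SYMBOLS:
        raise ValueError(f"unsupported currency: {currency_code}")
    # str() first so 2.675 is treated as written, not as its binary approximation.
    value = Decimal(str(amount)).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
    sign = "-" if value < 0 else ""
    return f"{sign}{SYMBOLS[currency_code]}{abs(value):,.2f}"
```

Because check_contract reports every mismatch by input, an agent can read its output and iterate until the list is empty.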

Strategy 3: Leverage External Tools as Verification Oracles

Claude doesn't have to be the only judge of success. Instruct it to use linters, compilers, or validators as external authorities.

Your Prompt: "Refactor the calculateInvoice function in invoice.js to be more readable. The pass/fail criteria are: 1. The existing unit tests still pass. 2. When you run eslint invoice.js --fix, there are no errors. 3. The function's cyclomatic complexity, as measured by running complexity-report invoice.js, is below 5."

This turns subjective goals ("more readable") into objective, automatable checks. Claude can run ESLint itself in its environment and iterate until the output is clean. For a complete walkthrough of this pattern applied to debugging loops specifically, see our autonomous debugging with atomic tasks guide.
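One way to make external tools the judge is a small gate runner: each check is just a command, and it passes only when that command exits with status 0. This Python sketch assumes npm and a locally installed ESLint are on the PATH; ESLint exits non-zero when it reports errors, so running it without --fix works as a pure check. The complexity criterion is left out because the right command depends on the analyzer your project uses; add it as another (name, argv) pair.

```python
import subprocess
from typing import List, Sequence, Tuple

Gate = Tuple[str, List[str]]  # (human-readable name, command argv)

# Example gates mirroring the refactoring prompt; assumes npm and a local ESLint install.
EXAMPLE_GATES: List[Gate] = [
    ("unit tests still pass", ["npm", "test"]),
    ("eslint reports no errors", ["npx", "eslint", "invoice.js"]),
]

def run_gates(gates: Sequence[Gate]) -> bool:
    """Run every gate and print PASS/FAIL; True only if all commands exit with status 0."""
    all_passed = True
    for name, argv in gates:
        try:
            result = subprocess.run(argv, capture_output=True, text=True)
            passed = result.returncode == 0
            detail = (result.stdout + result.stderr).strip()
        except FileNotFoundError:
            passed, detail = False, f"command not found: {argv[0]}"
        print(f"[{'PASS' if passed else 'FAIL'}] {name}")
        if not passed and detail:
            print(detail)  # surface the tool's own output so the failure can be fixed
        all_passed = all_passed and passed
    return all_passed

def main() -> int:
    return 0 if run_gates(EXAMPLE_GATES) else 1
```

Calling sys.exit(main()) under an if __name__ == "__main__": guard gives the agent a single exit status to loop on until every gate passes.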

Strategy 4: Implement the "Scaffold -> Fill" Pattern for Large Projects

For very large tasks, use Claude in two distinct phases, resisting the urge to combine them.

* Phase 1 - Scaffold: "Generate the complete file and folder structure for a Next.js 15 blog application with App Router. Include all necessary directories (app/, components/, lib/), placeholder files (app/page.tsx, app/layout.tsx), and a package.json with required dependencies. Do not write implementation code yet. Output as a tree."

* Phase 2 - Fill: Now, work through each file as an atomic task. "Task 1: Implement the lib/posts.ts utility with getAllPosts() and getPostBySlug() functions using placeholder markdown data."

This prevents the overwhelming "blank canvas" problem and provides a clear map for the atomic tasks to follow. You can find more patterns like this for structuring complex projects in our hub for AI prompts, which organizes tactics by use case.
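The scaffold phase can also be made checkable. The sketch below (Python, with a hypothetical subset of the Next.js paths from Phase 1) creates empty placeholders without overwriting anything, then derives the Phase 2 checklist from whichever files are still empty. Treating "file is non-empty" as "task started" is a simplifying assumption, and an empty package.json is only a placeholder; each Fill task still needs its own verification.

```python
from pathlib import Path
from typing import Iterable, List

# Hypothetical subset of the Phase 1 tree; entries ending in "/" are directories.
SCAFFOLD = [
    "app/",
    "app/page.tsx",
    "app/layout.tsx",
    "components/",
    "lib/",
    "lib/posts.ts",
    "package.json",
]

def materialize(root: Path, entries: Iterable[str]) -> List[Path]:
    """Create missing directories and empty placeholder files; never overwrite files."""
    created = []
    for entry in entries:
        target = root / entry
        if entry.endswith("/"):
            target.mkdir(parents=True, exist_ok=True)
        elif not target.exists():
            target.parent.mkdir(parents=True, exist_ok=True)
            target.touch()
            created.append(target)
    return created

def pending_fill_tasks(root: Path, entries: Iterable[str]) -> List[str]:
    """Phase 2 checklist: placeholder files that are still empty."""
    return [e for e in entries
            if not e.endswith("/") and (root / e).stat().st_size == 0]
```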

The common thread in all these strategies is the removal of ambiguity. You are progressively eliminating degrees of freedom for the AI, channeling its vast capability down a single, productive path. The result isn't a less creative AI; it's a more useful one.

Putting the Framework to Work: A Real-World Coding Session

In a real build session, Anthropic's Claude Code completed a three-step SQL-to-email feature with zero analysis essays, matching the execution-first results OpenAI and GitHub Copilot teams now target.

Let's see the entire framework in action with a realistic scenario. You're building a new feature: a "weekly report" email that gets sent to users every Monday. You have a users table and an orders table. The email should include the user's name, their order count from the past week, and their total spend.

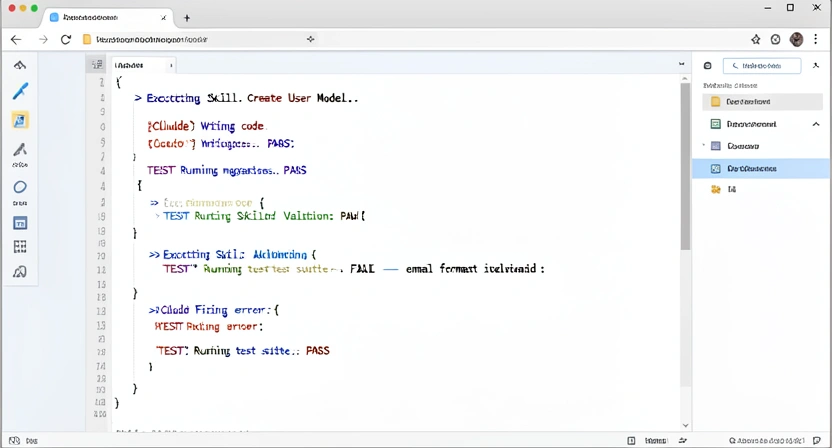

1. Define the Atomic Deliverable: "Write a SQL query and save it in a file called get_weekly_stats.sql. The query should accept one parameter, user_id. It should return the user's first_name, the count of their orders from the last 7 days (excluding today), and the sum of the total_amount for those orders. Base it on a users table (id, first_name, email) and an orders table (id, user_id, total_amount, created_at). Write a verification command that runs this query against a test database and outputs the results."

2. Script the Verification: Claude writes the query. It also, as instructed, writes a verify.sh script that uses psql to create a tiny test schema, insert fake data, run the query, and echo the result. I run it. It passes. Atomic task complete.

3. Chain with Iteration Logic: Now we build the Node.js script that uses this query.
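For reference, the deliverable verified in steps 1 and 2 can be approximated without a Postgres server. This Python sketch uses an in-memory SQLite database in place of psql, so the date arithmetic uses SQLite's date() function rather than PostgreSQL syntax, and "today" is passed in as a parameter (an assumption) so the check is repeatable. The window covers the seven days before today and excludes today itself; a LEFT JOIN keeps users with no orders in the results.

```python
import sqlite3
from datetime import date, timedelta

# SQLite approximation of get_weekly_stats.sql; the session's version targets PostgreSQL.
WEEKLY_STATS_SQL = """
SELECT u.first_name,
       COUNT(o.id)                      AS order_count,
       COALESCE(SUM(o.total_amount), 0) AS total_spend
FROM users AS u
LEFT JOIN orders AS o
       ON o.user_id = u.id
      AND date(o.created_at) >= date(:today, '-7 days')
      AND date(o.created_at) <  date(:today)
WHERE u.id = :user_id
GROUP BY u.id, u.first_name
"""

def weekly_stats(con, user_id, today):
    """Return (first_name, order_count, total_spend), or None if the user does not exist."""
    params = {"user_id": user_id, "today": today.isoformat()}
    return con.execute(WEEKLY_STATS_SQL, params).fetchone()

def build_test_db(today):
    """Tiny fixture using the users/orders columns named in the prompt."""
    con = sqlite3.connect(":memory:")
    con.executescript("""
        CREATE TABLE users  (id INTEGER PRIMARY KEY, first_name TEXT, email TEXT);
        CREATE TABLE orders (id INTEGER PRIMARY KEY, user_id INTEGER,
                             total_amount REAL, created_at TEXT);
    """)
    con.executemany("INSERT INTO users VALUES (?, ?, ?)",
                    [(1, "Ada", "ada@example.com"), (2, "Bob", "bob@example.com")])
    orders = [
        (1, 1, 20.00, today - timedelta(days=1)),  # inside the window
        (2, 1, 15.50, today - timedelta(days=7)),  # oldest day still inside
        (3, 1, 99.00, today - timedelta(days=8)),  # too old
        (4, 1, 42.00, today),                      # today: excluded
    ]
    con.executemany("INSERT INTO orders VALUES (?, ?, ?, ?)",
                    [(oid, uid, amt, day.isoformat()) for oid, uid, amt, day in orders])
    return con

if __name__ == "__main__":
    today = date(2026, 3, 9)  # fixed date so results are repeatable
    con = build_test_db(today)
    for user_id in (1, 2):
        print(weekly_stats(con, user_id, today))
```

With this fixture, Ada should come back with 2 orders totalling 35.5 and Bob with 0 and 0, which is exactly the kind of known answer a verify script can assert.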

Next Prompt: "Now, create a Node.js script send_report.js. It should: 1. Use the pg library to connect to a DB (read credentials from process.env.DATABASE_URL). 2. Get all active user IDs. 3. For each user, execute the query in get_weekly_stats.sql. 4. Log the results to the console in the format User: [name], Orders: [count], Spend: [total]. Do not send emails yet. Write a test that mocks the database to verify the logic."

Claude writes the script and a test using jest and pg-mem. I run the test. It fails initially because of a missing mock. Claude reviews the error, fixes the test setup, and re-runs. The test passes. Second atomic task complete.
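The logic in send_report.js is easiest to test when database access is passed in rather than hard-wired. Here is that design sketched in Python (the session's version uses pg and pg-mem): the two data-access functions are hypothetical parameters, so a test can substitute plain dictionaries and lists for a real connection. Formatting spend to two decimals is an assumption about the [total] placeholder.

```python
from typing import Callable, Iterable, List, Optional, Tuple

Stats = Tuple[str, int, float]  # (first_name, order_count, total_spend)

def build_report_lines(
    active_user_ids: Callable[[], Iterable[int]],
    weekly_stats: Callable[[int], Optional[Stats]],
) -> List[str]:
    """One console line per active user; both data-access functions are injected."""
    lines = []
    for user_id in active_user_ids():
        row = weekly_stats(user_id)
        if row is None:
            continue  # no matching user row: skip instead of failing the whole run
        name, count, total = row
        lines.append(f"User: {name}, Orders: {count}, Spend: {total:.2f}")
    return lines
```

In production the two callables would wrap real queries; in the test they are a lambda returning IDs and a dict's get method.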

Final Chain Link: "Finally, integrate the Resend email API. Modify send_report.js. For each user with orders in the past week, use the data from our query to populate a simple HTML email template and send it via Resend. Use the API key from process.env.RESEND_API_KEY. The script should now be executable by a cron job with node send_report.js. Write one final integration test that uses a mocked Resend client to ensure the send function is called with the correct data for a test user."

Claude implements the email sending, writes the integration test, and provides the cron line: 0 9 * * 1 /usr/bin/node /path/to/send_report.js (09:00 every Monday). The entire feature is built in three focused, verified steps. At no point was I presented with a discursive analysis of email providers. I was presented with working code and passing tests at each stage.
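The final integration test checks that the send function is called with the right data, which is simplest when the email client is a parameter. This Python sketch stands in for that step: send is a hypothetical callable that in production would wrap your email provider's client (Resend, in this session) and in tests simply records calls. The subject line and HTML template are placeholders; names are HTML-escaped before they go into the template.

```python
import html
from typing import Callable, Iterable, Tuple

Row = Tuple[str, str, int, float]  # (email, first_name, order_count, total_spend)

def render_email(name: str, count: int, total: float) -> str:
    # Escape user-supplied text before inserting it into HTML.
    return (f"<p>Hi {html.escape(name)},</p>"
            f"<p>You placed {count} order(s) last week, totalling {total:.2f}.</p>")

def send_weekly_reports(rows: Iterable[Row], send: Callable[[str, str, str], None]) -> int:
    """Send one email per user with at least one order last week; return how many were sent."""
    sent = 0
    for email, name, count, total in rows:
        if count == 0:
            continue  # only users with orders in the past week get a report
        send(email, "Your weekly report", render_email(name, count, total))
        sent += 1
    return sent
```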

This session demonstrates the core benefit: reduced cognitive load. You are not managing an AI's brainstorming process. You are managing a checklist. Your role shifts from interpreter and decider to reviewer and integrator. The AI handles the execution and the immediate debugging to meet your clear criteria. This is how you scale your own capabilities. If your skill library starts growing beyond a handful of templates, our guide on managing skill sprawl covers organization patterns.

Got Questions About Actionable AI Prompts? We've Got Answers

Most developers internalize the action-first method within a week; the payoff in Claude, GPT-4, Cursor, and GitHub Copilot sessions is immediate, even during the initial learning curve.

How long does it take to get used to writing prompts this way?

For most developers, the initial shift takes a few hours of conscious effort. The hardest part is breaking the habit of writing conversational, open-ended questions. After deliberately reframing 5-10 real tasks using the atomic deliverable method, it starts to feel natural. Within a week, it should become your default mode for interacting with Claude Code. The payoff in time saved is immediate, even during the learning phase.

What if my task is genuinely exploratory and I don't know the first atomic step?

That's a valid scenario. The framework still applies, but your first "atomic deliverable" is a research artifact, not code. Your prompt becomes: "Research the top three Python libraries for geospatial data analysis in 2026. For each, create a markdown table row with columns: Library Name, Primary Use Case, License, and a one-line code snippet to load a GeoJSON file. The deliverable is a complete markdown table." This gives you a structured, verifiable output from the exploration phase, which you can then use to define your next atomic coding task.

Can I use this framework with other AI coding assistants like GitHub Copilot Chat or Cursor?

Absolutely. The principle of "specific, verifiable tasks over vague deliberation" is universal for AI coding tools. The exact syntax might vary, but the mindset translates perfectly. You'll find that Copilot Chat becomes much more direct when you ask it to "write a test for this function" instead of "help me understand how to test this." The framework is about commanding the AI's capability, not triggering its lecture mode.

What's the biggest mistake people make when trying to be more specific?

The most common mistake is confusing "specific" with "long." People write a 500-word prompt detailing every possible edge case and requirement for a massive feature. That buries the immediate task under requirements for later stages and can still produce a discursive plan instead of working code. The key is to be specific but minimal for the current atomic task. Focus on the one next, verifiable thing. If the task is "create the database schema," don't also describe the API endpoints. Constrain the scope ruthlessly to enable clear verification.

Ready to turn your next complex problem into a solved one?

Atomic deliverables plus scripted verification turn Anthropic's Claude Code from analysis-paralysis machine into a reliable executor -- the same principle applies to OpenAI's GPT-4 and Cursor workflows.

The shift from "think about it" to "do this, then verify it" is the single most effective change you can make in how you work with Claude Code. Ralph Loop Skills Generator is built to enforce this exact workflow, turning your project ideas into sequences of atomic skills with built-in pass/fail criteria. Stop prompting and start producing. Generate your first skill today.

<!-- sister-projects-start -->

Other Doved Studio projects

Related tools from the same studio you might find useful:

- Glean: Turn scrolling time into a daily action plan. Capture, process, execute.

- Popout: Create your portfolio in minutes with a single shareable page.

- Larpable: Spot fake founders, guru grifts, and performance entrepreneurship.

- Doved Studio: The indie studio behind this app and a dozen other tools.

ralph

Building tools for better AI outputs. Ralphable helps you generate structured skills that make Claude iterate until every task passes.